We are influencers and brand affiliates. This post contains affiliate links, most of which go to Amazon and are Geo-Affiliate links to the nearest Amazon store.

Of all the upgrades you can make to your PC as a gamer, one of the most important, if not the most important, is the video card. A new processor brings some improvement, an SSD a bit more, and memory helps a lot too, but a video card can make or break a game. Today I have the pleasure of bringing you a review of the GTX 1070 FTW from EVGA, one of NVIDIA’s lead board partners, if not the lead partner. OK, before we get into the card itself, let’s check out some of the specs.

Specs and Features

- 1,607MHz Base Clock

- 1,797MHz Boost Clock

- 192.8GT/s Texture Fill Rate

- 1920 Pixel Pipelines/CUDA Cores

- 8,192MB, 256bit GDDR5

- 8,008MHz effective Clock Speed

- 2,002MHz Memory

- 256.3GB/s Memory Bandwidth

- NVIDIA SLI Ready

- HB Bridge Support

- Supports up to 4 monitors

- 1 x Dual Link DVI-D

- Up to 2560×1600

- 3 x Display Port 1.4

- Up to 7680×4320

- 1 x HDMI 2.0b

- Up to 3840×2160

- Max Digital Refresh rate: 240Hz

- EVGA ACX 3.0 Cooling

- 10 Phase Power Design

- Simultaneous Multi-Projection

- VR Ready

- NVIDIA Surround

- NVIDIA GPU Boost 3.0

- NVIDIA G-Sync Ready

- NVIDIA Ansel

- EVGA Double BIOS

- NVIDIA GameStream

- Supported APIs

- DirectX 12

- OpenGL 4.5

- Vulkan

- Adjustable RGB LED

Product Details

- Length: 10.5in

- Height: 5.064in

- Width: Dual Slot
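The memory figures in the spec list are related to each other, and a short sketch makes the link visible. This assumes the usual GDDR5 convention of 4 transfers per memory clock and counts 1 GB as 1,000 MB; the function name is made up for illustration.

```python
# Minimal sketch: how the memory numbers in the spec list fit together.
# Assumption: GDDR5 moves 4 transfers per memory clock, and 1 GB = 1000 MB.

GDDR5_TRANSFERS_PER_CLOCK = 4


def memory_bandwidth_gb_s(effective_clock_mhz: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s from the effective clock and bus width."""
    bytes_per_transfer = bus_width_bits / 8
    return effective_clock_mhz * bytes_per_transfer / 1000


memory_clock_mhz = 2002                      # "2,002MHz Memory" from the list
effective_mhz = memory_clock_mhz * GDDR5_TRANSFERS_PER_CLOCK
bandwidth = memory_bandwidth_gb_s(effective_mhz, 256)

print(effective_mhz)        # effective clock in MHz
print(round(bandwidth, 1))  # peak bandwidth in GB/s
```

With the listed 2,002MHz memory clock and 256bit bus, this works out to the 8,008MHz effective clock and roughly 256GB/s of peak bandwidth.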

OK, let’s get on to the unboxing; I am dying to check this out.

EVGA comes out with a bang, releasing NVIDIA’s latest generation GPU based on the Pascal architecture, and it looks nice, doesn’t it?

Ok, let’s go over what comes inside the box first, aside from the card itself.

EVGA includes a huge portfolio that has a bunch of documents and swag.

First off we have the User’s Guide and the Quick Installation Guide, which gives a short walkthrough on how to install the video card into your system. It gives a decent amount of information, though later in this review I show you firsthand how to install it. Be patient, I am sure you will like it.

Because it can be difficult… they include a little document on how to install the SLI bridge, even though the SLI bridge is not included. Also, this leaflet says “New 2-way SLI bridge for GTX 1000 series” and shows an older SLI bridge… odd.

They also include 2 x huge stickers, same sticker really, but one is black and one is white.

And while I am sure no one uses it, since most people don’t have optical drives, they include a drivers CD. I never recommend using these CDs, because the minute these discs are printed they are already outdated. I recommend going to NVIDIA’s site and downloading the latest drivers for this card.

This little case badge is very cool, feels like metal, but I am sure it is very tough plastic. You may think at first that your card did not come with it, but it might be stuck in a manual or something.

It also comes with this awesome poster. Not that I wanted this poster in my room or anything, I am an adult… so I put it in my son’s room, on his perfectly clean and smooth wall, which needs no work. Soon I will be doing some work in my room and I might break a hole in the wall or something; these things happen, you know. I will tell my wife that I will put the poster in our room for now to cover the hole, but that I will fix the hole soon… is what I will tell her at least…

Last but not least, while not inside of the portfolio, underneath the card itself comes the pair of adapters that turn 6Pin PCI-E connections into 8Pin ones. I will go over these on the next page of this review. Now let’s discover the card itself.

[nextpage title=”A closer look at the card”]

Sorry for the tease, but here is the EVGA GTX1070 FTW Gaming ACX 3.0 card. We will have a trip around the card and I will discuss some of the features and specs of the card itself.

Starting from the front of the card, this bad boy allows connecting up to 4 monitors simultaneously, even though it has 5 ports: 3 x DisplayPort, 1 x HDMI and a single Dual Link DVI-D. Thankfully they still support DVI-D, as not everyone has an HDMI or DisplayPort monitor, and DVI-D can display up to 2560×1600, which is still a decent resolution.

There is no fixed rule on how the 4 monitors connect or which of the connections work together, just that out of the 5 connections only 4 will work at once. I was a little confused by this at first, but I joined EVGA’s community and simply asked the question, and one of the knowledgeable members, “arestavo”, answered it. He pointed me to the Surround System Requirements page on NVIDIA’s website, which you can find here: http://www.geforce.com/hardware/technology/surround/system-requirements

And that answered my question. Thank you arestavo and thank you EVGA for your great community.

The card does not come with any adapters to go from DVI to HDMI or from HDMI to DisplayPort, which is a bit of a bummer but not the end of the world. If you have more monitors of one type than the card has ports for, for example 3 x HDMI monitors, make sure to buy adapters ahead of time; it would be horrible to get the card and have to wait for an adapter.

Here are some things that might work for you; you can click on the images to go to Amazon:

Display Port to HDMI

HDMI to Display Port

Just some examples to help you out, there might be better or worse adapters, keep an eye out if you need one.

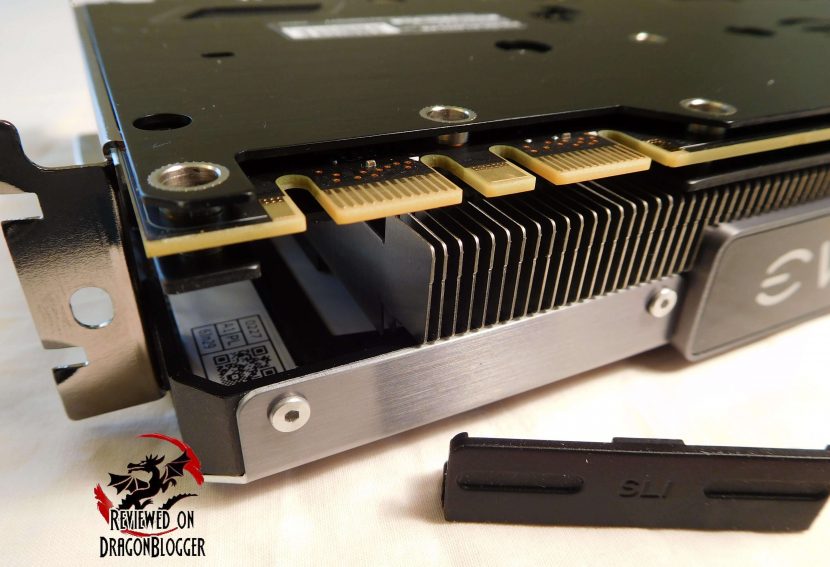

Moving to the right of the card, we find a plastic piece blocking the SLI port or fingers, let’s remove that.

Here are the naked SLI fingers, careful if there are children around. Here you can also see the beginning of the fin design, which EVGA calls its Optimally Tuned Fin Design.

Back to the SLI fingers, if you wanted to use SLI, you could always use the old style ribbon SLI bridges, but you would potentially lose performance. This SLI bridge is not included, but can be purchased separately.

EVGA introduced their Pro SLI Bridge HB which provides improved performance with their High Bandwidth Technology available currently only on the GTX 10 series of cards. The extra bandwidth can be used in games at 4K at 60Hz and above. The 100-2W-0025-LR model also allows you to change colors from Red, Green, Blue and White. Please remember, this SLI bridge is not included and must be purchased separately.

Then off a little more to the right, we find the EVGA Logo on the card, as well as the model.

This is not just a logo and model to show and brag about; there is a bit of a gimmicky function to it. The badge itself actually changes colors and lighting patterns. This is their Adjustable RGB LED lighting design, which allows the LEDs to be controlled through EVGA’s PrecisionX; I will go over this later in the review. You will also see the fins continue along the side.

Slightly more to the right we find more of those fins, but we also find 2 x 8 PIN PCI-E power ports. Oh yeah, more fins too.

Don’t worry if you don’t have any 8Pin PCI-E connections on your power supply; EVGA includes 2 adapters that each turn a pair of 6Pin PCI-E connections into a single 8Pin, which means you will need 4 x 6Pin PCI-E connections free. Seems like a lot, but if you have them then you are good. Below I show you 2 potential alternatives if you don’t have these connections.

This might work for you too, just in case. It is an adapter that will take you from 2 x Molex connections to a single PCI-E 8Pin connection.

Or maybe 2 x SATA power connections to an 8Pin connection.

Please remember, the card does not come with these adapters; you would need to buy them separately.

Now if you are buying this video card, chances are you have a power supply that has these connections, but if yours does not, you will want to make sure you have at least a 500 Watt power supply, something like the EVGA 500B Bronze Power Supply. The card itself requires 215 Watts (at the default speeds); the additional power is for the rest of your system.

Each PCIe 8Pin connection provides 150 Watts of power to the card, so that’s 300 Watts from the 2 connectors. Against the 215 Watts the card requires, that leaves 85 Watts to spare, and actually you have more, because the PCIe slot provides another 75 Watts. The extra power is for overclocking, so you have some room to play with, but be careful; you don’t want to melt the card.
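The power math above can be sketched in a few lines. The wattage figures are the ones quoted in this review; the function and constant names are made up for illustration.

```python
# Minimal sketch of the power budget described above.
# Figures come from the review text; names are illustrative only.

PCIE_8PIN_WATTS = 150   # per 8Pin PCI-E connector
PCIE_SLOT_WATTS = 75    # delivered through the PCIe slot itself
CARD_DRAW_WATTS = 215   # card requirement at default speeds


def power_headroom(num_8pin: int, include_slot: bool = True,
                   card_draw: int = CARD_DRAW_WATTS) -> int:
    """Watts left over after the card's default draw is covered."""
    available = num_8pin * PCIE_8PIN_WATTS
    if include_slot:
        available += PCIE_SLOT_WATTS
    return available - card_draw


print(power_headroom(2, include_slot=False))  # connectors only
print(power_headroom(2))                      # connectors plus the slot
```

Counting only the two 8Pin connectors leaves 85 Watts of headroom; adding the slot raises that to 160 Watts.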

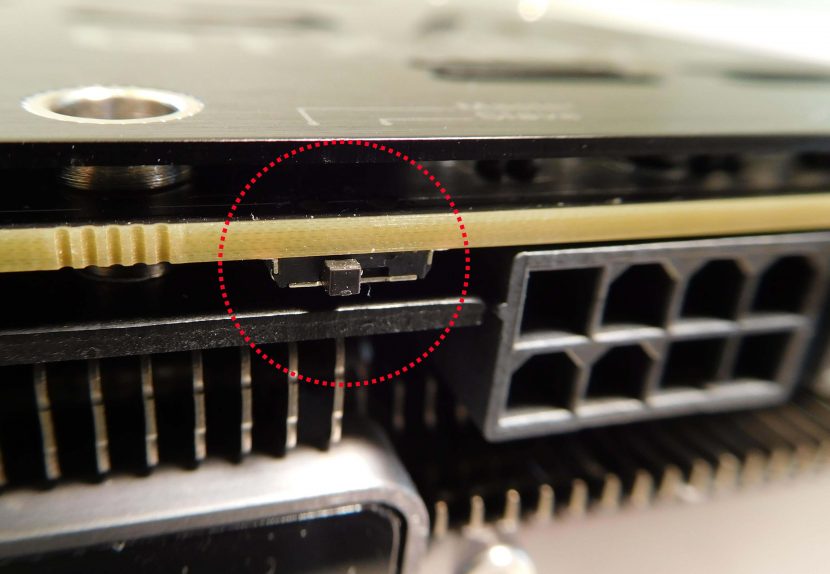

Now, this is a double bios/firmware card, meaning you can have a stock clock on the first BIOS profile and an overclocked one that you can push as hard as you like. If you overclock it too much and the card won’t boot anymore with that firmware, you can simply flick a switch and you are back to your old profile. The switch was hidden in the picture I showed you with the 2 x 8Pin PCI-E connections, but let me make it a little easier for you to see; I will zoom in and even circle it for you.

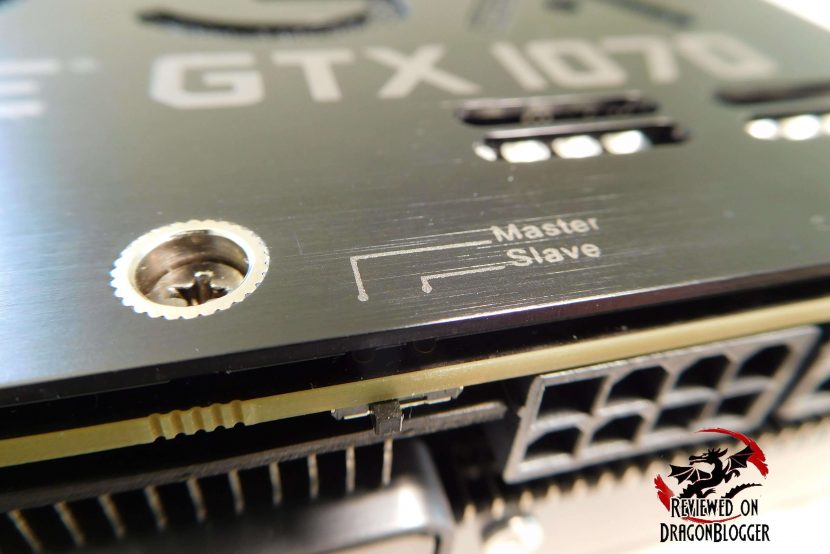

On the back-plate of the card, which I will go over a little later in the review, you can actually find where the switch is called out.

They have the Master/Slave switch silk-screened here, brings me back to the days of old IDE hard drives. We will come to the back-plate soon, but let’s keep going to the right on this card.

Coming around to the back, we find where the LED lights are connected as well as the ends of the heat-pipes, helping keep these cards nice and cool, of course with the aid of the 2 fans included.

Coming around more to the right, we find a ton more fins to exhaust air onto the PCI-E slots and the board, and of course the actual PCI-E connection itself. The card supports PCIe 3.0, PCIe 2.0 and even first-generation PCIe. I would not recommend using a first-generation slot; I would recommend at least a 2.0 slot, for bandwidth’s sake.

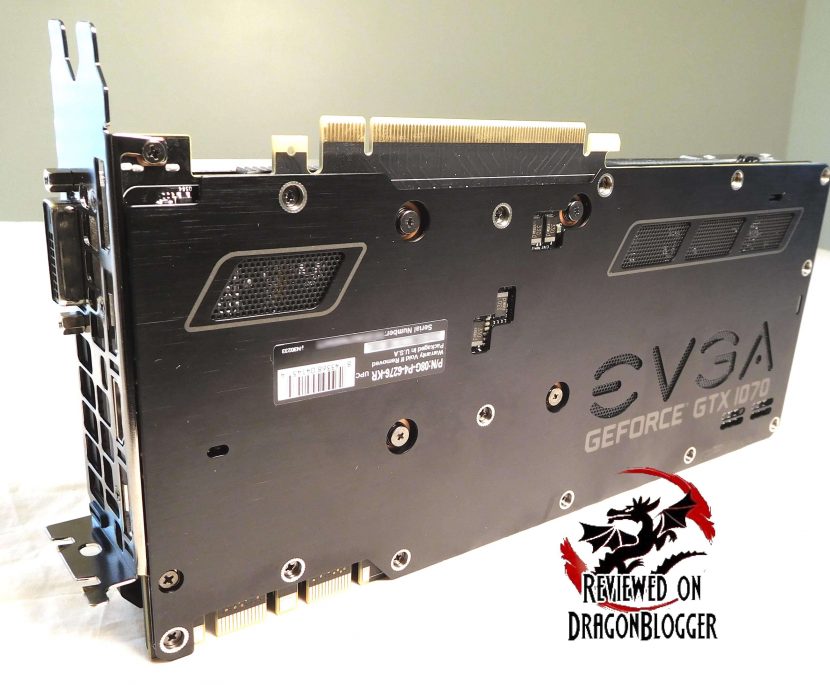

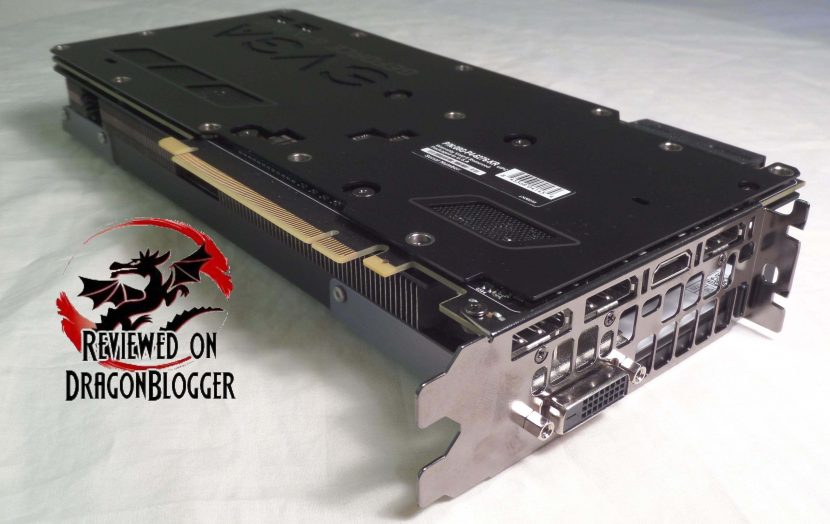

Now we are on the back; I would say we are saving the coolest part for the end, even though the backplate is cool. It has vents in certain spots and even an EVGA logo cutout, as well as GEFORCE GTX 1070 silkscreened onto the backplate.

I think this does look pretty cool, and it helps a little of the radiating heat pass through. EVGA also has notches cut out so the backplate does not ground the solder points for the PCIe connections. Here you can also see the double BIOS switch.

Then a few other vents and cutouts here. Let’s check out the top side of the card now, the best for last.

Here again is the top of the card, I would say it’s pretty sexy and while a bit gimmicky, it is somewhat functional.

So starting at the center of the card, we have the EVGA / GEFORCE GTX 1070 badges.

At the top of the card, we have the ACX 3.0 silk screen on the metal retainer, looks nice but then we have the bolted on EVGA badge. This is pretty cool I think and lends to the industrial type of look EVGA is obviously going for with the 10 series of cards.

Towards the bottom of the card, we have another bolted on badge, the “GEFORCE GTX 1070” badge.

Around the card, we have this industrial bolted on look, with these white patches. This is along the bottom, closest to the DVI connection on the front of the card.

This is at the front of the card, towards the top of the card.

This is along the back of the card, near the heatpipes, next to the double bios switch.

This is towards the bottom of the card towards the heatpipes, where the motherboard would be.

These white patches are not just white patches; they are the pieces that serve a little of the gimmicky portion, EVGA’s adjustable RGB LED.

Here you can see the LED’s colored blue inside of my system. A first glimpse maybe for many of you on EVGA’s adjustable RGB LED. It looks nice doesn’t it?

Please don’t get confused when I say gimmicky thinking that I don’t like it. I do like the LED lights, but it serves no practical purpose, aside from looking cool and maybe giving more of a purpose to having a windowed side panel, even though I do like to look at my gear at all times.

I will get into the RGB lighting a little later on in the review, in a video as well to show you guys just how it works, but a tiny bit more on the card itself first.

So yeah, there are fans, 2 of them actually, but these are not your typical fans from yesteryear. There is more behind these 2 seemingly standard fans.

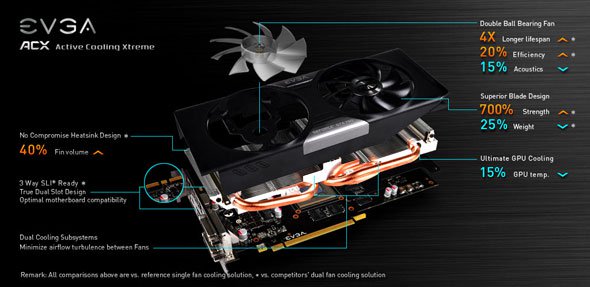

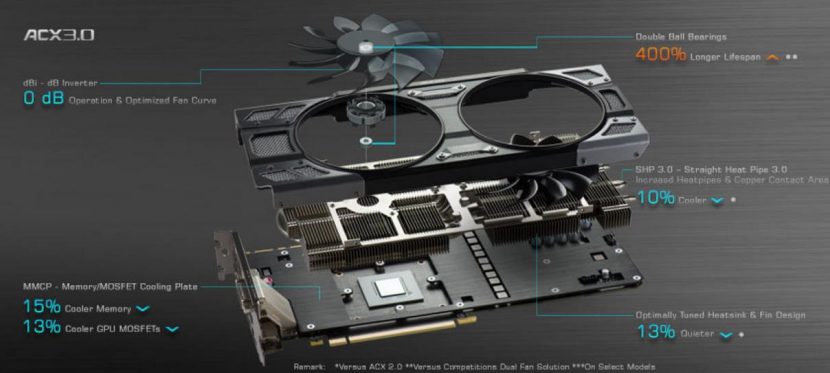

EVGA has implemented ACX 3.0 on this series of cards. There is not too much of a difference between 2.0 and 3.0, though that minute difference is still a good one. ACX 3.0 claims a heatsink and fin design that is 13% more optimally tuned than ACX 2.0. Along with the fin and heatsink design update, they have also increased the heatpipe and copper contact areas.

ACX 1.0

ACX 2.0

ACX 3.0

From these, you can see how ACX has evolved a bit over time. Each iteration cools better than the previous ones, as you can see from the screenshots above, but why wouldn’t it? Why would you buy something that worked no better, or far worse, than the one you currently own, right?

I won’t really go over 1.0, but I included it here if you wanted to check it out, but from 2.0 to 3.0, you can see how the cooling would have improved because of the heatpipe design, and yeah copper is a big one too.

The fans are not the only reason the card/GPU is cooler; the GTX 1070 is based on NVIDIA’s Pascal architecture. While offering over 3 times the bandwidth of Maxwell (the generation ACX 2.0 was primarily used on), Pascal is more power efficient and so generates less heat, because it is built on the 16nm FinFET manufacturing process. Maxwell was built on a 28nm process, and Kepler was as well.

The FinFET process is important not only for NVIDIA but for AMD as well. AMD’s latest iteration of GPUs, based on Polaris, also uses this type of manufacturing process, though NVIDIA’s is 16nm and AMD’s is 14nm. Not important in this review, but I was going down the rabbit hole, found no way out and had to continue.

OK, so I have talked a lot about this card; I typed so much I think I fell asleep and sleep typed. Now I am sure you want to get to the benches to see if this card is worth it. I do too, but first we need to find out how to install it. This next chapter will go into installing the card.

[nextpage title=”Installing the EVGA Geforce GTX1070 FTW ACX 3.0″]

Some of my more advanced readers might think it’s a little dumb that I am showing how to install a video card; you may already know how to, and if so this video might not be for you. I am providing this video for those of you who don’t know how to install a card, to save you some money and time. Aside from saving you money, I am hoping to give you some confidence to install and upgrade your video card on your own, and even other devices.

Check out this video, hopefully it helps you out.

So here she is installed into the Anidees AI Crystal chassis. The case has a slightly tinted side panel, so I removed it to show you the card in all its glory, plus I didn’t want to catch any of the glare.

OK, enough with this, I hope it helped you but let’s get into the benchmarks.

[nextpage title=”Benchmarks, Performance, Temperatures and Power Consumption”]

Before we get into the benchmarks, here are my system specs; maybe compare them with your own system to get an idea of what kind of performance you would get.

- Anidees AI Crystal Case:https://geni.us/6NAIJBN?ygdb

- Intel Core i7 5930K Processor:https://geni.us/6NAIJBN?4C8Itd

- EVGA X99 Classified Motherboard:https://geni.us/6NAIJBN?E9eamo

- Arctic Liquid Freezer 240MM CPU Liquid Cooling:https://geni.us/6NAIJBN?vEaJAf

- Kingston HyperX Predator 3000Mhz 16Gig:https://geni.us/6NAIJBN?w9kPe5

- Sapphire Nitro RX 480 Video card:https://geni.us/6NAIJBN?zeF3

- Samsung 850 EVO 500GB SSD:https://geni.us/6NAIJBN?1gf0fs

- Hitachi 1TB SATA 3G HD:https://geni.us/6NAIJBN?pU2QOo

- Patriot Ignite 480GB SSD:https://geni.us/6NAIJBN?eoPVsG

- Kingston HyperX 240GB SSD:https://geni.us/6NAIJBN?8leEDW

- Plextor 256GB PCIE SSD:https://geni.us/6NAIJBN?gVBR

- Cooler Master Silent Pro Gold 1200W Power Supply:https://geni.us/6NAIJBN?Umwm

- Microsoft Windows 10 Professional:https://geni.us/6NAIJBN?GYbBRY

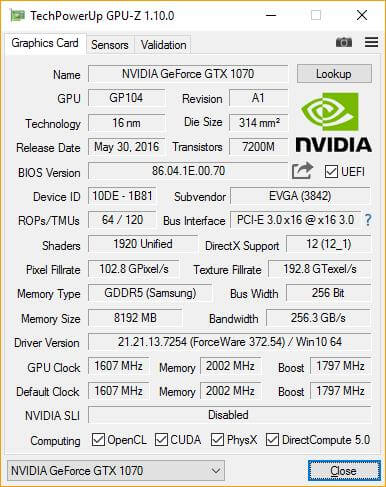

Here are the clocks and specs reported by TechPowerUp’s GPU-Z.

These readings might come into play a little later in the review, so memorize them. You can see for these benchmarks I was using NVIDIA’s driver version 372.54 for Windows 10 64-bit.

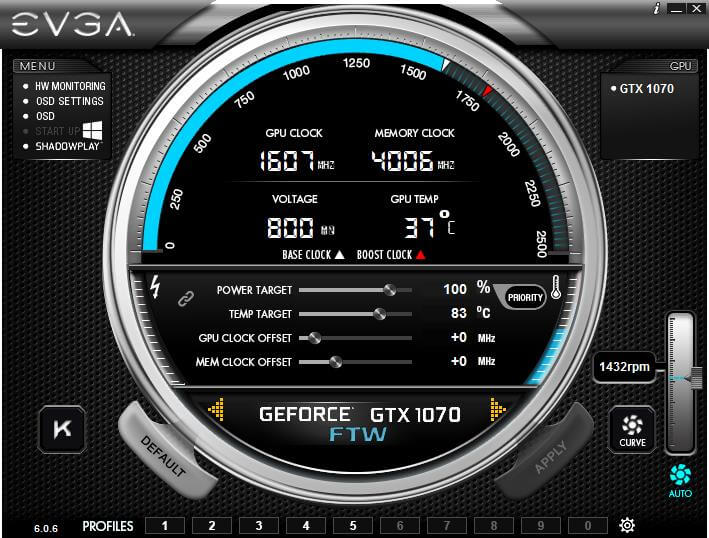

EVGA also has their own utility that lets you see a ton of specs and also allows you to do some tweaking.

From here you can see the active GPU Clock, Memory Clock, Voltages, GPU Temperatures and even the active fan RPM’s. There is a bit more you can see from here, but I will get into that a little later.

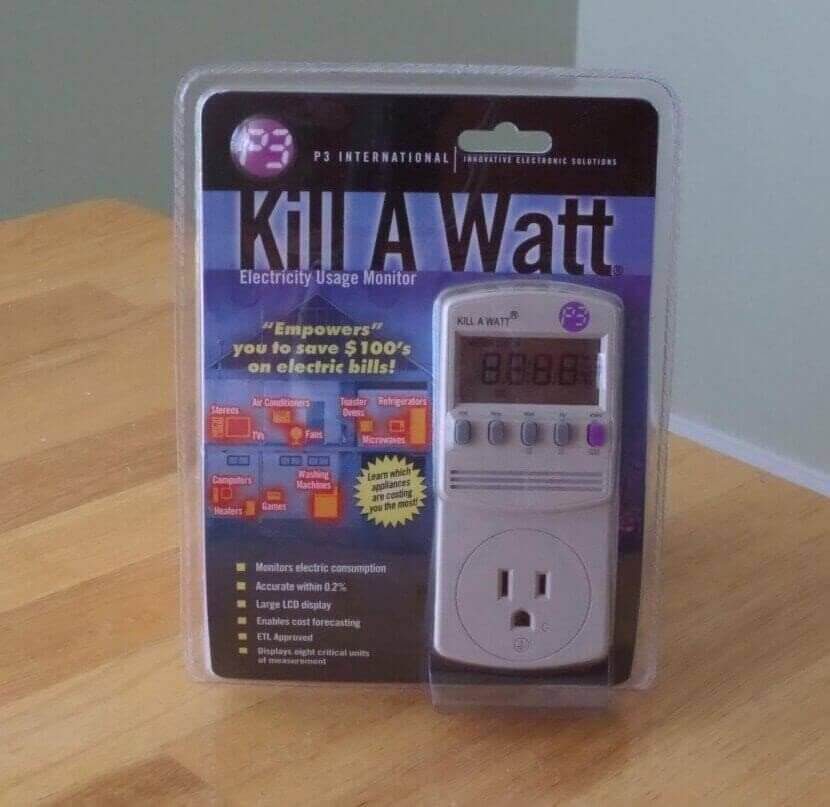

For the benchmarks you are about to see, aside from the actual results, I provide temperatures and power consumption. For the power consumption, I test Minimum, Average and Max usage using the “Kill A Watt” by “P3 International”.

The programs I am using to benchmark are the following.

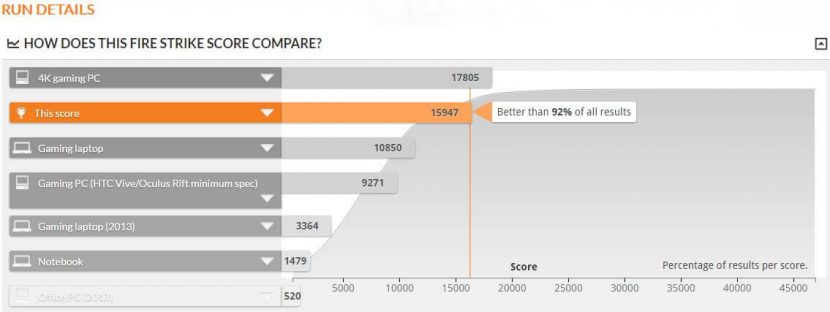

- FutureMark’s 3DMark Fire Strike

- Metro Last Light

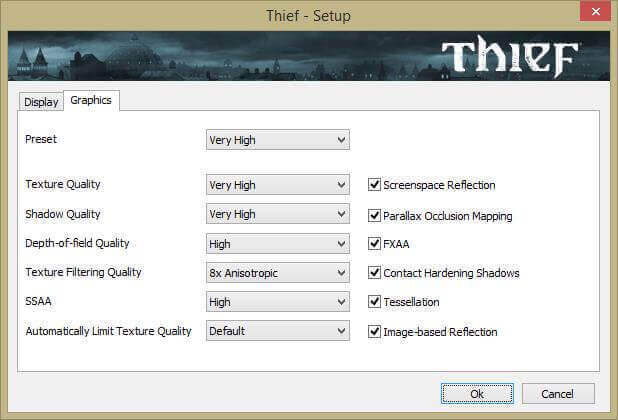

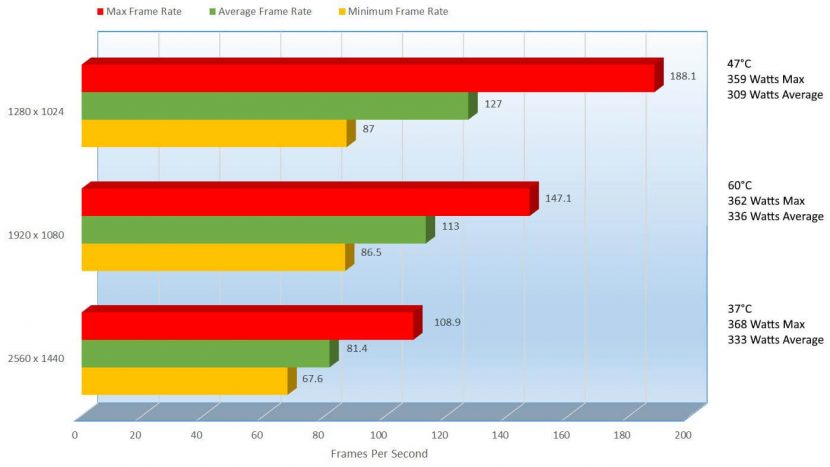

- Thief

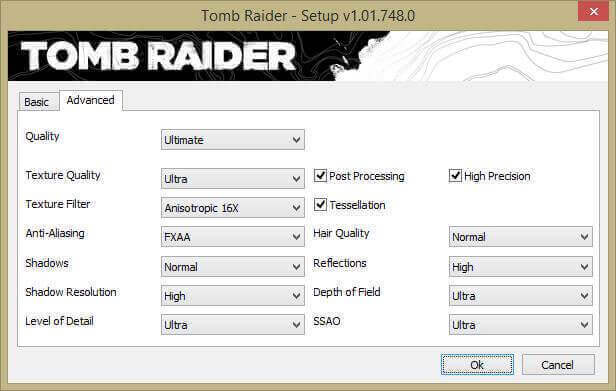

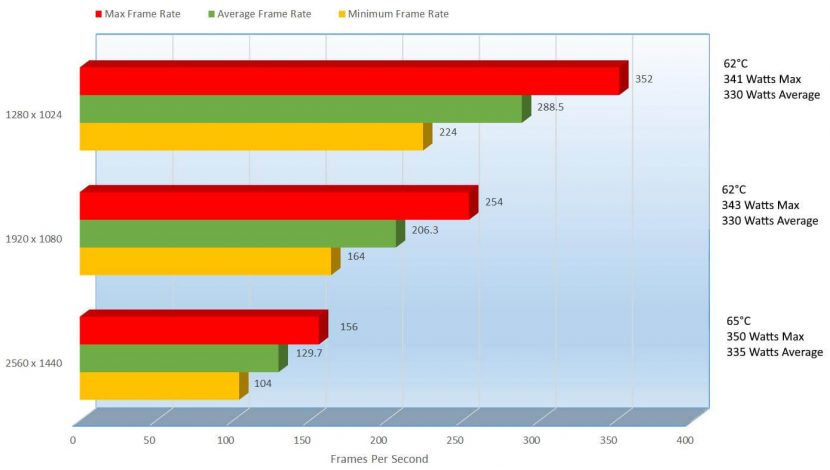

- Tomb Raider

- Ashes of the Singularity

- Tom Clancy’s The Division

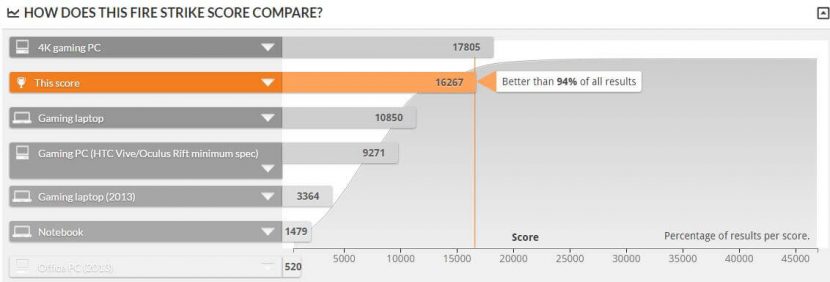

An awesome score; the end result is better than 92% of all reported results. The lowest wattage reached while benchmarking was 228 Watts, the average power consumption was 333 Watts and the peak was 368 Watts.

Now, these cards are 0dB until they hit 60°C, which scared me a bit when I first started benching. I didn’t freak out at first because I knew about the feature, but while benching I was looking at the card and did not see the fans spinning. Maybe my eyes went static, but I know my blood pressure was steadily increasing, and then suddenly an amazing thing occurred: the fans actually started spinning. The hottest this card got in 3DMark was 65°C; that’s pretty sweet.

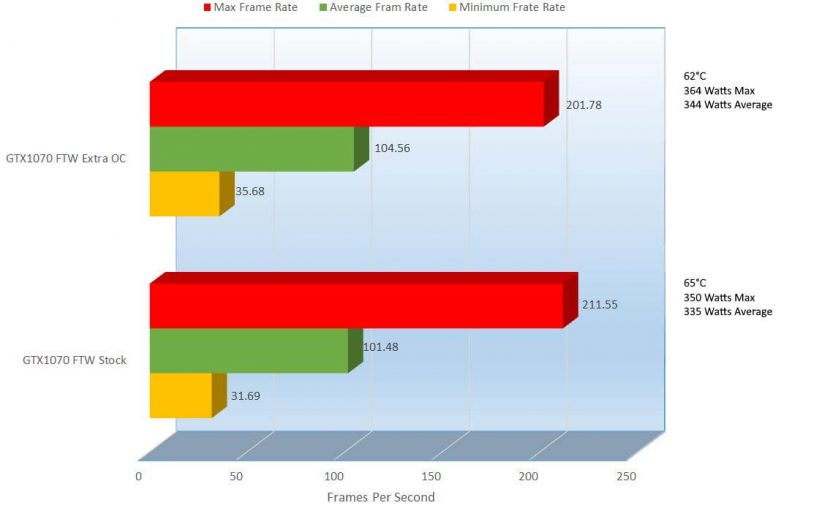

Now, one of the most grueling benchmarks is Metro Last Light, potentially due to poor optimization of the game, or the fact that it is just that rough. Let’s check out Metro Last Light’s benchmarks.

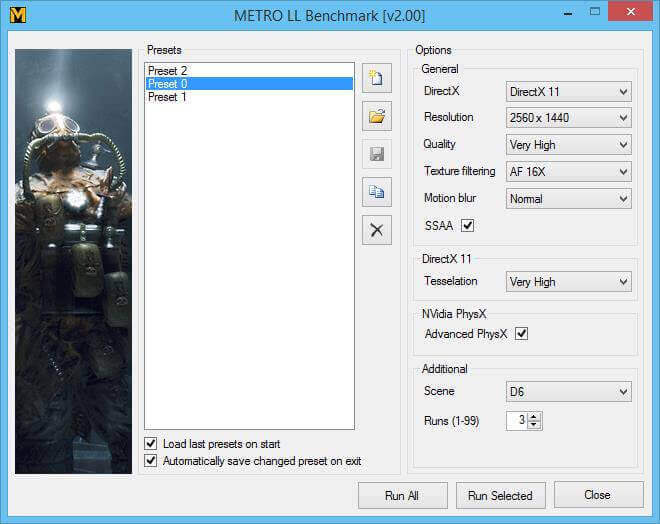

Throughout the benchmarks I keep the settings the same only changing the resolution. Here are the presets.

As I mentioned, I keep the settings the same in all 3 of the presets, only changing the resolution.

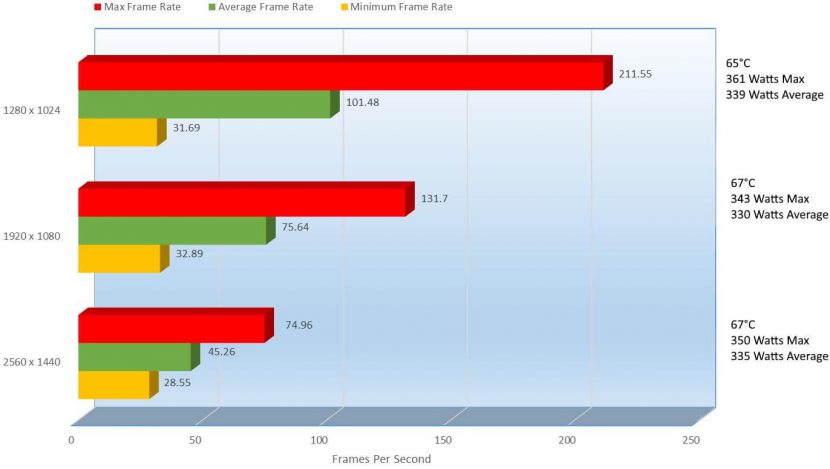

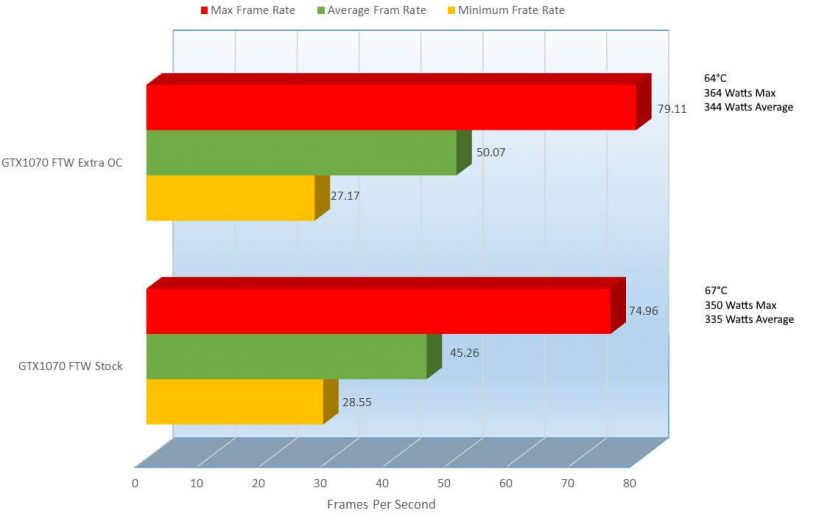

Some of the best performance I have seen in my time reviewing; I am impressed so far, but there is still a long road ahead. We were able to get 45.26 frames per second here at 2560 x 1440, which I know is not great considering 60FPS is the sweet spot. The game does not start to get choppy until the end, where the huge militia starts breaking through the wall and bullets are flying everywhere, so it is still playable. Results are results though, and I will not stray from them: 45.26FPS it is. The numbers are presented for a reason; you want at least 60FPS at all times, if not better.

So the 45.26FPS was at a resolution of 2560 x 1440, but if we tone it down a notch to the more common 1920 x 1080, we can see the card start to shine in Metro Last Light. At 1920 x 1080 we see 75.64FPS, a 50.26% difference from 2560 x 1440 when measured against the average of the two results.

At 2560 x 1440 we reached an average of 335 Watts, and at 1920 x 1080 the average was only slightly lower at 330 Watts. Lowering the resolution only dropped the power consumption by 1.50%; both are still very low.

As a gamer, I want FPS, but I want to be able to see as much as I can in a game. While going to a lower resolution helps with FPS, I prefer 2560 x 1440, so at that point I would just dial down some of the eye candy and surely it would reach 60FPS, or even higher.
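The percentages quoted in these benchmarks line up with a symmetric percent difference, meaning the gap between two results divided by their average. That is an inference from the published numbers rather than a stated method, so treat the sketch below as one way to reproduce them; the function names are made up for illustration. The more common "relative change" (gap divided by the starting value) is shown alongside for comparison.

```python
# Minimal sketch: two ways to express the gap between two benchmark results.
# Assumption: the review's percentages use the symmetric form (gap / average),
# which is inferred from the numbers, not stated by the review.


def percent_difference(a: float, b: float) -> float:
    """Gap between a and b as a percentage of their average."""
    return abs(b - a) / ((a + b) / 2) * 100


def relative_change(old: float, new: float) -> float:
    """Change from old to new as a percentage of old."""
    return (new - old) / old * 100


fps_1440p, fps_1080p = 45.26, 75.64       # Metro Last Light averages
watts_1440p, watts_1080p = 335, 330       # average system power draw

print(round(percent_difference(fps_1440p, fps_1080p), 2))
print(round(relative_change(fps_1440p, fps_1080p), 2))
print(round(percent_difference(watts_1440p, watts_1080p), 2))
```

For the Metro Last Light frame rates the symmetric form gives 50.26%, while the relative change from 45.26FPS to 75.64FPS is about 67.12%, so it is worth knowing which one you are reading when comparing against other reviews.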

In the end, we got here some of the best results in Metro Last Light; I think though that our thieves might be a little jealous and try to steal the show. Let’s go see what THIEF shows.

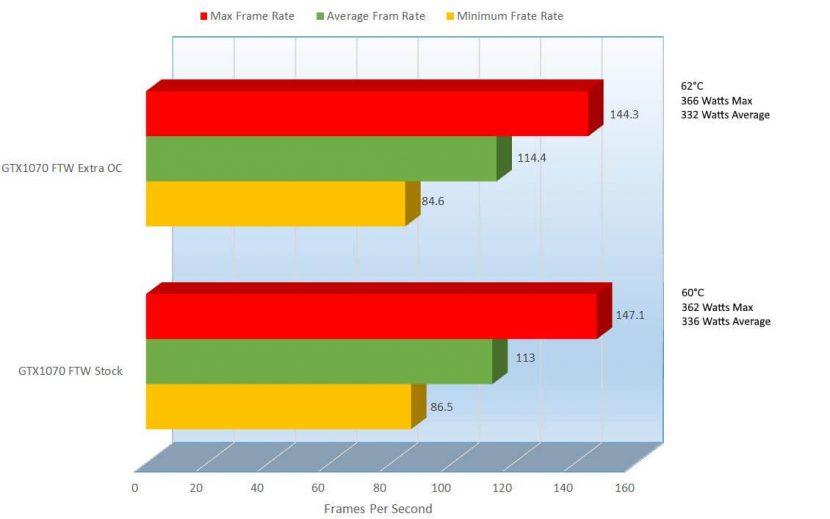

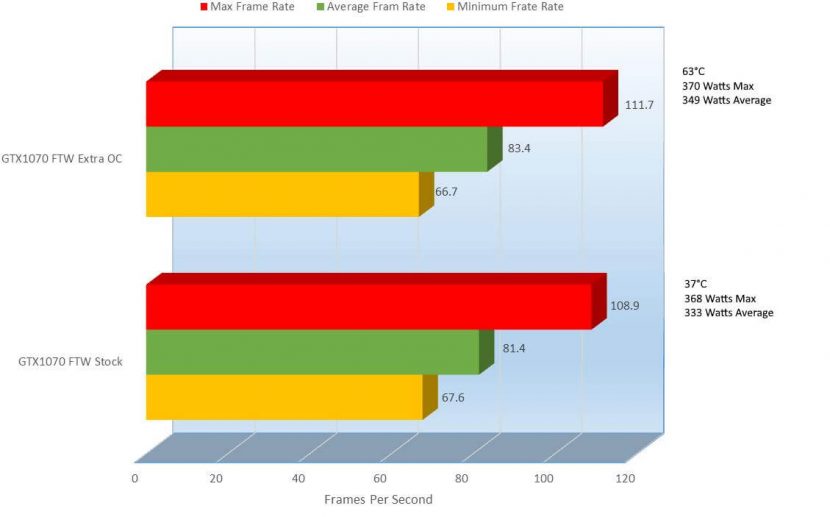

Thief blew everything away, showing you some more of the power of the card, with a few oddities. First off, at 2560 x 1440 we passed 60FPS and went further, with 81.4FPS on average; even the lowest frame rate was over 60, at 67.6FPS. Very impressed. Here the average power consumption was 333 Watts.

If you were looking at the differences between 2560 x 1440, 1920 x 1080 and 1280 x 1024, you would have noticed something a little odd. At 1920 x 1080 the card consumed more power on average than at 2560 x 1440, only a 0.90% difference, but not only that, it hit 60°C, while at 2560 x 1440 the fans did not even turn on. Very strange, but I was in front of the PC during every benchmark and saw these with my own eyes. No worries though, because if you can, you will be playing at 2560 x 1440. If you can’t, the barely 1% power difference and maybe a little extra noise with the fans on won’t bug anyone.

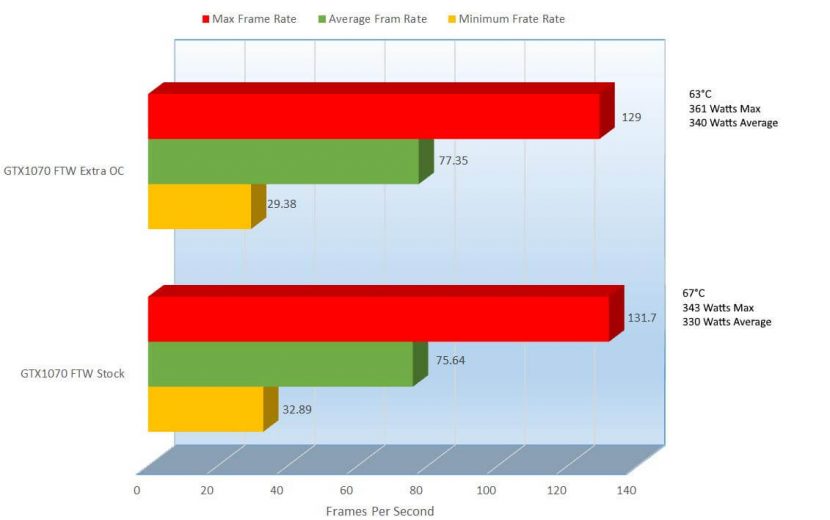

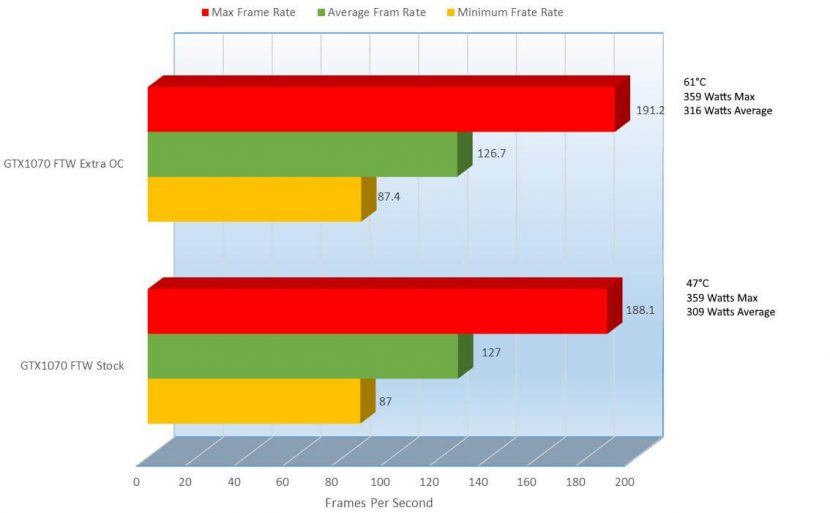

Talking about not bugging anyone, let’s have a chat with our friend Lara over at Tomb Raider.

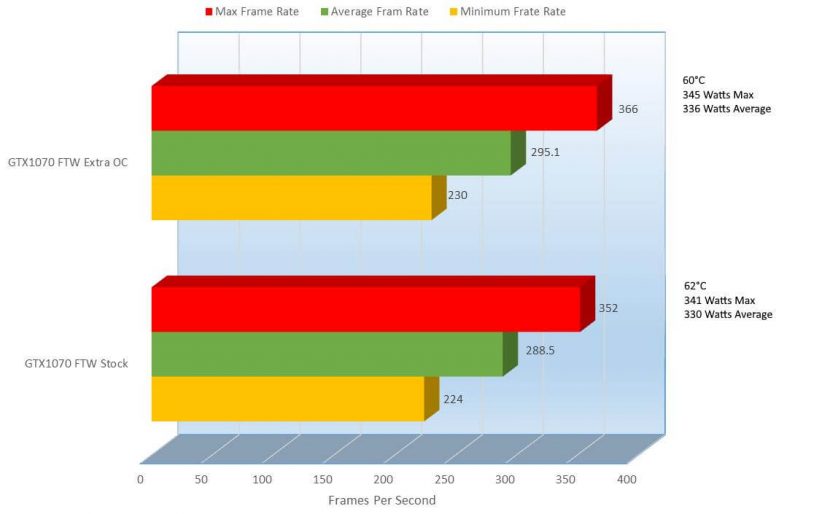

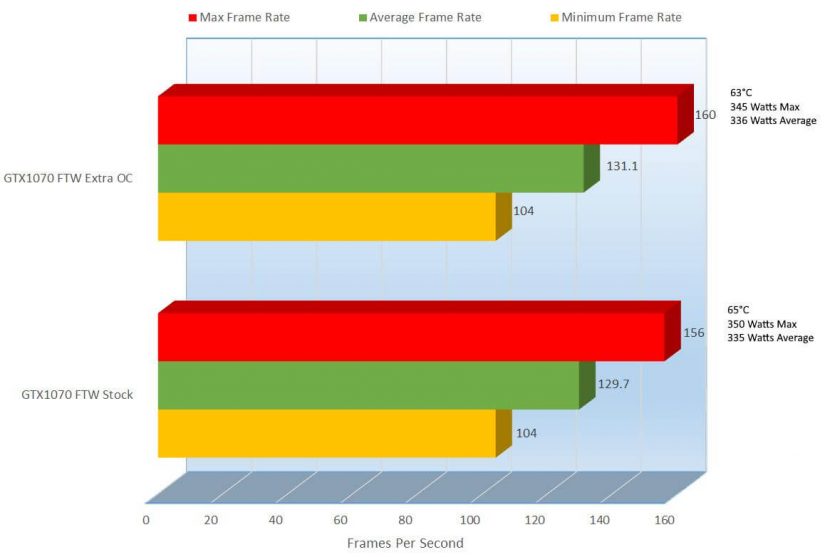

The performance here is amazing; at 2560 x 1440 we can see an average FPS of 129.7, more than double the acceptable FPS. At that frame rate we consumed only 335 Watts on average. If you needed to tone down the resolution though, you would see a 45.60% FPS increase to 206.3FPS at only 330 Watts average.

All this action on earth can get a little boring though, so let’s go out of this world with DX12 and Ashes of the Singularity.

As I mentioned, Ashes of the Singularity is a DX12 gaming, though to actually get it to work in DX12 is not as easy as going into settings and selecting DX12, as most of us would have tried. In Steam, when you are about to click on this game to get it started, instead you would lick click on the game like this.

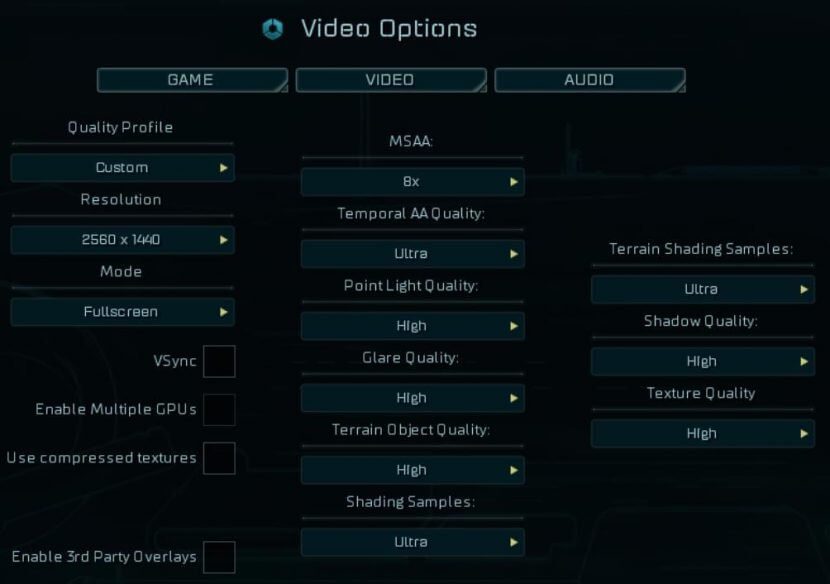

In the drop down of the game, you will find “Launch DirectX 12 Version (Windows 10 Only)”. As you might have been able to gather, this will only work in DX12 because Windows 10 is the only OS that works with DirectX 12. Here are the settings I use in the benchmark, of course aside from changing the resolution.

Before you run the benchmark though, you are greeted with the configuration menu to start the benchmark, here you can verify if you are running DX12

Ok, so let’s get to the benchmarks.

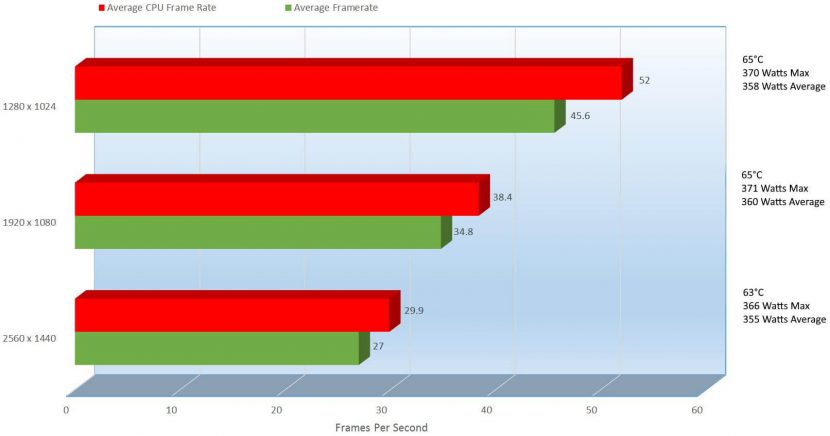

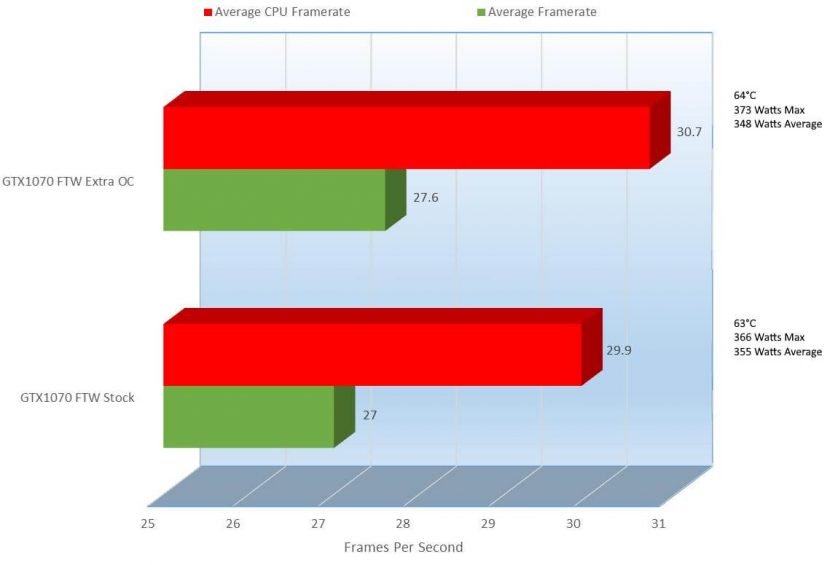

We can see here where the extra money comes into play. On lower end cards, we can see that the CPU picks up the slack where the GPU might fall short, but here we can see that the CPU is on par with the GPU.

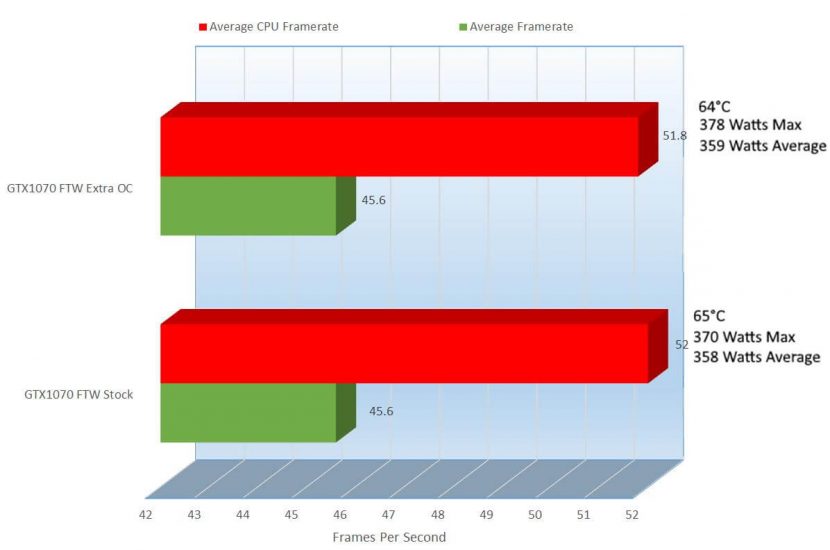

At 2560 x 1440, we can see that the average frame rate for the GPU was 27FPS; the average for 1920 x 1080 was 34.8FPS, a 25.24% improvement. Now this can look very deceiving, and many, including myself, would think that 27FPS or 34.8FPS is 100% unplayable, but actually that’s where the CPU helps. To show you what this looks like, I recorded a video showing gameplay of this, as well as a few other games that I will show a little later in this review, so keep your eyes out for it.

Let’s see what Mr. Clancy has to say with the Division.

Here are the settings I use in The Division; as in the previous benchmark results, I only change the resolution.

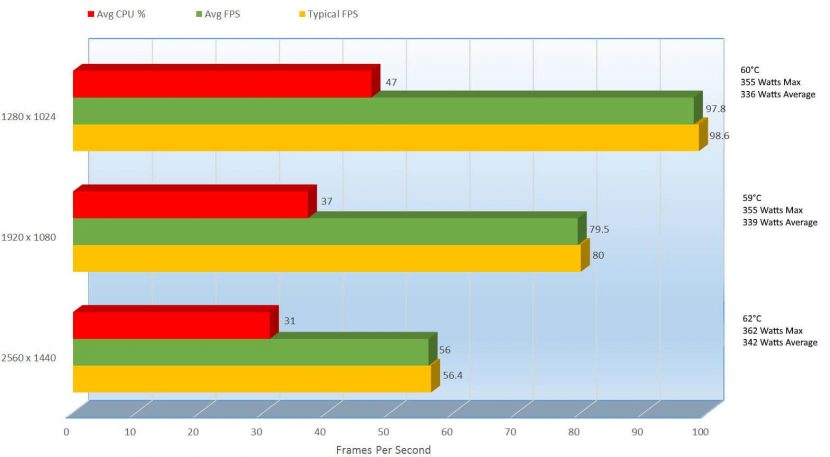

Like Ashes of the Singularity, Tom Clancy’s The Division is optimized to take better advantage of the CPU. Here we can see that the average FPS is 56FPS, and the CPU helps a little tiny bit at 31%. At 2560×1440 running at 56FPS is very acceptable, especially only running at 62°C and an average power consumption of 342 Watts. At 1920 x 1080 a standard resolution, we were able to get an average of 79.5 FPS running at 59°C which means the GPU fans never turned on and an average of 339 Watts, a 34.69% improvement in FPS.

Now, not everyone can make the conversions on what FPS means in a game, so I try to help you on this. In this next chapter I play a few games to show you how the game runs.

[nextpage title=”Gameplay and Performance”]

Now for my favorite part of the review process, the gameplay; I like to have fun too. To show you how games run, I will play for you Grand Theft Auto V, Battlefield 4, Tom Clancy’s “The Division” and I also added Ashes of the Singularity to help better explain why the FPS looks so low while the game plays so well. Not everyone plays the same kind of game, so I try to play ones that will work with everyone’s preferences.

Grand Theft Auto V running at Ultra:

Not bad; Grand Theft Auto V can be a bit rough on video cards, but let’s see what Battlefield 4 runs at.

Ok, let’s do some 3rd person game play with Tom Clancy.

Sorry about this one guys; for Ashes of the Singularity, this was the first time I played (well, I started recording at least 30 minutes into it), so I really don’t know what everything is just yet, but it’s relatively easy to get a handle on. This is an RTS, and I love Command & Conquer, so that helped me learn this game, but this game also gives you an idea of what the FPS means.

Ashes of the Singularity:

Well, maybe you are not happy with the performance you saw here, and I get that. For those of you that might not be happy, the next chapter might make you a little happier. In this next chapter I show you how I overclock and then I show the performance again.

[nextpage title=”Overclocking Performance, Benchmarks, Temperatures and Power Consumption”]

Some might want to squeeze a little extra performance out of their video cards. I get that, though this is the FTW, so it is already overclocked. Just because it is overclocked does not mean we can’t squeeze out some more, and I go over this a tiny bit.

Overclocking, especially on a card that is already factory overclocked, is not an easy thing; it takes hours, sometimes days, to get everything just right. To make things easier for you, I show you how I overclocked, but just know that this overclock may not work on your card, even if you buy the same one.

Let me show you GPU-Z’s representation of my card before and after the overclock.

BEFORE AFTER

With this overclock I was able to squeeze an additional 70MHz out of the GPU and 33MHz out of the memory, which also puts the Boost clock 70MHz above stock. With that tiny overclock I was able to get an additional 4.5 GPixel/s out of the Pixel Fillrate, 8.4 GTexel/s out of the Texture Fillrate and 4.2 GB/s of additional bandwidth. So with this, I will compare the stock speeds with the newly overclocked speeds in the benchmarks; this is also a good way to make sure the overclock is stable.
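The fillrate and bandwidth gains follow directly from the clock bumps, and a short sketch shows how. The 64 ROP and 120 texture unit counts are the standard GTX 1070 figures and are an assumption here, since the review does not state them; the 256bit bus comes from the spec list, and the function names are made up for illustration.

```python
# Minimal sketch: deriving the fillrate and bandwidth gains from the clock offsets.
# Assumptions (not stated in the review): 64 ROPs and 120 texture units,
# and GDDR5 moving 4 transfers per memory clock.

ROPS = 64
TEXTURE_UNITS = 120
BUS_WIDTH_BITS = 256
GDDR5_TRANSFERS_PER_CLOCK = 4


def pixel_fillrate_gain(core_offset_mhz: float) -> float:
    """Extra pixel fillrate in GPixel/s for a given core clock offset."""
    return ROPS * core_offset_mhz / 1000


def texture_fillrate_gain(core_offset_mhz: float) -> float:
    """Extra texture fillrate in GTexel/s for a given core clock offset."""
    return TEXTURE_UNITS * core_offset_mhz / 1000


def bandwidth_gain(memory_offset_mhz: float) -> float:
    """Extra memory bandwidth in GB/s for a given memory clock offset."""
    effective_offset = memory_offset_mhz * GDDR5_TRANSFERS_PER_CLOCK
    return effective_offset * (BUS_WIDTH_BITS / 8) / 1000


print(round(pixel_fillrate_gain(70), 1))    # GPixel/s
print(round(texture_fillrate_gain(70), 1))  # GTexel/s
print(round(bandwidth_gain(33), 1))         # GB/s
```

Under those assumptions, a 70MHz core bump and a 33MHz memory bump give roughly 4.5 GPixel/s, 8.4 GTexel/s and 4.2 GB/s, matching the gains GPU-Z reported.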

To overclock, I used EVGA’s Precision X, a free download EVGA provides, you just need an account on EVGA’s site to download it.

Here are the default clocks and settings in Precision X

BEFORE

AFTER

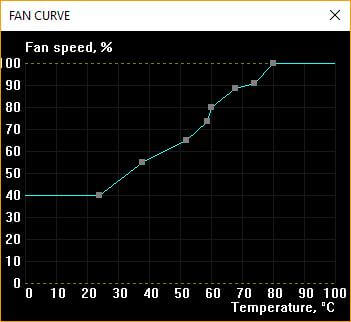

Using the “Curve” button

Which brings up the Curve menu

With that, you can set your curves for how you would like the fans to ramp up as the heat rises.

Clicking the Fan Curve button actually brings up this menu and the Fan curve menu, but I won’t go into this one right now, I will a little later in the review.
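To give an idea of what a fan curve does, here is a minimal sketch that maps a temperature to a fan speed by drawing straight lines between a few points. The curve points are hypothetical and are not the values used in this review or Precision X defaults; only the idea of the fans staying off below 60°C comes from the card’s 0dB behavior mentioned earlier.

```python
# Minimal sketch of a fan curve: linear interpolation between set points.
# The points below are hypothetical, chosen only for illustration.
from bisect import bisect_right

FAN_OFF_BELOW_C = 60  # fans stay off (0dB) under this temperature
CURVE = [(60, 30), (70, 50), (80, 80), (90, 100)]  # (temp in °C, fan speed in %)


def fan_speed_percent(temp_c: float, curve=CURVE, off_below=FAN_OFF_BELOW_C) -> float:
    """Fan speed in percent for a given GPU temperature."""
    if temp_c < off_below:
        return 0.0
    temps = [t for t, _ in curve]
    if temp_c <= temps[0]:
        return float(curve[0][1])
    if temp_c >= temps[-1]:
        return float(curve[-1][1])
    i = bisect_right(temps, temp_c)          # first point hotter than temp_c
    (t0, s0), (t1, s1) = curve[i - 1], curve[i]
    return s0 + (s1 - s0) * (temp_c - t0) / (t1 - t0)


for temp in (45, 60, 65, 75, 95):
    print(temp, fan_speed_percent(temp))
```

A steeper curve trades noise for lower temperatures, which is the trade the overclocked results later on are making.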

EVGA adds everything you need to overclock at the front of the program.

Here you can adjust the power target, temp target, GPU clock offset and mem clock offset sliders.

These 10 buttons will save you so much time. Every time you make a change, and just before you start testing your overclock, you will want to save it in one of these 10 profiles. Sometimes when you are testing an overclock your PC will freeze or reboot, so when you get back into Windows, this gives you an opportunity to bring back your previous overclock and make adjustments from there.

OK, so let’s get back into the benchmarks.

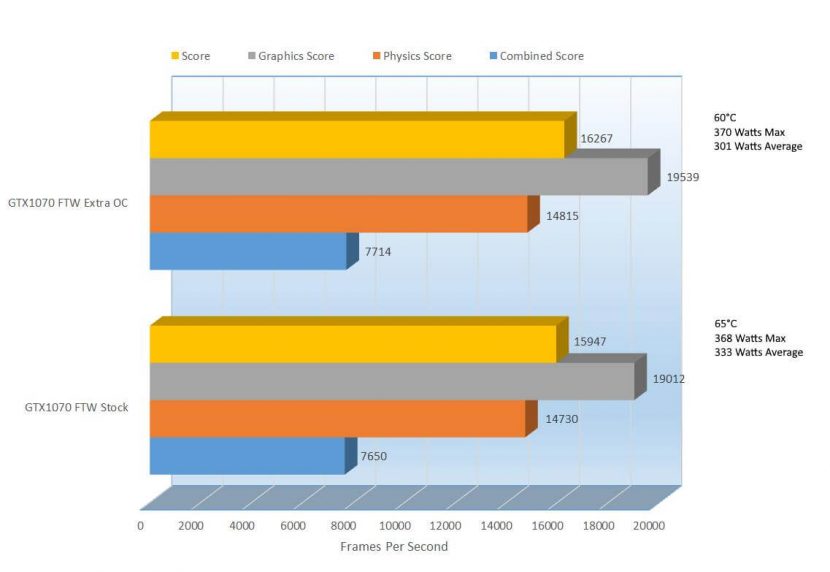

Starting from the first screen showing the results, you can already see that on stock we scored 15,947 and now we are scoring 16,267. While it seems like a huge leap, it is only a 1.99% increase. Still, with that little increase our result went from better than 92% of all other results to better than 94%, a welcome improvement.

Now 3DMark is not just a single score; there are various parts that make up this score: the Graphics score, the Physics score and the Combined score. Usually when overclocking you might see one or 2 of these drop, but you can see that the overclock improved the performance in all aspects. The temperature dropped as well, which of course did make the sound go up a tiny bit, but as gamers we have our headphones on, so we can’t hear that anyway. The overclock only brought our max wattage up 2 Watts and actually dropped our average wattage, surely an improvement because of the improved cooling.

OK, let’s get to some gaming benchmarks with Metro Last Light.

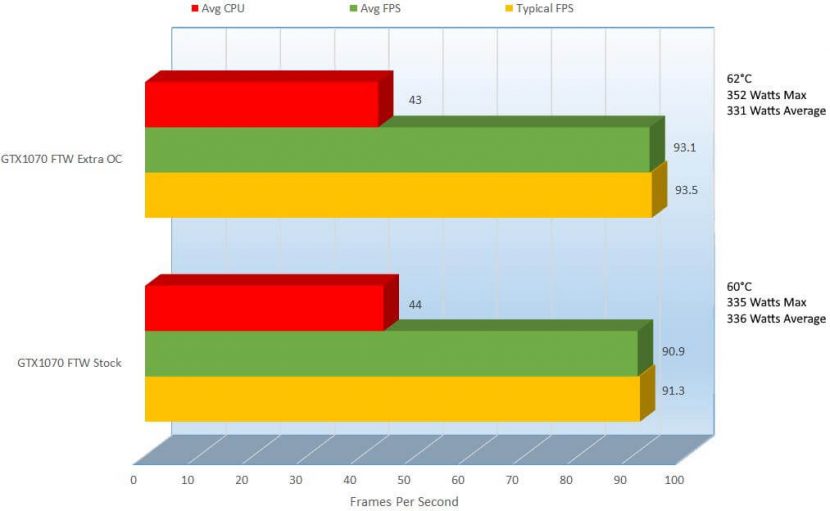

With the overclocks, we get a little more granular in the results.

1280×1024

At first, when I was compiling the results, I noticed that the max frame rate was actually about 10 points lower, so I thought the rest would be lower as well. Looking at the average FPS, I see that we got a 2.99% increase in performance, and the cooling improved as well because of the fan curve, a 4.72% improvement in cooling. Power consumption did go up a bit, from 335 Watts to 344 Watts, a 2.65% increase.

1920×1080

At 1920 x 1080, we can see the improvement again was very slight, from 75.64FPS to 77.35FPS, an improvement of 2.24%. The cooling also improved, from 67°C to 63°C, and with those 2 improvements we again got a little higher power consumption, from 330 Watts to 340 Watts, a 2.99% increase in wattage.

2560×1440

At 2560 x 1440, the performance improvement was slightly more impressive, bringing us ever so close to that magic number. On the stock clocks we received 45.26FPS; on the overclock we received 50.07FPS, a 10.09% increase. The temps improved from 67°C to 64°C, but the wattage again increased, from 335 to 344, a 2.65% rise in power consumption.

Now let’s see if Thief can steal the show, even though the stock speeds in Thief were already impressive.

1280×1024

I want to point out that the GPU temperature shot up to 61°C, of course because I overvolted it, but it is so nice to see that this card can truly perform at 0dB during normal usage, and even during some gameplay at stock. The overclock really did not help here at all; actually, it might have made things a little worse. While it did improve the max frame rate by 1.63%, the average frame rate dropped by 0.3FPS, a 0.24% decrease. That increase or decrease would do nothing in practice; it is just interesting. Along with that decrease there was, as I mentioned, an increase in temperature, but also in average power consumption, from 309 Watts to 316 Watts, a 2.24% increase in average power usage even though the average FPS slightly decreased.

1920×1080

Here we can see that there was a slight performance increase… of 1.23%, 113FPS versus 114.4FPS. With that increase came a 2°C increase in GPU temperature but a 4 Watt decrease in system power consumption. Let’s see what 2560 x 1440 has to say about this.

2560×1440

Another performance improvement here, from 81.4FPS to 83.4FPS; while a 2.43% increase is small, I will take it. The temps shot up from the stock 0dB 37°C to a louder yet still totally tolerable 63°C, and power consumption went up 0.54%, from 368 Watts to 370 Watts, barely noticeable.

This game seems to be perfectly optimized for the card and driver set and works perfectly fine no matter what eye candy or resolution you have it set to. Let’s see what Lara has to say about this.

1280×1024

At 1280×1024 not only are the FPS obscene, they get better. At the stock settings we can see 288.5FPS, but with a slight overclock we get a 2.26% improvement to 295.1FPS. Since we are working with an enhanced fan curve, we get a 2°C temperature improvement. With that overclock though, since we are overvolting, we can see a 1.80% increase in power consumption, from 330 Watts on stock to 336 Watts on average with the overclock settings.

1920×1080

We can see that yet again this tiny bump has increased performance in Tomb Raider, from 288.5FPS to 295.1FPS, a 2.26% increase in performance. There was only a 1°C improvement in temperatures, but there was an ever so slight increase in the average power consumption, with 1.20% more power being used. OK, what can we expect from 2560×1440?

2560×1440

Here we can see that there was a slight performance increase of 1.07%, from 129.7FPS to 131.1FPS. With that performance increase there was only 1 additional Watt consumed, and thankfully with the fan curve there was a 2°C improvement in cooling, bringing the temperature down from 65°C to 63°C.

There were some pretty decent improvements in both FPS and cooling here, so let’s see what Ashes of the Singularity can do here.

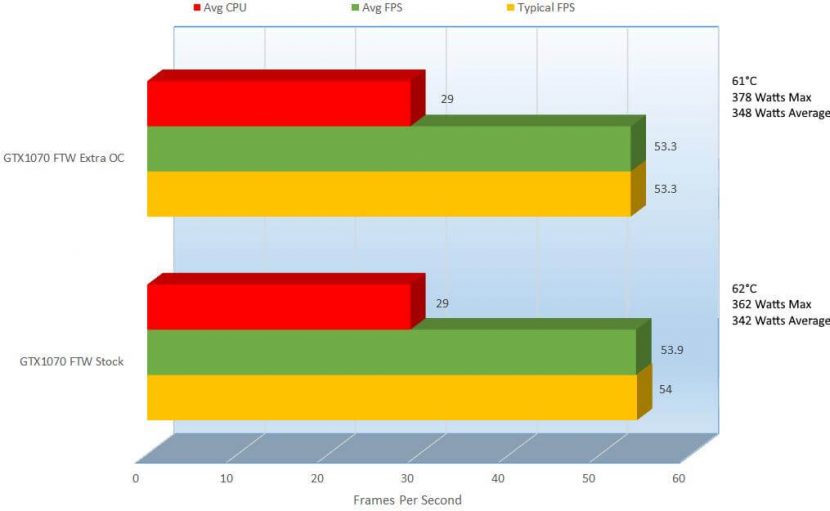

1280×1024

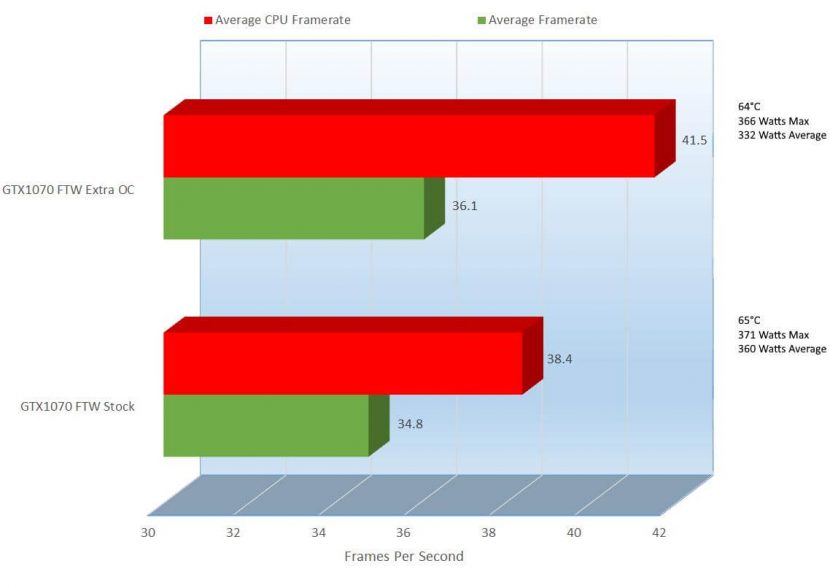

At 1280×1024, it looks like the performance was almost exactly the same; I took screenshots and all after each benchmark and double checked them to check my sanity. What you see here is correct; the performance on 1280×1024 actually took a slight performance hit, though the highest temperature was only 64°C so I know it was not a thermal issue. I hope the performance difference in 1920×1080 is a bit better.

1920×1080

Ok, this is a little better. We can see that the average FPS on the overclock is 36.1FPS while the stock speed is 34.8FPS, a 3.68% improvement. Aside from the improvement there, it looks like the average wattage went down from a 360 Watt average at stock speed to 332 Watts on the overclock, 8.09% less power consumption and 1°C cooler too.

2560×1440

At this resolution we can see that there was an improvement, but at 0.6FPS, you would never notice. As you saw in the gameplay video, the CPU frame rate helps tremendously. Let’s come back down to earth and check out The Division.

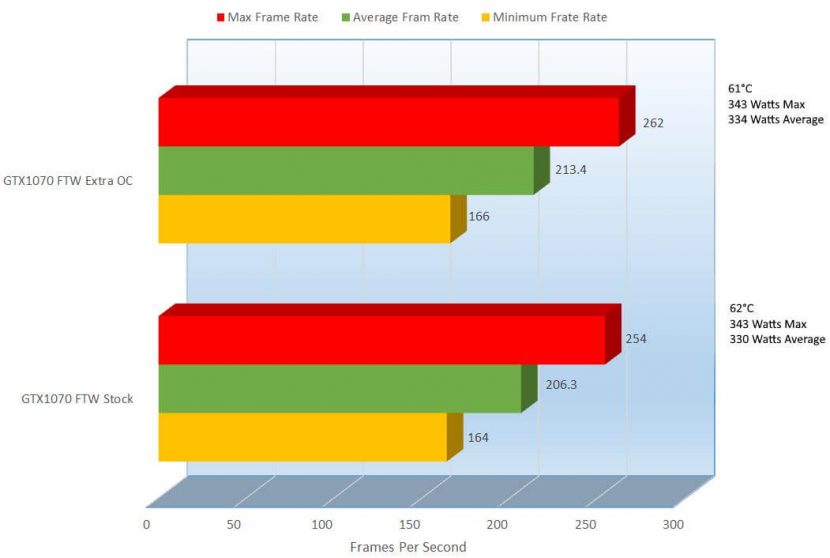

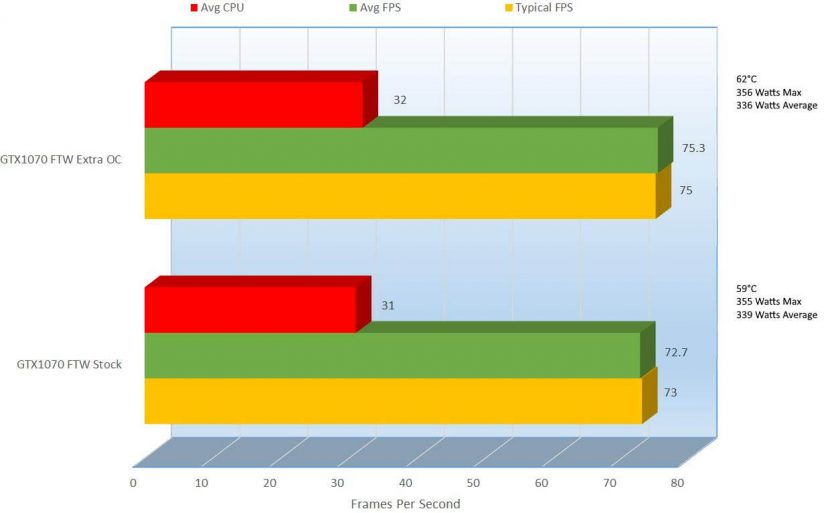

1280×1024

At 1280×1024 we can see a slight performance improvement; we are getting 2.39% better performance with this overclock. The temperature did increase 2°C though the average wattage did decrease by 1.50%. Not a great improvement, but at over 90 frames per second, it’s probably not noticeable.

1920×1080

A 3.51% increase in performance at the most common resolution is definitely a welcome improvement but still a very small benefit. With the increase in performance, the temperature did increase by 3°C (4.96%), though the average power consumption dropped.

2560×1440

In an odd switch, the performance actually dropped by 1.12%, from 53.9FPS to 53.3FPS. The temperature did drop by 1°C and the power draw was raised by 1.74%.

Looks like I needed to be a little more aggressive in my overclock, but I did it mainly to show you that even though it is an overclocked card, you could push it more.

Tom Clancy’s The Division is a new game for me, and I am very pleased that I can play it perfectly well at 2560×1440. Maybe in the very near future a graphics driver update will give me that much more oomph to get it well above 60FPS.

I have given you a little info on how to overclock using EVGA’s PrecisionX, but it can do so much more than just overclock. Let’s take a look in this next chapter.

[nextpage title=”EVGA PrecisionX Overclocking and Card Utility”]

EVGA’s Precision has been around for some time and has gone through many makeovers, with tons of extra features added along the way.

As I have shown you, this is what PrecisionX looks like. So let’s dive into it, let’s start from the top left hand corner.

First off, HW Monitor, a sweet little utility built into PrecisionX that provides GPU and system temperatures, fan speeds, clock speeds, etc… lots of info.

Next up is the OSD Setting. OSD stands for On Screen Display, a set of text that appears on the screen when you are gaming, video/photo editing and doing other types of video duties. Here are the settings when you click “OSD Settings”.

For example, while using Paint.NET to edit images for this review, we can see the OSD. It provides good statistics on the card itself.

Next is “OSD”, again for On Screen Display, this will simply toggle on or off the OSD.

Start Up, like OSD, is a toggle; it controls whether or not PrecisionX starts up with Windows. This is just a shortcut to this setting; it is in the settings of the program as well.

ShadowPlay is a cool NVIDIA utility; this is a shortcut to it. I will not go over it, but it can help you check for drivers, record gameplay videos, optimize games for optimal performance and so much more.

Then we have the big K, KBoost.

This automatically overclocks your card to full boost speed at all times.

My overclock is only slightly better than KBoost, but KBoost is an effortless overclock at all times.

Default, I think, does not need a lot of explanation. If you overclock too much and want to revert back to stock, just click this.

Coming to the very bottom, we find the version of PrecisionX, 6.0.6, and the Profiles. Here we have 10 profiles that we can save custom settings into.

If you hover your mouse over any of the profiles, it will pop up this information window, but only long enough for you to read the first sentence then disappears almost mockingly. I took a screenshot to make it easier for you to read.

Pretty easy right?

Clicking on the cog at the end of the profile pops up the “Fan Curve” window and the settings tab.

As I showed you before, you can adjust the current fan speed by adjusting the Fan Curve, which lives on the Fan tab. On the General tab, we have general settings, including the Start with OS option I mentioned previously.

In case you were wondering how loud those fans are, check this out

Pixel Clock allows you to overclock your monitor. Be careful with this; you could kill your monitor.

A very cool feature that was introduced with these cards is the LED lighting. Clicking on the “EVGA LOGO LED” drop down shows you these options.

But rather than describe the options, I will show them to you.

Here you can see the card a bit inside of my system with the LED lighting on, aside from the video of course.

And yes, even the bottom of the card lights up

This is what I was mentioning early on in the review about the gimmicky lights, the adjustable RGB LED. Gimmicky as it is, I do like it. OK, back to PrecisionX.

Here we can save all of the profiles with hotkey assignments and all.

Interface allows us to change the interface of PrecisionX itself.

Framerate Target lets us set the framerate we would like the program in focus to have. You might use this for older games that would just blow up and end the minute you start them because you have so much horsepower behind the wheel now that you just didn’t have back in 1999.

Depending on what you set this to, your card could work extremely hard or breeze through games. This can of course cause your card to run hotter or cooler and take up more or less power.
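To make the idea concrete, here is a minimal sketch of how a frame rate cap can work in principle: after each frame is drawn, the program idles for whatever is left of that frame’s time budget. This is not taken from PrecisionX, and the review does not say how Framerate Target is implemented; the function names and the 60FPS figure are assumptions for illustration only.

```python
import time


def sleep_needed(target_fps, render_time_s):
    """Seconds to idle so that one frame lasts 1/target_fps in total."""
    frame_budget_s = 1.0 / target_fps
    # If the frame already took longer than the budget, do not wait at all.
    return max(0.0, frame_budget_s - render_time_s)


def run_capped(render_frame, target_fps, frames):
    """Call render_frame() `frames` times, never faster than target_fps."""
    for _ in range(frames):
        start = time.perf_counter()
        render_frame()
        elapsed = time.perf_counter() - start
        time.sleep(sleep_needed(target_fps, elapsed))


# A frame that renders in 10 ms under a 60FPS cap leaves roughly 6.7 ms idle.
print(round(sleep_needed(60, 0.010) * 1000, 1))  # 6.7
```

The idle time is where the savings come from: a card that could render far more frames than the target spends part of every frame doing nothing, which is why a low target can mean lower temperatures and power draw.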

Ok, that’s it with the Settings, let’s keep circling around PrecisionX

Curve brings back the Fan Curve and all of its settings

Closing that again, we can also Disable or Enable the Automatic Fan control

Then there is a little slider here where we can adjust the fan speed; the default is right in the middle.

Raising that bar to 100% and then clicking Apply throws the fan into overdrive and it gets real loud, but of course that is to be expected when you raise the fans to 100%. That is rated at 2709RPM, though I saw it hit 2720RPM.

When you are done making any changes, make sure to click Apply.
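If you have never worked with a fan curve before, it is really just a handful of temperature and fan speed points with straight lines drawn between them. Here is a minimal sketch of that idea. The points are hypothetical and are not the card’s actual defaults; the only part that comes from this review is that the fans stay still below 60°C.

```python
# Hypothetical curve points: (GPU temperature in °C, fan speed in %).
# The flat 0% section up to 60°C mimics the 0dB behavior seen in this review.
CURVE = [(0, 0), (60, 0), (70, 40), (80, 70), (90, 100)]


def fan_speed(temp_c, curve=CURVE):
    """Linearly interpolate the fan speed (%) for a given temperature."""
    if temp_c <= curve[0][0]:
        return float(curve[0][1])
    # Walk each pair of neighboring points until the temperature fits.
    for (t0, s0), (t1, s1) in zip(curve, curve[1:]):
        if temp_c <= t1:
            return s0 + (temp_c - t0) * (s1 - s0) / (t1 - t0)
    # Hotter than the last point: hold the final speed.
    return float(curve[-1][1])


print(fan_speed(50))  # 0.0
print(fan_speed(65))  # 20.0
print(fan_speed(95))  # 100.0
```

Dragging a point in the Fan Curve window amounts to changing one of those pairs, which shifts how early or how hard the fans ramp up.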

The middle is where you would make your changes if you are overclocking. The Power Target controls the power provided to the GPU, and the Temp Target controls the temperature at which the fans try to keep the GPU. The GPU Clock Offset overclocks the GPU; you can go up in 1MHz increments, though with the slider it is a bit hard. Mem Clock Offset overclocks the video card’s GDDR5 memory, also in 1MHz increments using the slider.

EVGA thought ahead and actually lets you also overclock by clicking where the number of MHz is and typing a number. The same applies to all of the targets and offsets.

Using the chain I have highlighted on the left, we can link the sliders between Power Target and Temp Target, so as we slide Power Target, the Temp Target would go up as well.

By clicking on the chain, you can link or unlink the two. Adjusting these targets will allow you to stabilize overclocks by providing more power or allowing lower or higher temps on the GPU. The Temp Target gives your overclock a level of protection by setting the temperature at which the GPU will throttle, or downclock, itself to protect against thermal damage.

Adjusting the priority allows you to tell the card which is more important to focus on, Power or Temp Target.

The voltage meter allows you to adjust how much voltage the card receives during an overclock. Be very careful with this; too much voltage will ruin your card. Like the Power and Temp Targets, adjusting the voltage will help stabilize overclocks. During my overclocks, I never touched this bar.

At the top, the blue bar tells you where your GPU’s clock is at the moment in MHz, the base clock. If you look at the bar itself, a little after the blue, you will see a red triangle. The red triangle tells you where the boost clock will reach when it is required.

Toward the bottom, on either side of the “GEFORCE GTX 1070 FTW” branding, you will see a left and a right yellow arrow.

Pressing the right arrow will switch you to the Voltage points. By clicking the arrow on the Basic button, you will get Manual, Linear and Basic settings.

Selecting manual and clicking in the box to create a little green box will create a voltage/frequency offset limit. Click Run to test them. Let’s click the yellow right arrow again.

This brings up the HW monitoring… in a tiny box. Not sure why you would want to see it so small, but you can if you want to. These are the only 3 options you get by clicking the arrow.

In the top right hand corner, we can see the GPUs in the system; I only have one. If I had more, I could select a particular GPU to apply an overclock to and change any other settings too. If the chain is linked, it will synchronize all of the cards included in the system.

With all of this GPU and temperature talk, we have seen a little of how hot this GPU can get, but only in numbers. Let’s check actual thermal readings using the Seek Compact Thermal Sensor; a review of this sensor will come later as well.

The first picture shows you the temperature of the GPU while looping 3DMark for about 10 minutes. The hottest points on the card are in white at 56°C, as you can tell from the legend on the left. The coolest portion in this picture is not actually on the GPU; it’s the liquid cooling on the Freezer 240MM. The card itself is in the 30’s or so, shown in green.

This is the picture of the bottom of the card during the benchmarks. Here the side of the fan closest to the motherboard is the coolest at 13°C.

After letting the card cool down for about 10 minutes, we can see the hottest point on the card was 47°C. The hottest part is the EVGA logo, which exposes the PCB and the vents of the card.

Since the card is under 60°C, we can see under the card, the fans are still, and the coolest portion is that same spot on the card at around 21°C.

We can see here that the card does not get very hot, so the fans don’t need to do too much work. This was also evident in the benchmarking in the earlier chapters of this review. This is a good thing, because the way the vents are made means the air is vented into the case. There are vents on the back of the card, as seen here.

The problem is that not much air comes out of there, not only because of the vents on the side but because at the beginning of the card, where it meets the outside of the case, there is an opening.

In that opening, you can see the rear of the card, so there is no buildup of pressure to exhaust the air.

OK, so let’s put everything together and bring this review to a close. The next chapter “Final Thoughts and Conclusions” brings it all together.

[nextpage title=”Final Thoughts and Conclusions”]

So let’s see if our opinions are similar on this card.

Pros

- Tons of ports to fit almost any monitor

- Great performance

- Supports 3 x 4K displays

- Supports 4 monitors Simultaneously

- G-Sync Support

- Supports DX12

- 0dB Fan Operation

- Aluminum Backplate (For Better Cooling)

- Adjustable RGB LED

- RGB LED’s seem a little gimmicky, not a bad thing though

- Dual BIOS support

- VR Ready

- Supports 7680×4320 8K Super UHD

- Supports GameStream

- Includes 8 Pin Adapters

Cons

- Does not include any display adapters

- Could be important for people that have 2 x DVI Monitors, 3 x HDMI Monitors, 3 x DP monitors, etc…

While the con is a biggy, I can’t say anything bad about this card aside from the price, but considering its performance is a notch above the rest, it can’t be beat. Its next competitor up is the 1080, which can be double the price, so the price of the 1070 is decent in comparison.

EVGA did a great job with this card. It is extremely quiet, has beautiful (yet gimmicky) RGB LED’s, and looks industrial yet elegant, all with amazing performance. This card is a keeper. With all of this, I am forced yet pleased to give this card our Editor’s Choice award.


I have spent many years in the PC boutique name space as Product Development Engineer for Alienware and later Dell through Alienware’s acquisition and finally Velocity Micro. During these years I spent my time developing new configurations, products and technologies with companies such as AMD, Asus, Intel, Microsoft, NVIDIA and more. The Arts, Gaming, New & Old technologies drive my interests and passion. Now as my day job, I am an IT Manager but doing reviews on my time and my dime.