We are influencers and brand affiliates. This post contains affiliate links, most of which go to Amazon and are Geo-Affiliate links to the nearest Amazon store.

Hello my gamer friends out there, I am here to bring you another video card review. Today’s flavor is the EVGA GeForce GTX 970 Superclocked ACX2.0. This card already has the makings, and the folklore behind it, that make many gamers want it. Let’s find out why, and whether that lore is true.

EVGA is NVIDIA’s top 3D card partner, bringing you all of NVIDIA’s latest and greatest and improving on it in great ways. Latest and greatest is not all that wins them this top-tier partner status; it also relies very heavily on customer service and, if you don’t know, you will now: EVGA has amazing customer service.

Enough on that. I could go on about my amazing personal experiences with them, but we are here to talk about the EVGA GeForce GTX 970 Superclocked ACX2.0, more specifically part # 04G-P4-2974-KR.

Check out the specs on this beast

Specs and Features

- 1165MHz Core Speed

- 1317MHz Boost Speed

- 1664 CUDA Cores

- NVIDIA® PhysX

- 4096MB GDDR5 RAM

- 256-bit Memory Interface

- 7010MHz Effective Memory Frequency

- 2-Way and 3-Way SLI Ready

- NVIDIA Dynamic Super Resolution Technology (4K)

- Up to 4096 x 2160 (Digital) Resolution

- Up to 2048 x 1536 (Analog) Resolution

- 240Hz Max Refresh Rate

- EVGA ACX 2.0 Cooling

- NVIDIA® GPU Boost 2.0

- NVIDIA® 3D Vision

- NVIDIA® G-SYNC Ready

- NVIDIA® Adaptive Vertical Sync

- NVIDIA® Surround

- Supports up to 4 concurrent displays

- Two dual-link DVI

- HDMI® 2.0

- DisplayPort 1.2

- DirectX® 12

- PCI-Express 3.0 Support

- OpenGL 4.4 Support

- OpenCL Support

Now check out the unboxing

Most manufacturers of cards with 2 PCI-E power connections only give you one adapter; EVGA provides 2. Some power supplies only have enough connectors for 1 or 2 cards, and since this series of card supports 3-Way SLI, they are just making sure you have what you need to get up and running.

And the usual DVI to VGA adapter: if for some reason your monitor does not have DVI, only VGA, you can plug this into your card and connect the VGA cable from your monitor to it.

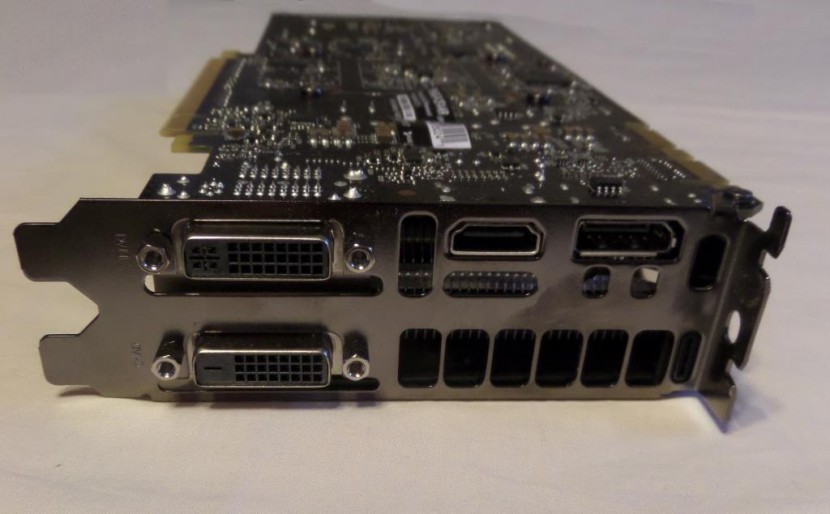

And here we can see the connections this card comes equipped with: 1 x HDMI, 1 x DisplayPort and 2 x DVI (DVI-I and DVI-D).

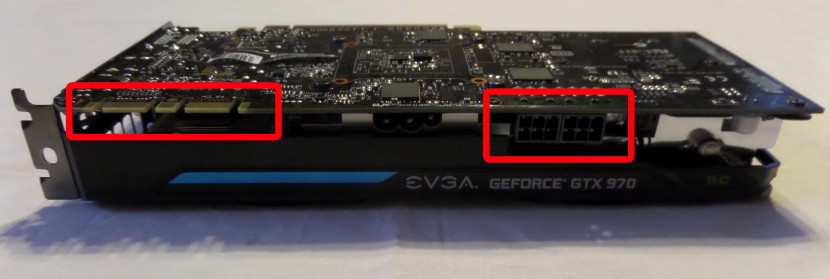

Here you can see on the left the 2 x 6-Pin PCI-E connectors that you will need to plug in to provide power to the card, and on the opposite side the SLI finger that you will need to connect to another card in 2-Way or 3-Way SLI. I only have one card right now, so I will leave it with the rubber cover it comes with.

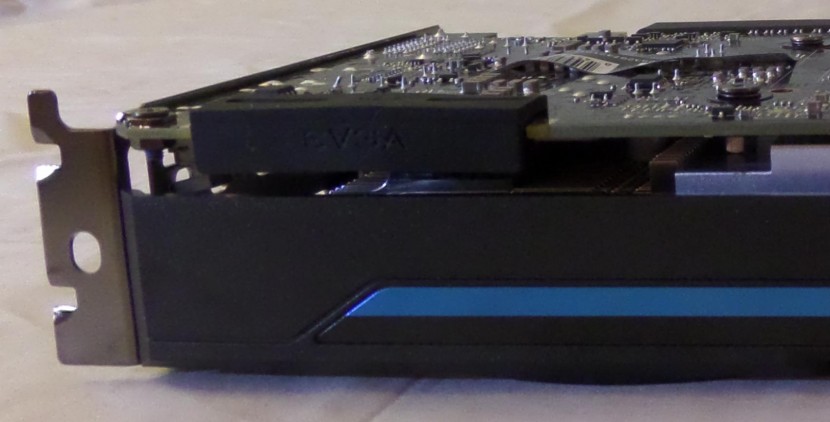

Here is what the card looks like with the rubber cover on it.

With this card, EVGA provides a 3 year warranty, which seems to be the standard, although some other guys give you 2 years, or even only 1 year. Again, I can vouch personally for how good EVGA’s warranty is.

For all of you that may not know how to install a video card, or may be a little scared to, check out this video, it might help you.

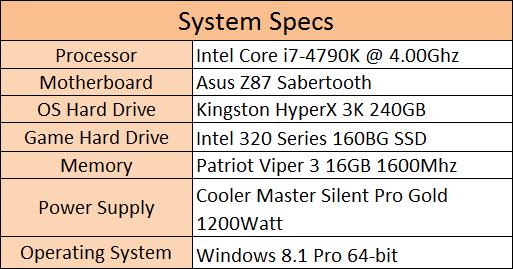

Now that the card is installed, let’s check out how she runs and we will do a little comparison as well to show you what the differences are.

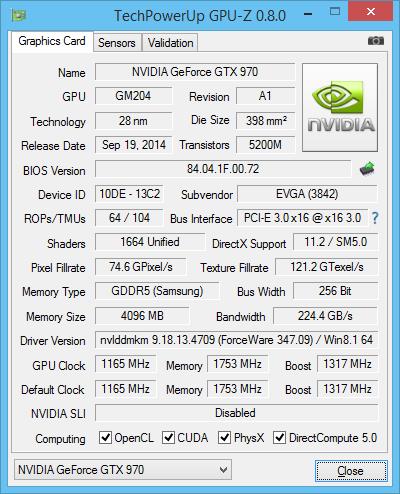

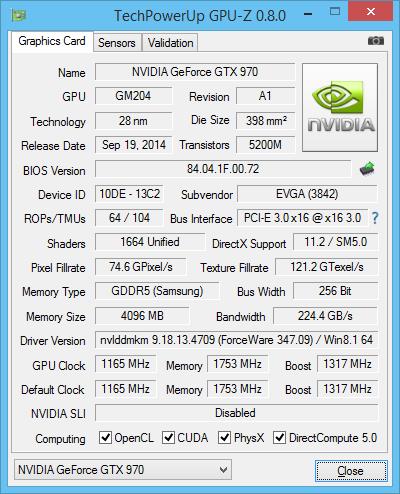

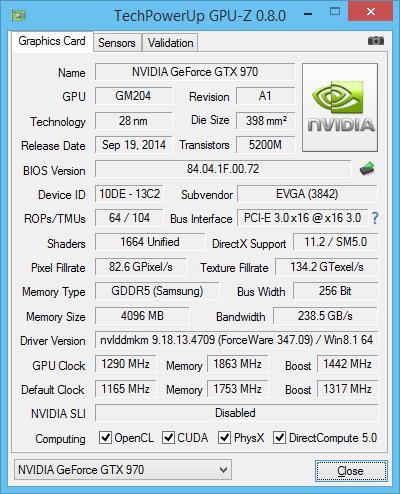

And here are the GPU-Z readouts of the card

The card has some great specs and is incredibly new. The drivers being used in these tests are the WHQL-certified 347.09 driver set.

The benchmarks being used in this review are 3DMark FireStrike, Metro Last Light, Tomb Raider and Thief.

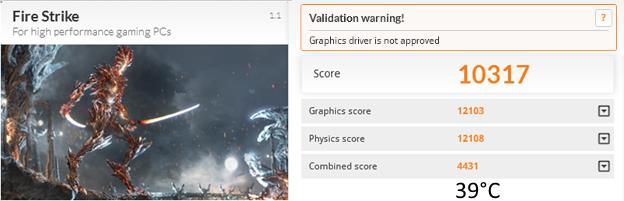

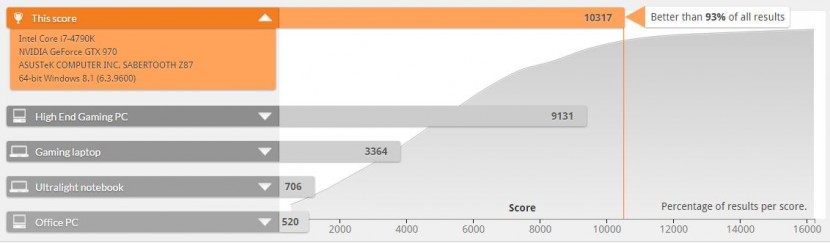

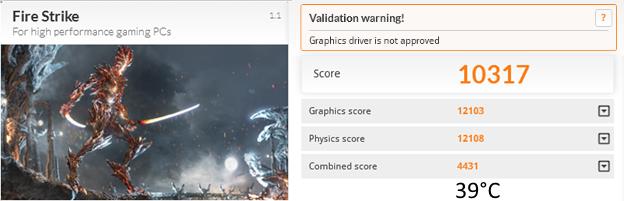

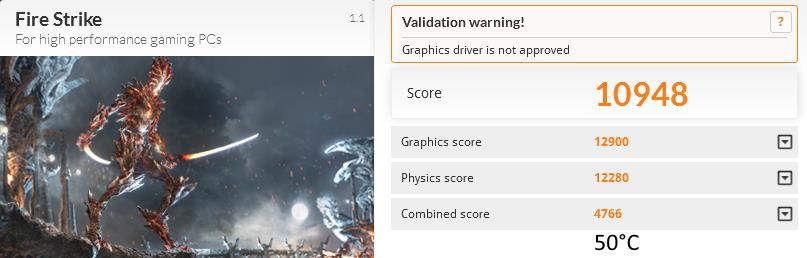

3DMark’s FireStrike Extreme tests heavy tessellation and volumetric illumination, plus complex smoke simulation that takes advantage of compute shaders. It is not the hardest test for this bad boy, but it is the staple of benchmark suites.

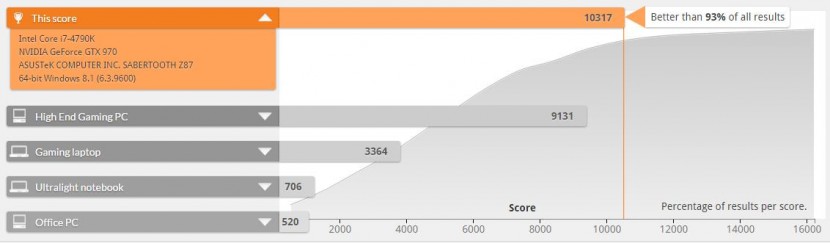

It comes in showing a very strong 10317 and, digging a bit deeper into the score, we see below that it is better than 93% of all other results validated on Futuremark’s database. That’s kinda nice.

During this test, the system used a peak of 320 Watts but averaged about 212 Watts, and the card reached as high as 39°C. Some might think the temperature being so low would mean that the card is very loud, but this is not the case.

This card, as well as many other EVGA cards, employs a great technology named ACX2.0. ACX2.0 allows the fans to stay off, saving power, and once the card reaches a certain temperature the fans start to spin up. To make sure the fans last as long as possible over the lifespan of the card, they use double ball bearings, and to provide better cooling they have a swept fan blade design (11 blades), which improves cooling performance and efficiency and decreases the noise the air creates.

EVGA claims this provides a 26% cooler GPU, fans that are 36% quieter and 250% lower fan power compared to NVIDIA’s reference design, since the fans are not spinning when they are not needed. This also explains their claim of a 400% longer lifespan.

This card ran at an idle wattage floating around 125 Watts and was near silent.

On top of EVGA’s ACX2.0, the 970 brings us NVIDIA’s latest and greatest Maxwell microarchitecture, the direct successor to their earlier Kepler series. Maxwell improved power efficiency, improved CUDA performance even with fewer CUDA cores, improved the video encoder and brought in DSR technology, which renders games at up to 4K and scales them down to improve your gaming experience even on lower resolution displays.

Back to 3DMark. Initially when I ran 3DMark I came across an issue. When running SkyDiver, after the woman parachutes down and enters the cave, she turns on her night vision and bam, my screen would go black, yet my PC seemed to be running fine. I ran through a few tests, looking through logs and uninstalling/reinstalling drivers and software, but the issue remained no matter what I tried.

I contacted EVGA and they were not sure what to make of the issue, but they helped me try a few troubleshooting steps, none of which helped. I also contacted Futuremark and they mentioned that this could be an issue on their side with the benchmark, as their forums revealed (as did EVGA’s). I was impressed that Futuremark owned this issue and mentioned that they were looking to come out with an update to correct it, though they had no timeline to provide me.

Turns out working on a review for the Kingston HyperX 3K helped me resolve the issue. As a reviewer and a tinkerer by nature, I have installed tons of hardware and software on my previous build, so the OS was dirty. On the new Kingston drive I had a brand new install of Windows, drivers and software, and testing on the new install corrected the issue, which is why I am able to bring you this review without any “While it worked here, it did not work here” captions. The issue was 100% on my side, but I mention it in case you run across the same thing: it may be time for a clean install of your OS, and my review of the Kingston HyperX 3K might help you out with this.

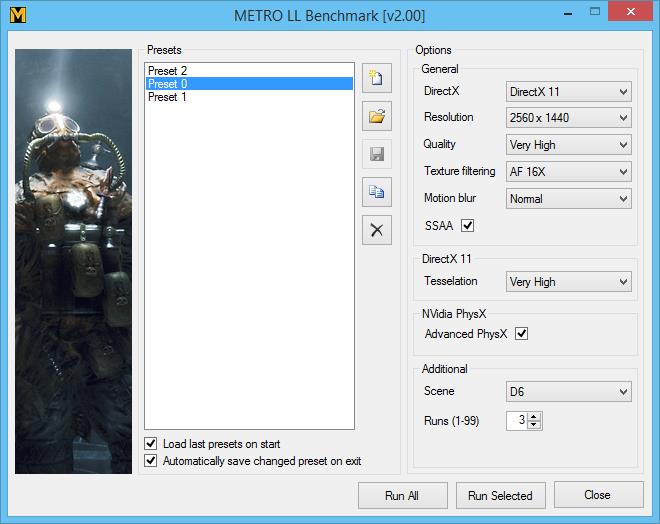

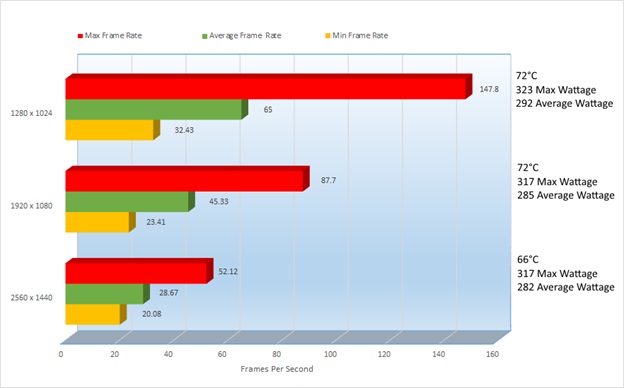

Next in my series of tests is Metro Last Light, one of the most taxing games I have the pleasure of testing. Please let me know if you know of another and I will see if I can add it to this list. This game is not only taxing on stock cards, but amazingly taxing on overclocks.

I have 3 presets that are all the same; the only difference is the resolution. Here you can see my presets. Also notice that each preset is set to run 3 times.

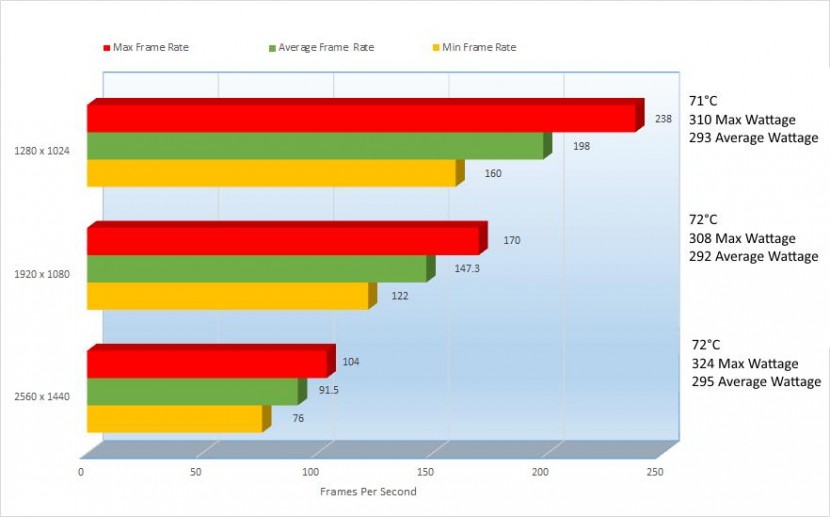

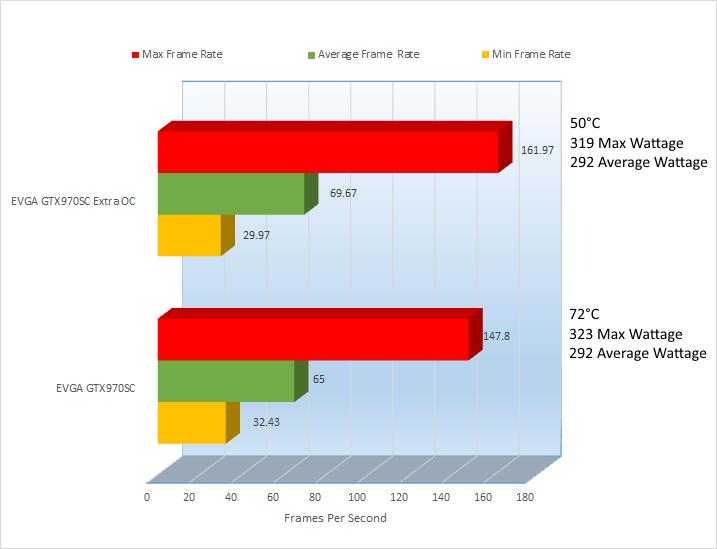

This game can run cards a bit hot based on how much it stresses them, but the hottest this card got was 72°C, which is not too bad, and the system reached 323 Watts at its highest. Oddly enough that was at a measly resolution of 1280×1024, but all peaks listed in this review were only hit once during testing, for 1 second; the average wattage is where the card hung around during testing.

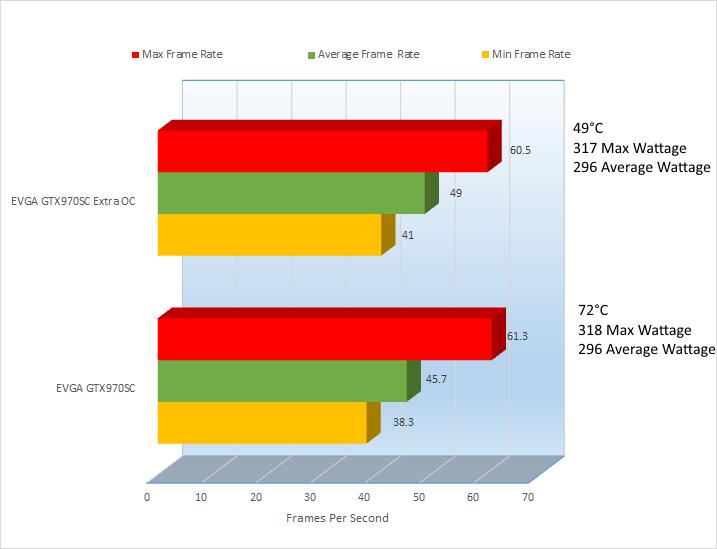

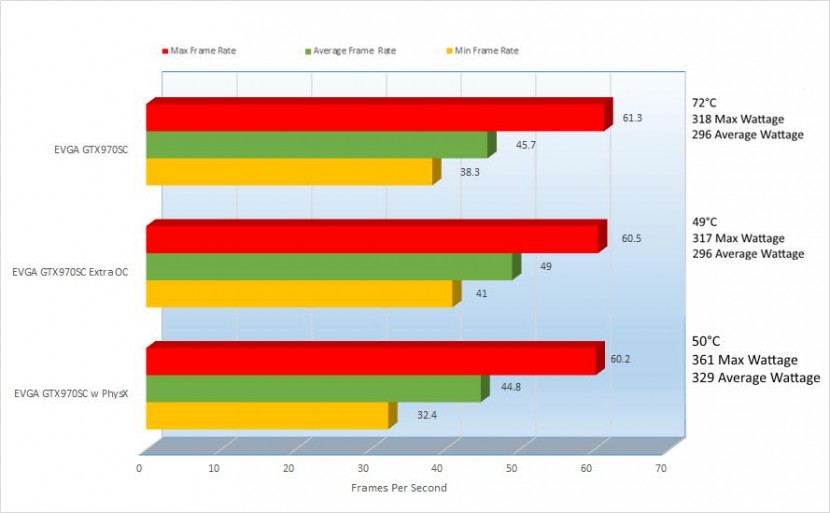

Don’t pay too much attention to the Max Frame Rate because, like the peak wattage, these are peaks, not sustained FPS. Sustained FPS would of course be the average FPS, and those are all pretty OK in this game. Minimum frame rate is important too because it shows you how low the card drops, and the minimums are all reasonable, not the greatest but not the worst.
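To make the difference between the three numbers concrete, here is a small Python sketch. The frame rate samples are invented for illustration (they are not from the Metro runs in this review) and `fps_summary` is a hypothetical helper name.

```python
def fps_summary(samples):
    """Return (minimum, average, maximum) FPS for a list of per-second samples."""
    if not samples:
        raise ValueError("need at least one sample")
    return min(samples), sum(samples) / len(samples), max(samples)


# Invented per-second FPS samples with one brief spike and one brief dip.
samples = [46, 45, 47, 95, 44, 22, 46, 45]
low, avg, high = fps_summary(samples)
print(f"min {low}, avg {avg:.1f}, max {high}")
```

A single one-second spike sets the Max and a single dip sets the Min, while the Average barely moves, which is why the average is the number closest to what you feel while playing.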

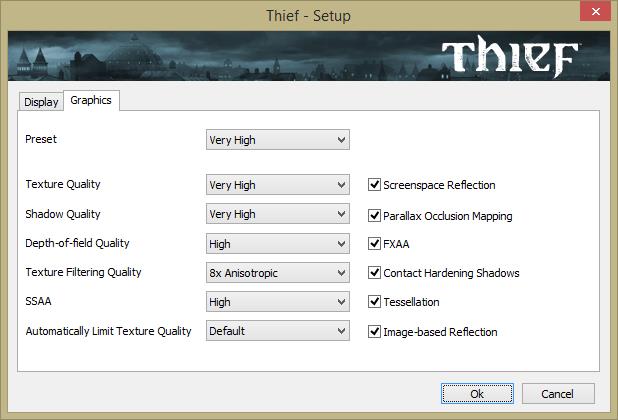

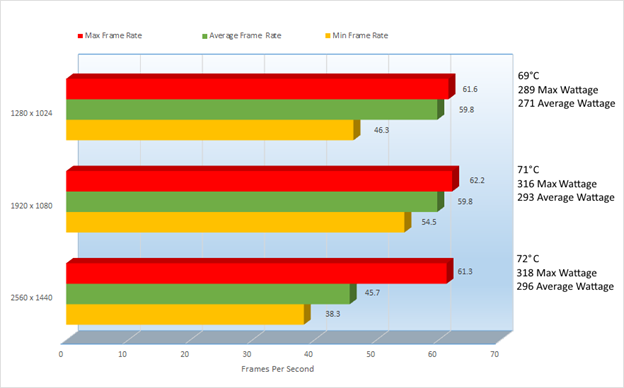

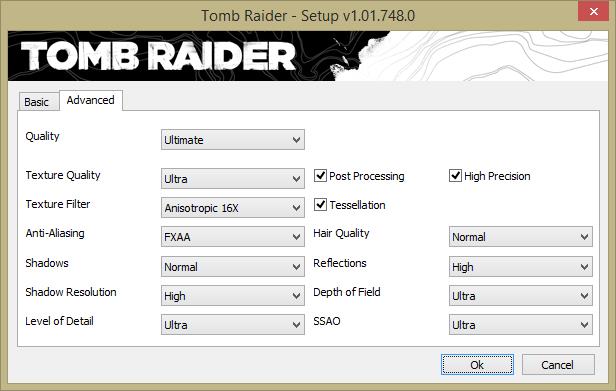

For Tomb Raider and Thief, they have relatively the same settings but I will list them so that you can see what they are, first is Thief.

These settings are much more playable even at the highest settings. The lowest frame rate at 2560 x 1440 was 38.3, not great but not bad, and the lowest average FPS was 45.7, which I would say is very acceptable. You can of course get a much better frame rate by dropping a little bit of the eye candy, but not too much.

Last but not least is Tomb Raider. You will notice, the settings are relatively the same, but I will have to apologize on previous benchmarks of Tomb Raider that I have corrected beginning from this review. Under “Hair Quality” you will notice it reading “Normal” but on previous tests on NVIDIA cards, I had it reading “TressFX”.

TressFX is a proprietary hair rendering technology developed by AMD (I thought all AMD stuff was open source, hmm) which actually does mess with NVIDIA performance if left on rather than set to “Normal”. In short, TressFX makes hair look nice, and we know how important beautiful free roaming hair is in a game… for the first 10 seconds of gameplay, then it is forgotten. A great technology for hairdressers and beauticians, but for us gamers, move along.

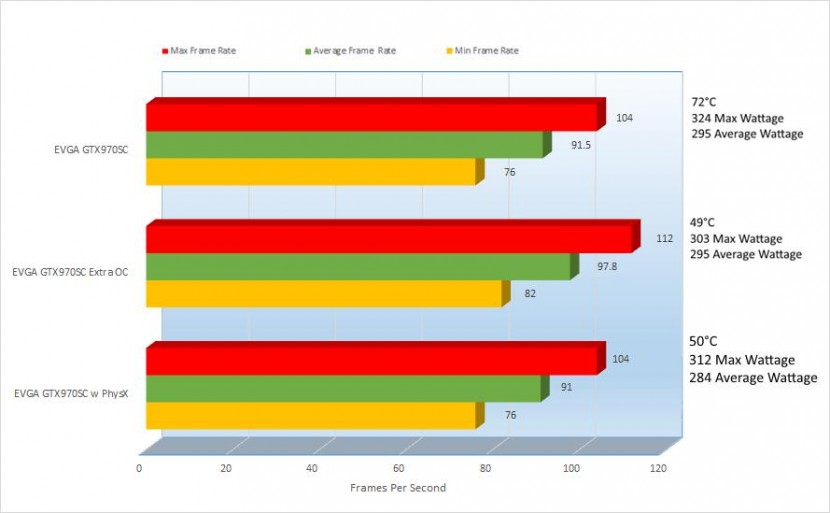

Tomb Raider knows no brand loyalty it seems and just runs great on NVIDIA and AMD. From Min to Max on 1280 x 1024 to 2560 x 1440 it is 100% playable. Looks like I may need to find myself another benchmark to put in these tests that might stress the cards a bit more. Mind you, it did pull some wattage, more than Metro but that seems to be the most stress the game provides at least on a card of this caliber.

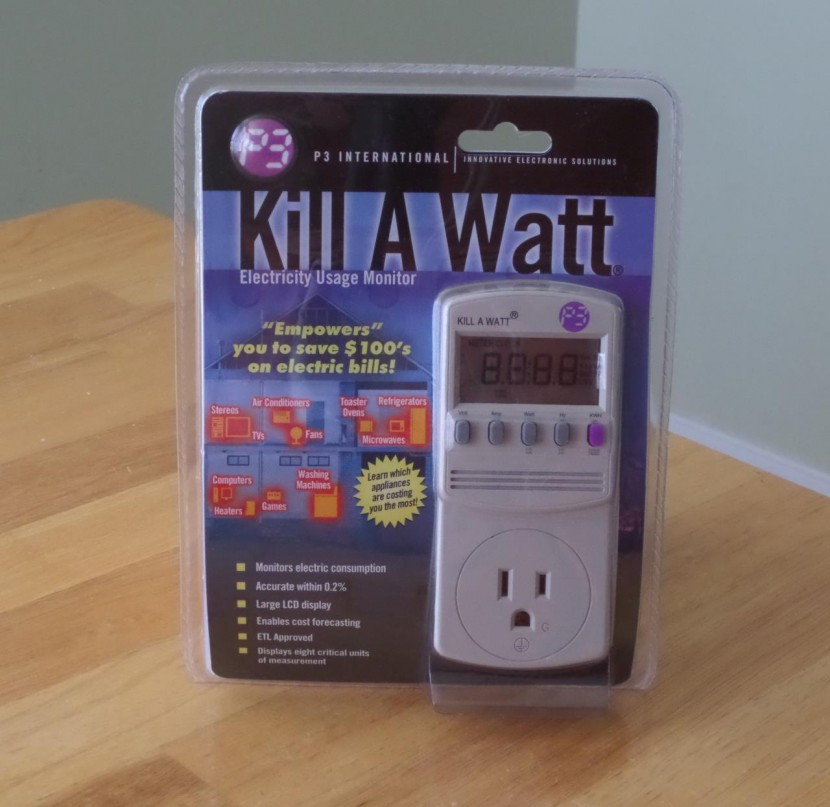

You will notice I have started including wattage measurements in my reviews, at the request of a reader and a poster; of course, I can’t read your minds. I started measuring the wattage using my new trusty little friend, the “Kill A Watt” by P3.

This shows you how much Wattage, Voltage, Amperage, Hz and KWH are being used on the fly. I am still learning how to use it, as at my prior places of employment I used a different, more expensive way to measure. The problem with the Kill A Watt is that you need to watch it while the tests are running to know the peak and average; there is no way to store the readings so that you can look at them afterwards, unless I just don’t know how to use that part. If you guys know, please let me know.
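In the meantime, one workaround is to jot down the display reading every few seconds and crunch the numbers afterwards. Here is a rough Python sketch of that idea. The readings in it are made-up placeholder values, not measurements from this review, and `summarize_watts` is a hypothetical helper name.

```python
from statistics import mean


def summarize_watts(readings):
    """Return (peak, average) for a list of wattage readings noted by hand."""
    if not readings:
        raise ValueError("need at least one reading")
    return max(readings), mean(readings)


# Placeholder values for illustration only, NOT measured data.
sample = [205, 210, 214, 320, 211, 209]
peak, avg = summarize_watts(sample)
print(f"peak: {peak} W, average: {avg:.1f} W")
```

The more often you note a reading, the less likely you are to miss a one-second peak.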

Also, another reader wanted to know how the cards performed in Battlefield 4 in real world gameplay, so I did that. I recorded a short video of me playing BF4 at Ultra in a resolution of 2560 x 1440. BF4 is a real killer with all of the eye candy on, but the proof is in the pudding, so check it out.

Not too bad at all right? Battlefield 4 is one of my favorite games and now I can play it at Ultra with Zero issues, it runs amazing.

So you have seen how fast it runs, how much power it pulls and just how capable it is but how well does it overclock? Let’s take a look.

As I showed you before, this is how GPU-Z reads

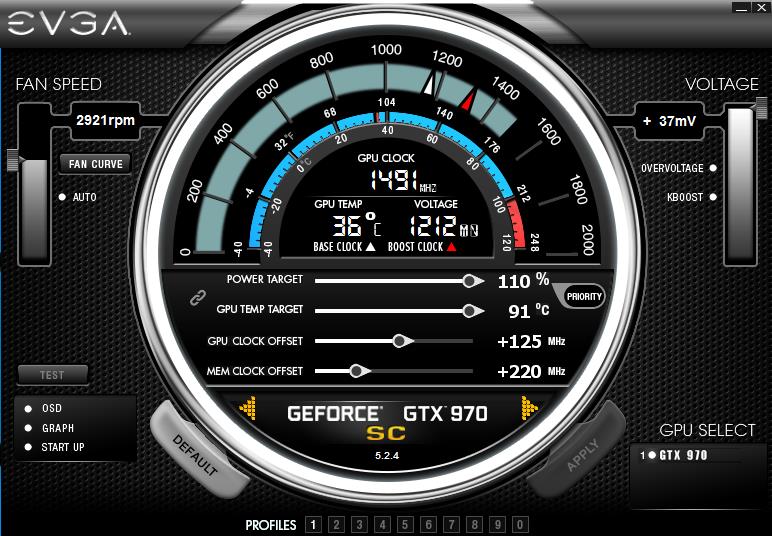

And with it PrecisionX reads

Pretty standard, but let’s overclock it a bit and see what they read now.

And PrecisionX

So I overclocked the GPU by 9.68%, the boost clock by 9.49% and the memory by 5.90%. Honestly I thought I had overclocked the memory more, but looking again, it was not too much. I didn’t want to go too crazy and kill my card, but I wanted to show you what you can do, and it seems that even though this is an SC card (already factory overclocked) it has much more headroom.

With the GPU and Memory offsets increased, I had to raise the Power Target to 110% and boost the Voltage by +37mV while enabling OVERVOLTAGE and KBOOST. KBOOST forces the card to operate at full boost speeds regardless of the load, which will generate more heat and use more power. OVERVOLTAGE enables the slider with which I raised the voltage by +37mV to stabilize overclocks. This can of course kill your card, so use it carefully. I raised it to the max so that I would not need to play with it while increasing the MHz on the GPU and Memory.

“This can of course kill your card; so use it carefully”

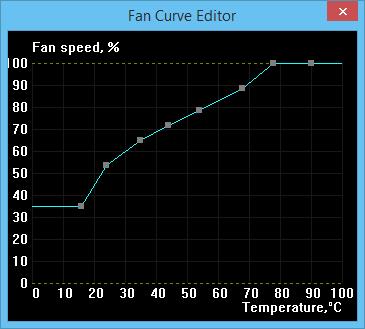

Even though I don’t endorse overclocking, I will say that when overclocking you should make sure you provide adequate cooling, to give your components a little more protection. For this, I clicked on the “Fan Curve” button and raised the fan curve about 70%. This will of course raise the noise the card creates, but I have my headphones on when I play, so it doesn’t matter to me.

You can of course be a little more timid on the cooling or more aggressive, it’s up to you but this is what I chose.
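If you have never seen one, a fan curve is just a set of temperature and fan speed points with straight lines drawn between them. Here is a minimal Python sketch of the idea. The points are hypothetical values picked for illustration, not the curve used in this review, and this is not how PrecisionX itself is implemented.

```python
def fan_speed(temp_c, curve):
    """Linearly interpolate fan speed (%) for a given temperature.

    `curve` is a list of (temperature_c, fan_percent) points sorted by
    temperature. Below the first point the first speed is used; above
    the last point the last speed is used.
    """
    if temp_c <= curve[0][0]:
        return float(curve[0][1])
    if temp_c >= curve[-1][0]:
        return float(curve[-1][1])
    for (t0, s0), (t1, s1) in zip(curve, curve[1:]):
        if t0 <= temp_c <= t1:
            return s0 + (s1 - s0) * (temp_c - t0) / (t1 - t0)
    raise ValueError("curve points must be sorted by temperature")


# Hypothetical curve: fans off up to 40°C, 70% at 60°C, 100% at 80°C.
curve = [(40, 0), (60, 70), (80, 100)]
for t in (30, 50, 60, 70, 85):
    print(f"{t}°C -> {fan_speed(t, curve):.0f}%")
```

A more aggressive curve simply moves the points up or to the left, trading noise for lower temperatures.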

Overclocking and raising the fan speeds, my idle wattage is now 140 from the original 123. Remember, with the KBOOST, I have the card running at full boost speeds so with the fans being raised and speed being raised this explains the extra power.

With that said, let’s jump into more benchmarking.

EVGA GTX970 SC

EVGA GTX970 SC Extra OC

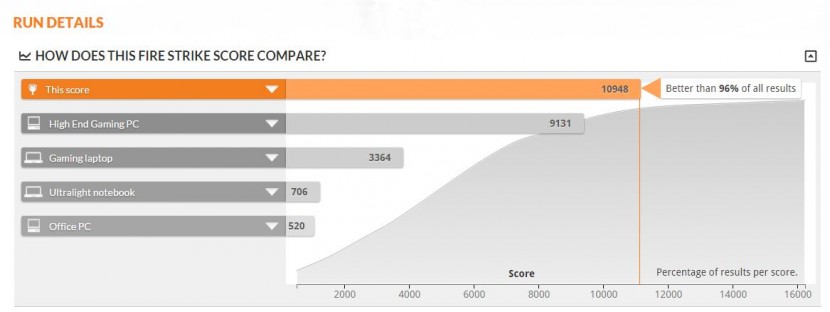

All across the board here, the score improved nicely. The original score of 10317 went up to 10948, a 6.12% improvement. The Graphics score went from 12103 to 12900, a more impressive 6.59% improvement. Physics went from 12108 to 12280, a 1.42% improvement. Finally, the Combined score went from 4431 to 4766, a 7.56% improvement. With the improvement, the heat went up from 39°C to 50°C, but that is to be expected… or is it?
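If you want to double check the math, here is a minimal Python sketch of how a percent improvement can be worked out from the four FireStrike score pairs quoted above. The function name `pct_change` is just a label for illustration. Keep in mind the answer depends on which number you divide by: dividing the gain by the stock score (the usual way to express an improvement, and what this sketch does) gives a slightly bigger figure than dividing by the overclocked score.

```python
def pct_change(before: float, after: float) -> float:
    """Percent change from `before` to `after`, relative to `before`."""
    return (after - before) / before * 100.0


# FireStrike scores quoted in this review: (stock SC, Extra OC)
scores = {
    "Overall": (10317, 10948),
    "Graphics": (12103, 12900),
    "Physics": (12108, 12280),
    "Combined": (4431, 4766),
}

for name, (stock, oc) in scores.items():
    print(f"{name}: {pct_change(stock, oc):.2f}%")
```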

EVGA GTX970 SC

EVGA GTX970 SC Extra OC

With the overclock, you can see that I rose from being better than 93% of other results to being better than 96%, on such a small bump.

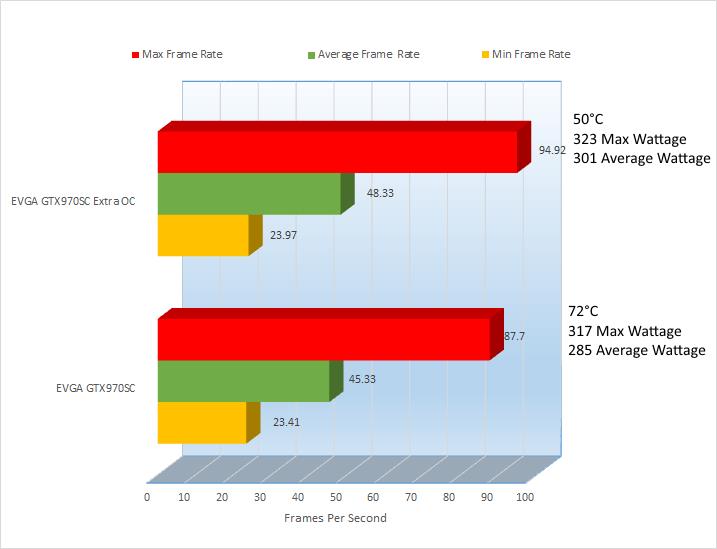

Let’s jump to Metro Last Light

1280 x 1024

Under the Average Frame Rate, the frame rate improved by 4.67FPS, a 6.70% improvement. The best improvement was under the Max Frame Rate which, although not as important as Average, did go up 14.17FPS, an 8.75% improvement. Oddly enough the Minimum Frame Rate dropped a bit, but again the most important number to look at is the average because that is what you will notice the most during game play.

Also notice that since the fan curve was adjusted, the temperatures are much better, and a cooler system is a happier system and potentially a longer living one, although the system is a little louder.

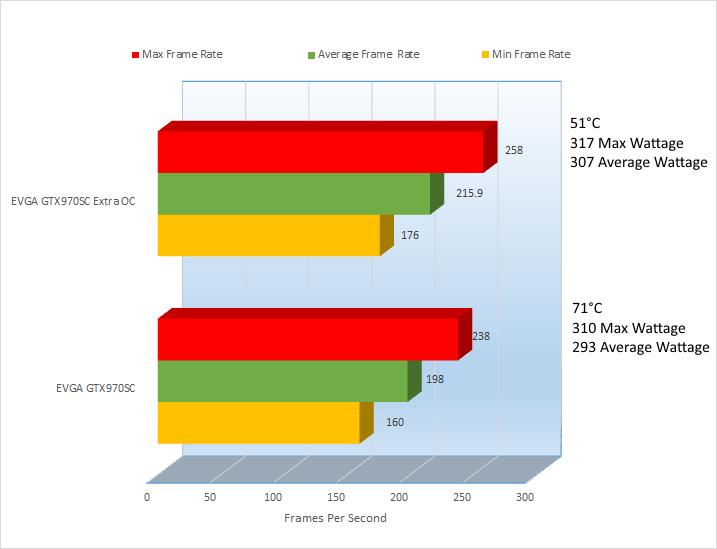

1920 x 1080

The average FPS in this one was slightly less impressive, but it still went up from 45.33 to 48.33, a 6.62% increase. Max again had the best improvement, from 87.7 to 94.92, an 8.23% increase. Minimum saw only a 2.34% improvement, but an improvement is an improvement nonetheless.

The wattage increase was only 1.86% but again the cooling improvement was impressive, 22°C lower.

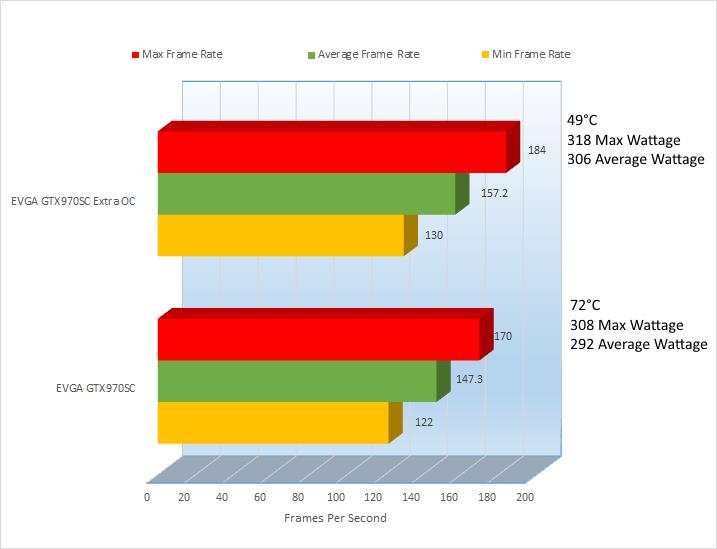

2560 x 1440

The average FPS went up 6.52%. Max saw only a slight improvement at 5.06%. Minimum saw a 6.78% improvement.

The wattage increase was a measly 0.63%, and the temperature stayed at a solid 50°C, 16°C cooler. I like those numbers.

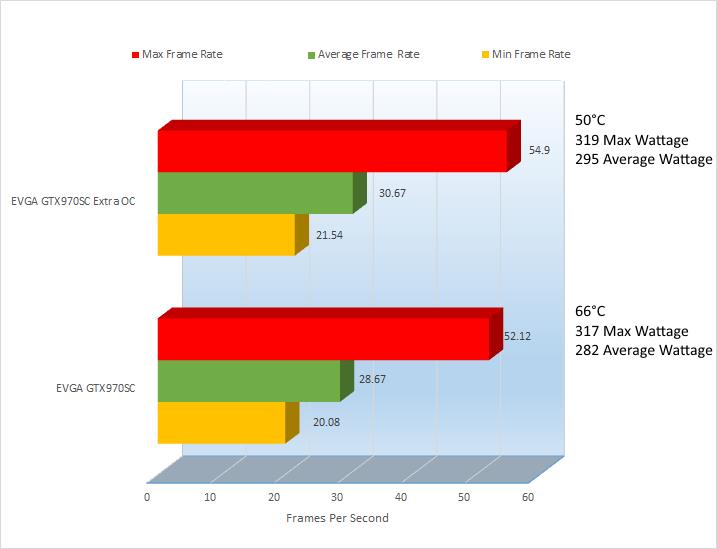

1280 x 1024

Thief does not seem to be entertained too much by my overclock and actually went down 0.97%. Not too much, but the overclock did bring up the Minimum a whopping 21.66%, almost flush with the Max and Average, which gives you almost seamless game play. The temperature drops 37% on the Extra OC and the wattage goes up by only 1.03%. Again, not the best example of an overclock, but a great example of making a game 100% playable.

1920 x 1080

Again Thief ignores my slight overclock, almost nullifying it on Max and Average, but the Minimum creeps closer again, trying to pull itself flush with its bigger brothers. Minimum went up 5.05%. The temperature went down 30.99% and wattage only went up 0.32%.

2560 x 1440

Max frame rate actually dropped 1.32%, while Average went up 6.73% and Minimum went up 6.58%. The temperature went down 31.94%, which is very nice, and the max wattage actually dropped 0.31%. Max again is not very important; the Minimum increased in all 3 tests, and the Average really only increased in the final test, although that is the most important one, at 2560 x 1440.

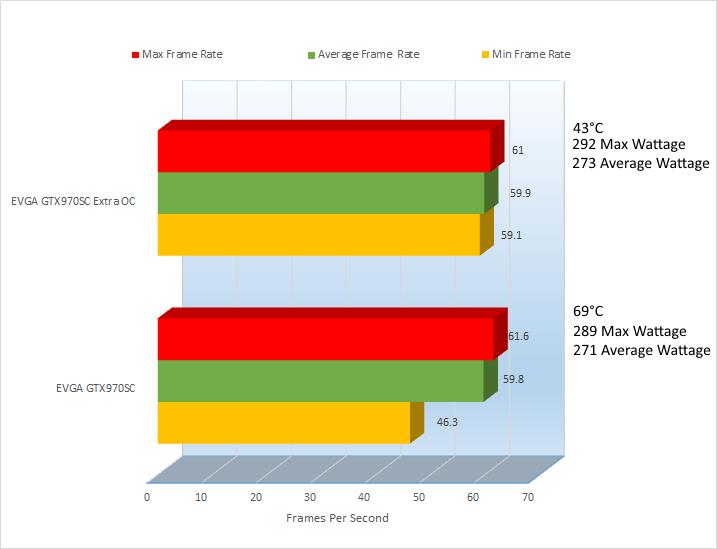

Again bringing up the rear is Tomb Raider. Let’s see how these scores compare to its non extra overclocked counterpart.

1280 x 1024

Huge increases all across the board here, showing that the smallest of overclocks makes a huge difference in Tomb Raider. Max FPS sees a 7.76% increase, while Average sees an 8.29% increase and Minimum pulls up a 9.1% increase. The temps rose from the previous overclocked tests, showing that Tomb Raider pegs the GPU a little higher, but the card still runs 28.17% cooler than in the original test.

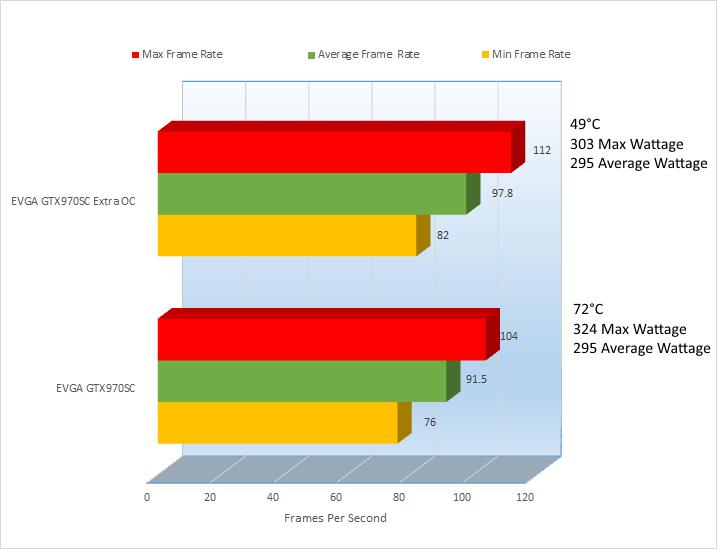

1920 x 1080

While not as impressive as the previous test, each test again came up ahead. Max improved by 7.61%, Average up by 6.72% and Minimum up by 6.15%. Cooling dropped by 31.94%, anyone could get used to that but again the wattage was raised 3.14%, an unfortunate side effect of overclocking.

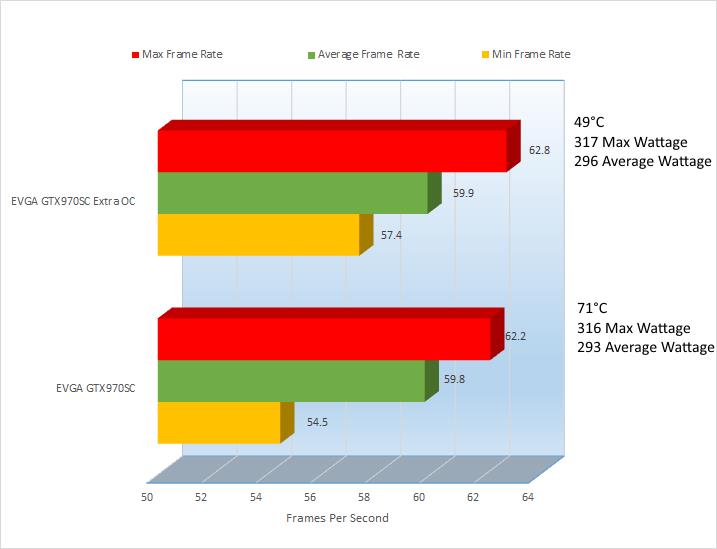

2560 x 1440

The difference again on the higher resolution was not as drastic as the lowest resolution, but still we were met with improvement all across the board. 6.48% improvement for Max framerate, 6.44% for Average and 7.32% for Minimum. The wattage was increased very sharply with the highest resolution at 6.48% but again our cooling stayed strong at 49°C, a 31.94% decrease in heat from the original tests.

While the overclock was not the highest we can see it did improve scores almost overall, and again, you do have room for improvement and more aggressive overclocks.

In our initial test, I played Battlefield 4 to see how this card fared. Let’s see how it fares with the overclock.

As you can see, there is an improvement. It is not huge, but any improvement is a step in the right direction.

So with this upgrade, I have put my trusty EVGA GTX660 TI aside and it feels like I have wasted my investment on my older card, but does it need to be that way? I remember way back when, always having an option of using my older NVIDIA based video card for PhysX. Well, let’s see if we can save this GTX 660 TI and what an older PhysX card can do to help a new Generation of video card, 3 Generations newer.

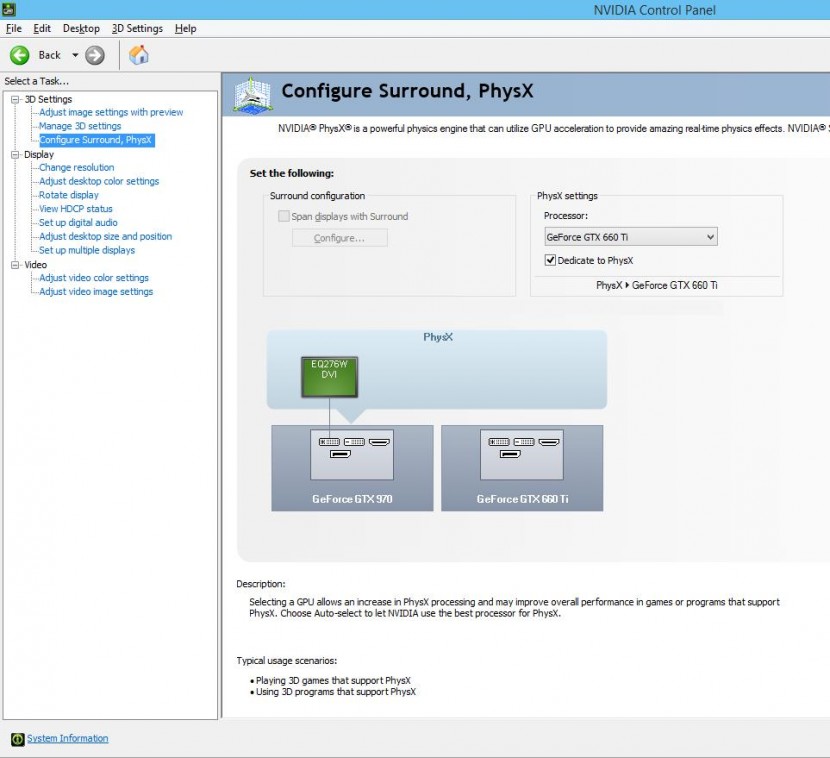

For this upgrade, we only need to put the GTX 660 TI in the next PCI-E slot (you can use my previous video on upgrading your card for reference) and attach the extra PCI-E cables to power this card. Since this is not SLI, you don’t need to use the SLI ribbon cable to connect the cards together. But how do you activate the dedicated PhysX card? Funny you should ask, here’s how.

First, right click on an open space on your desktop and select “NVIDIA Control Panel”

With the Control Panel open, click on “Configure Surround, PhysX”

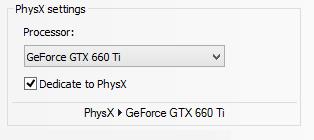

And under “PhysX settings”, you will see a drop down menu entitled “Processor:”. Click to display this menu.

In my case, I have a GTX 970 and a GTX 660 TI, since I will use the GTX 660 TI for PhysX, I select it here.

And afterwards, make sure you place a check in the box reading “Dedicate to PhysX” and click Apply and you are done, now let’s do some quick testing to see how much of an improvement we see.

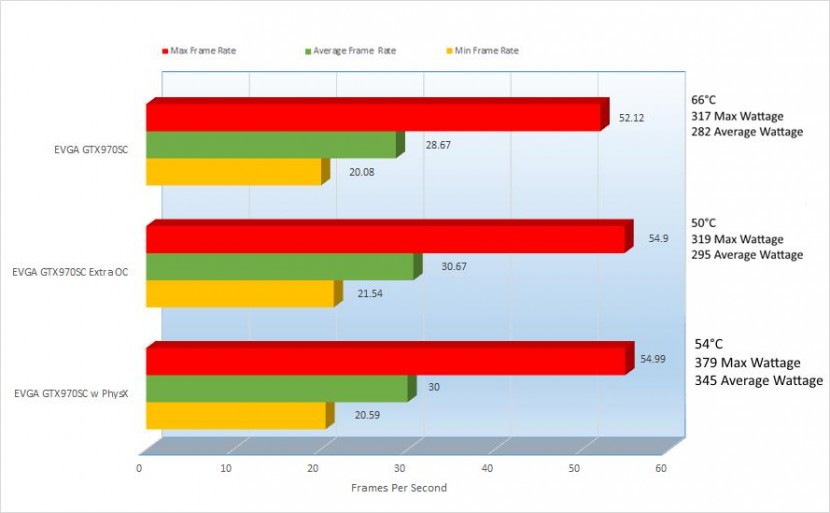

2560 x 1440

Here I have put together the results of benchmarking Metro Last Light at 2560 x 1440 for the standard SC, the Extra Overclock and the Extra Overclock with a dedicated PhysX card. It looks like PhysX does help the Extra OC slightly, but it raised the Max frame rate by only 0.16%, dropped the Average frame rate by 2.18% and raised the Minimum frame rate by 2.48%. On Metro the improvement is not very apparent, and it raises the heat by 4°C, Max Wattage by 15.83% and Average Wattage by 14.92%. So far, it seems that having this 2nd card as a dedicated PhysX card is more of a burden, but let’s see what Thief and Tomb Raider have to say.

2560 x 1440

Thief, again being defiant, decided not to improve anything across the board. All 3 frame rates (Max, Average and Minimum) went down, Max and Average wattage went up and even the temperature went up. No improvement whatsoever. What does Lara have to say about this?

2560 x 1440

Tomb Raider as we have seen before has been the most accepting of all in regards to changes, usually benefiting from all changes. In this case, it seems that the PhysX card was almost nonexistent aside from raising the overclocked temperature by 1°. Oddly enough it lowered the Max Wattage 3.70% from the standard SC clock and 2.88% from the Extra OC. Tomb Raider to me made no sense.

I figured offloading PhysX processing from the main card to a secondary card would improve performance, but it looks like I was wrong. Out of the 3 games tested, Metro Last Light was the only one optimized for PhysX by NVIDIA and was the one that saw the most improvement. The highest improvement was 2.48%, and with an Average Wattage increase of 14.92% alongside it, you could soon enough buy yourself a 2nd EVGA GTX 970 SC and use SLI with what you would be paying to the electric company, and maybe later you could treat yourself to a TRI SLI package.

EVGA to me has done it again, bringing in a great package. Improving on the reference design by adding a second fan, then upgrading the 2 fans even more with ACX 2.0, and improving the entire package by bumping its reference speeds with their SC version, EVGA has done this card right. Adding to the improvements, EVGA brings us this card at a decent price; while not the best pricing out of the GTX970 bunch, it is definitely not the highest, and it makes up for the difference with its customer service and community.

I will add that in encoding all of these videos for this review, the rendering time was noticeably improved due to NVIDIA’s Maxwell technology. I had noticed it while rendering and thought it was my imagination, but after doing more research into Maxwell I found that my imagination was a reality. NVIDIA seems to be really good at bringing imagination to reality.

I would recommend this card to anyone and everyone for performance both gaming and video encoding and to add to that of course performance in cooling. I would have given it a 5 if the pricing were a little more aggressive, aside from that the card is perfect.

What did you guys think of this? Was there something you think I should have added or maybe removed? I used your previous recommendations; I could use more if you have them.

I have spent many years in the PC boutique name space as Product Development Engineer for Alienware and later Dell through Alienware’s acquisition and finally Velocity Micro. During these years I spent my time developing new configurations, products and technologies with companies such as AMD, Asus, Intel, Microsoft, NVIDIA and more. The Arts, Gaming, New & Old technologies drive my interests and passion. Now as my day job, I am an IT Manager but doing reviews on my time and my dime.