We are influencers and brand affiliates. This post contains affiliate links, most of which go to Amazon and are Geo-Affiliate links to the nearest Amazon store.

Being a gamer is expensive. I know I have probably paid for a house with all the money I have put into PCs since I was 13… a ton of money. Looking back, it hurts to see how much I have spent, but I know it is a love affair that will continue. When I started, I didn’t have much money, and back in those days it didn’t matter because nothing was affordable on an IBM 5150, but it’s a bit different today. Gamers today have a choice ranging from an integrated solution on the CPU all the way up to a top of the line GPU that costs as much as a down payment on a car. Just above the integrated solution we have the Sapphire Radeon NITRO RX460OC 4GB 11257-02-20G, a budget card… Wait, wait, don’t go, you might be surprised.

I will slowly work you into this review, so please be patient. Let me start you off with the specs.

Specs and Features

- Engine Clock: 1175MHz

- Boost Engine Clock: 1250MHz (seems to always run at this speed, though)

- 4096MB GDDR5 128-bit RAM

- Memory Clock: 1750MHz

- 7000MHz Effective Memory Frequency

- Compute Shaders: 896

- Supports Crossfire

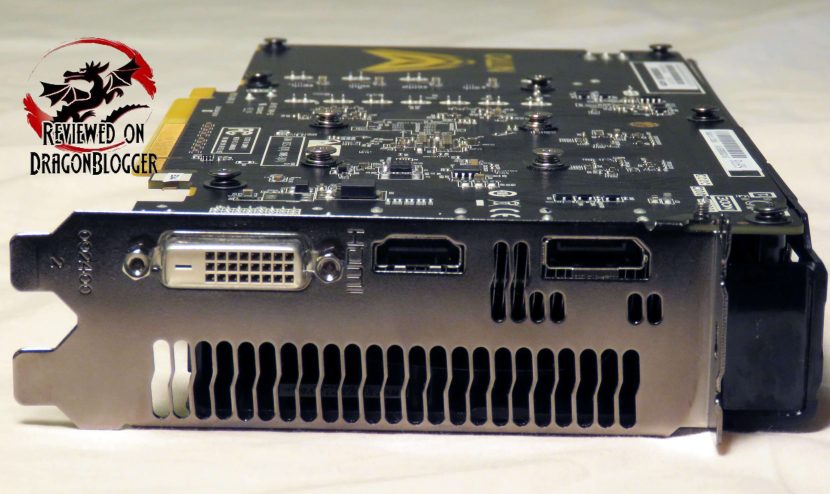

- 3 Output Maximum

  - 1 x DVI-D

  - 1 x HDMI 2.0b

  - 1 x DisplayPort 1.4

- Resolutions Supported

  - DVI-D: 2560 x 1600 (60Hz)

  - HDMI 2.0b: 3840 x 2160 (60Hz)

  - DisplayPort: 3840 x 2160 (60Hz)

- Supported APIs:

- OpenGL 4.5

- OpenCL 2.0

- DirectX 12

- Shader Model 5.0

- Vulkan API

- Supported Features

- CrossFire

- FreeSync Technology

- AMD Eyefinity

- Dual Bios

- AMD Liquid VR Technology

- AMD Virtual Super Resolution (VSR)

- AMD TrueAudio Next Technology

- AMD Xconnect Ready

- DirectX 12 Optimized

- Radeon VR Ready Premium

- HDR Ready

- Frame Rate Target Control

- NITRO Fan Check

- Dual X-Fans

- Two Ball Bearing

- NITRO Quick Connect System

- Dual-X Cooling

- NITRO Boost

- Black Diamond Choke 4

- Power Consumption: 150Watts

- System Requirements

- 400Watt Power Supply

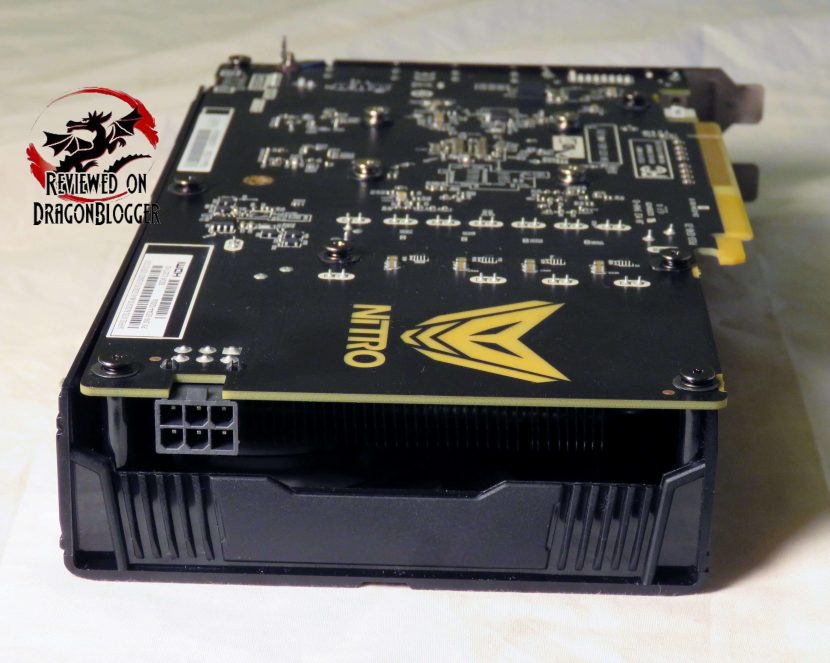

- 1 x 6 Pin AUX Power Connector (Yes, only 1)

- Windows 10, 8.1, 8 or 7

- Form Factor

- Length: 8.70in

- Width: 4.84in

- Depth: 1.49in

Lots of stuff there, I know, but let’s get into an unboxing.

You can connect up to 3 monitors to this little guy, even though it is a budget GPU.

You can attach an HDMI capable monitor, a DisplayPort capable monitor or even a DVI-D capable monitor. Chances are, no matter what monitor you have, you can connect it to this video card.

And if you still have a VGA monitor, you will need to get an adapter like the one above. Sadly these cards do not come with any adapters, though it might raise the price a bit if they did. Remember, it does not include one.

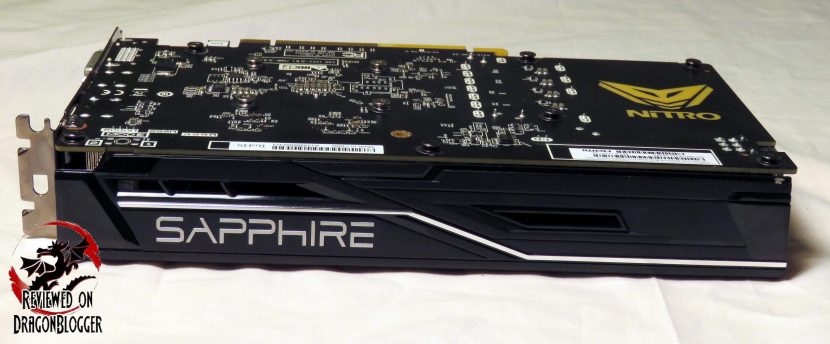

It has a basic no frills design, but it’s meant to be inside your PC so you may not look at it much. Once I install this into the system, I will show you the NITRO logo and how it glows. Let’s keep exploring this card.

Along the back of the card, we find the single PCI-e power connector, and it is only a 6-pin. This video card only requires a 400Watt power supply, as listed in the specs, keeping in line with the budget theme.

Along the bottom of the card, it’s pretty basic. We can see that Sapphire has an open air design here, allowing the heat to blow onto the board. We can also see that they have employed a dual heatpipe design to keep everything cool.

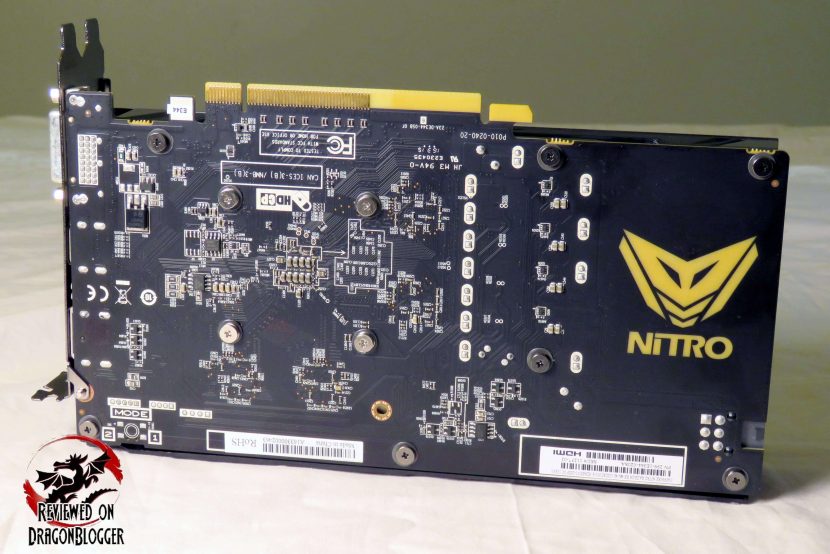

This is a budget card, so it does not have a backplate, but it does have a nice NITRO logo… it serves a bit of a purpose, which I will show you a little later.

And finally, back to the top of the card, a basic looking card, but we know it will keep cool with those 2 fans.

OK, I want to get into some performance testing, so I am going to go ahead and install it. I recorded a video to show you how to do it; check it out in the next chapter.

[nextpage title=”Installing the Sapphire Radeon NITRO+ RX 460″]

We have all been a noob at one point, not knowing how to install a video card, RAM, CPU and the like, so I recorded this video to help you guys out. I have done quite a few videos like this, so once you are done with this one, stick around to find some more of them.

Installing the card and other devices on your own will save you tons of money, since you won’t have to pay people to install them for you. You may save enough to buy another Sapphire Radeon NITRO RX460OC so you can CrossFire them and get even better performance.

Sorry, I ramble; on to the video.

And here is what the finished product looks like inside of my Anidees AI Crystal case. The case normally has a tinted tempered glass panel, so I removed it so that you can see the card in all its glory, with no glare either.

Quickies are not always bad, let’s move on to the next chapter where we can see how well or how poorly she runs.

[nextpage title=”Benchmarks, Performance, Temperatures and power consumption”]

While the video card is a budget card, the system is a little higher end, but this actually might be your scenario. You might have bought the perfect motherboard, processor and memory… but now you don’t have enough money for a GPU… well, this might be the best card to tide you over until you can get the latest and greatest… or maybe you will choose to stick with it.

Anyway, check out my system specs to maybe put your own performance with this card into perspective:

- Anidees AI Crystal Case:https://geni.us/6NAIJBN?e0Ww

- Intel Core i7 5930K Processor:https://geni.us/6NAIJBN?4C8Itd

- EVGA X99 Classified Motherboard:https://geni.us/6NAIJBN?E9eamo

- Arctic Liquid Freezer 240MM CPU Liquid Cooling:https://geni.us/6NAIJBN?vEaJAf

- Kingston HyperX Predator 3000Mhz 16Gig:https://geni.us/6NAIJBN?w9kPe5

- Sapphire Nitro RX460OC Video card:https://geni.us/6NAIJBN?vd4v

- Samsung 850 EVO 500GB SSD:https://geni.us/6NAIJBN?1gf0fs

- Hitachi 1TB SATA 3G HD:https://geni.us/6NAIJBN?pU2QOo

- Patriot Ignite 480GB SSD:https://geni.us/6NAIJBN?eoPVsG

- Kingston HyperX 240GB SSD:https://geni.us/6NAIJBN?8leEDW

- Plextor 256GB PCIE SSD:https://geni.us/6NAIJBN?gVBR

- Cooler Master Silent Pro Gold 1200W Power Supply:https://geni.us/6NAIJBN?Umwm

- Microsoft Windows 10 Professional:https://geni.us/6NAIJBN?GYbBRY

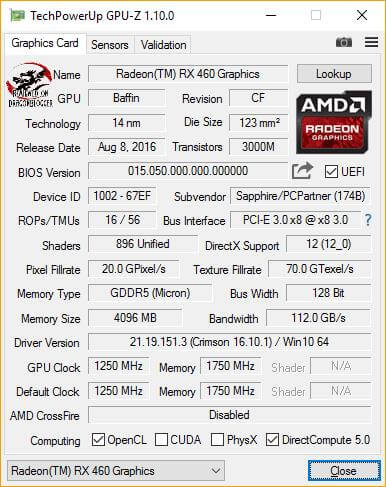

Ok, with all that, here are the specs of the card itself displayed on TechPowerUp’s GPU-Z.

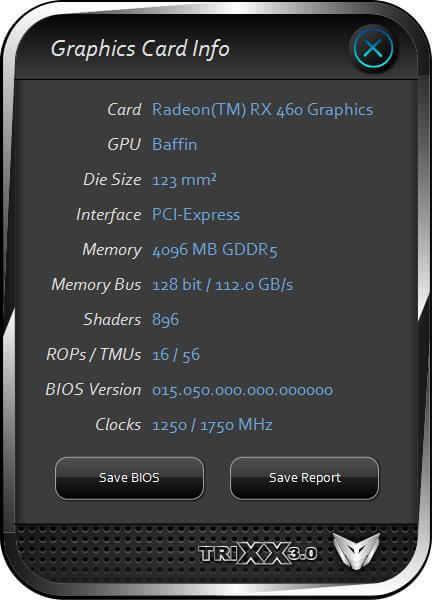

Sapphire’s own utility TRIXX 3.0 provides a lot of the same information.

I will use this program later on to overclock the card and perform a few other actions, and I will go into a lot more detail about the program so that you know how to use it. You can grab a copy of Sapphire’s TRIXX 3.0 here: http://www.sapphiretech.com/catapage_tech.asp?cataid=291&lang=eng

The Sapphire Radeon NITRO RX460OC 4GB card is based on AMD’s Baffin chip, part of the Polaris line. This chip is built on the latest and greatest 14nm FinFET process, which greatly improves performance and lowers the power requirements of the cards in its series, a very welcome set of features.

For some reason, GPU-Z did not show the number of shaders, but thankfully TRIXX does; you can see them above.

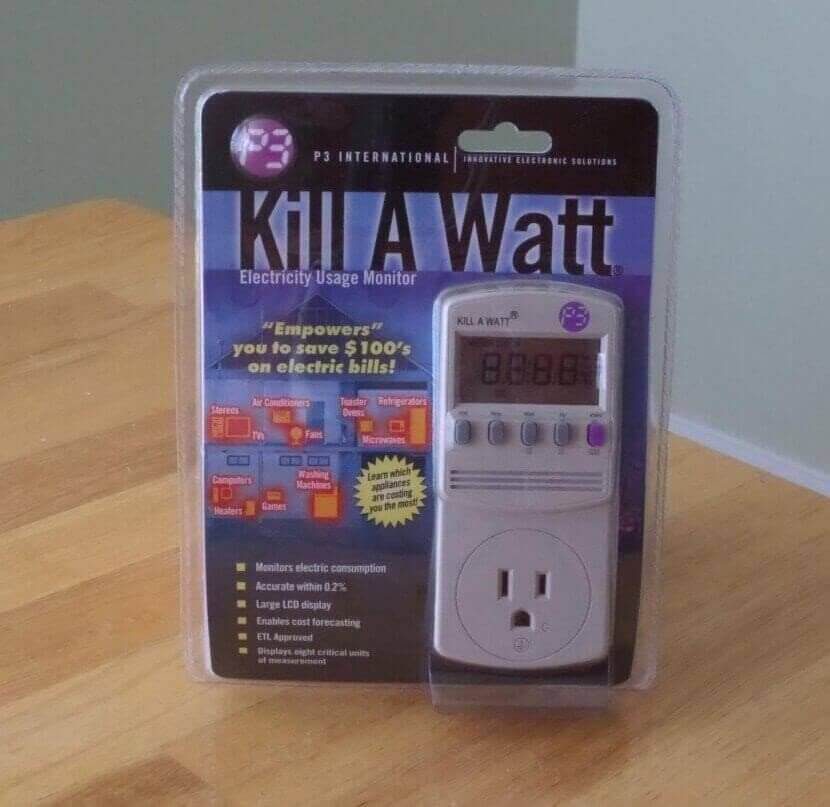

Next up are the benchmarks, but first I wanted to let you know my testing process. To begin with, all of my benchmarks have power consumption listed. I test for minimum, average and maximum power usage using the “Kill A Watt” by “P3 International”, a very handy little tool not only for testing PC power usage, but also for seeing how much power anything that plugs into a wall uses, which can help you lower your power bill.
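If you want to turn a wall reading like this into a rough cost, the arithmetic is simple: watts times hours gives watt-hours, divide by 1000 for kWh, then multiply by what you pay per kWh. Here is a minimal Python sketch. The 250 Watt reading, the 3 hours a day and the $0.12 per kWh are placeholder assumptions, not measurements from this review, so plug in your own numbers.

```python
def monthly_cost(avg_watts: float, hours_per_day: float,
                 price_per_kwh: float, days: int = 30) -> float:
    """Rough electricity cost for a device over a month."""
    kwh = avg_watts * hours_per_day * days / 1000  # watt-hours -> kWh
    return kwh * price_per_kwh


# Placeholder values: 250W average draw, 3 hours a day, $0.12 per kWh
print(f"${monthly_cost(250, 3, 0.12):.2f} per month")  # $2.70 per month
```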

The programs I am using to benchmark are the following.

- FutureMark’s 3DMark Fire Strike

- Metro Last Light

- Thief

- Tomb Raider

- Ashes of the Singularity

- Tom Clancy’s The Division

Later on, I will also show you some gameplay of a few games, but let’s get started benchmarking.

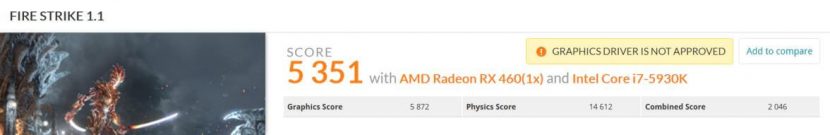

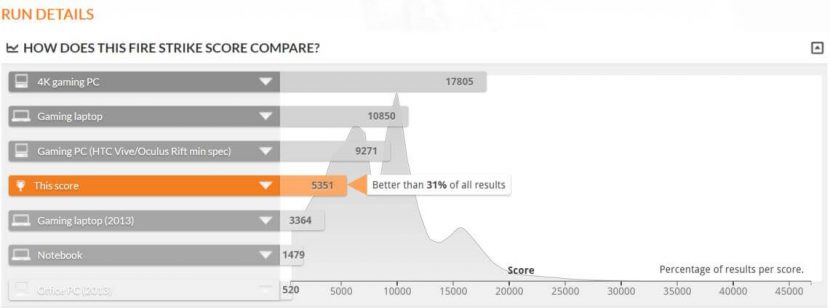

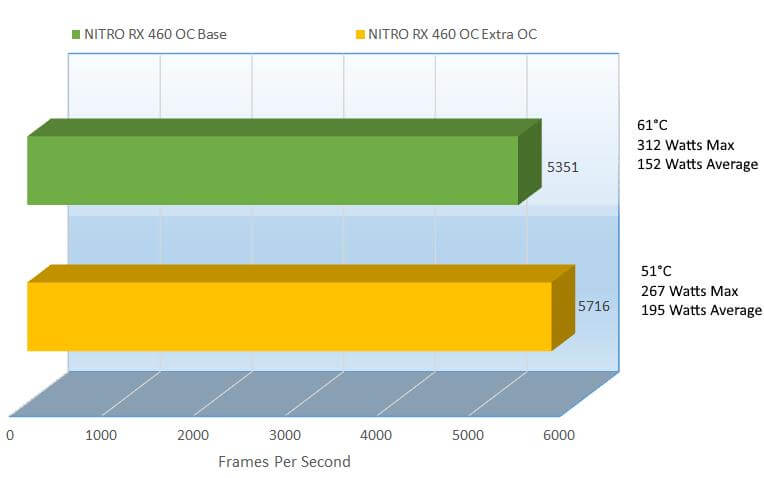

Budget GPU or not, the Sapphire Radeon RX460OC scores better than 31% of all other results, which is not bad at all. During the test, the lowest power usage was 152Watts, the average was 238Watts and the max, a blip, was 312Watts. The temperature peaked at 61°C. Since the GPU hit 61°C, the fans turned on, but they are very quiet; I will show you how loud they are a little later in the review.

Sadly the scores could not be official because the drivers are not WHQL. The base drivers were, but when AMD ports them over to Crimson for performance, they usually do not send them off to Microsoft for WHQL certification, since Microsoft charges for that.

Ok, let’s check out Metro Last Light.

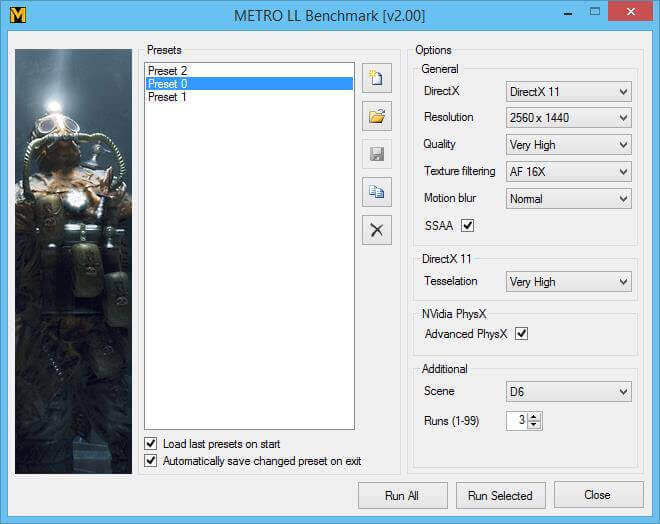

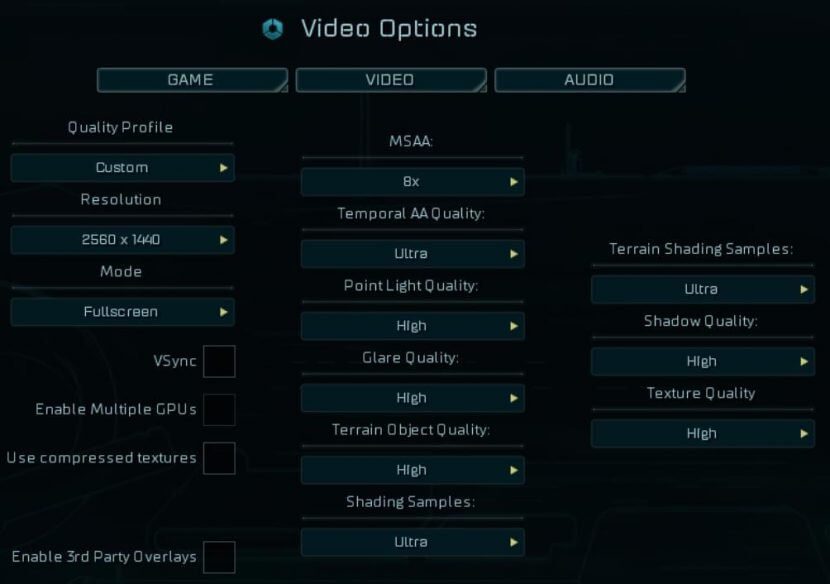

Here are my Metro Last Light presets, for each benchmark I will present the presets, changing only the resolutions.

The only difference between “Preset 2”, “Preset 0” and “Preset 1” is changing the resolution between 2560×1440, 1920×1080 and 1280×1024. Below are the results from each.

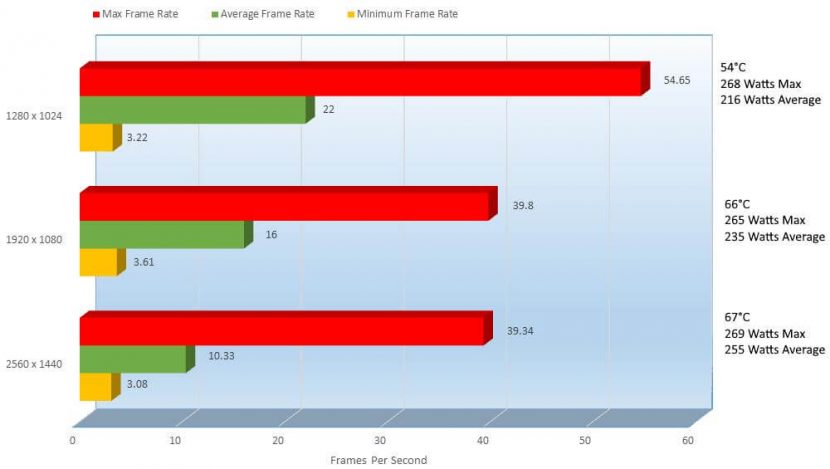

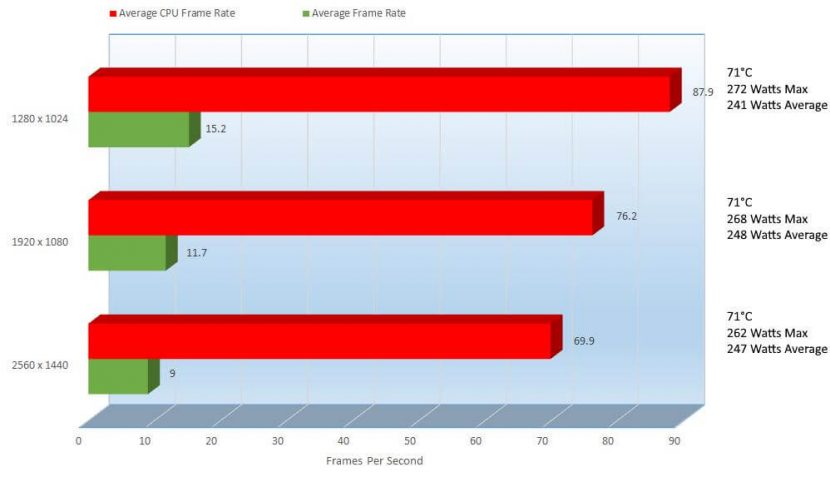

The performance here looks very rough, but you may be surprised to know that Metro taxes even the highest end card, so actually it’s not too bad here. Mind you at the settings I have laid out here, it is 100% not playable but if you were to take down the eye candy, surely it would be playable.

From 2560×1440 to 1920×1080, we can see that the frame rate increases by 5.67FPS, a 43.07% improvement. Going from 1920×1080 to 1280×1024, we can see a 6FPS increase, an improvement of 31.58%. From 1280×1024 up to 2560×1440 we can see an increase in both power consumption and temperature: a 21.49% increase in temperature and a 16.57% increase in power consumption.

The results here were a little loose since the gameplay was not good at all, but let’s move on to Thief to see if he can steal the show.

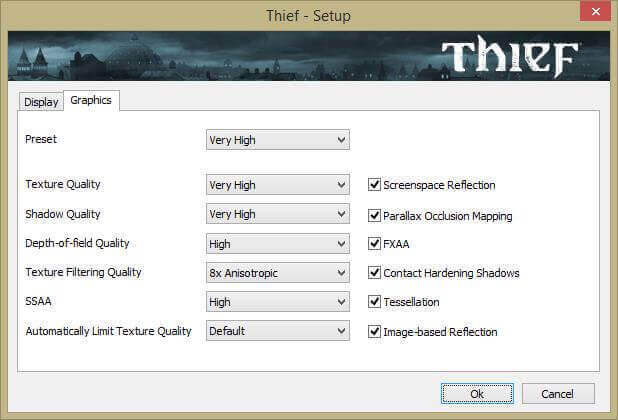

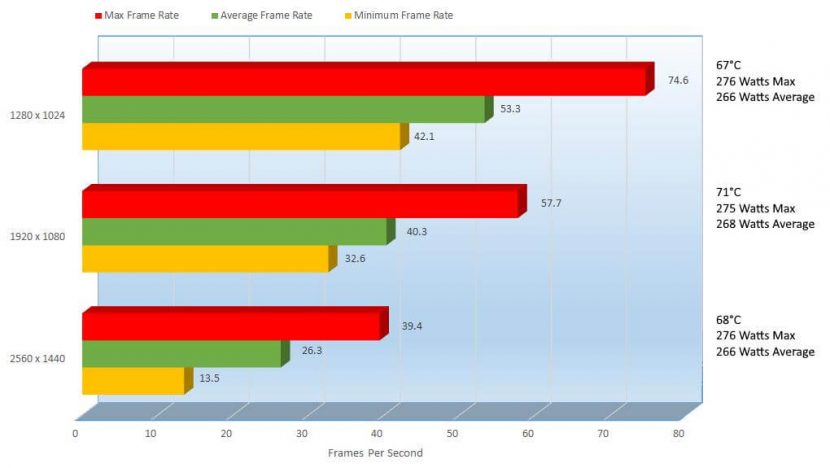

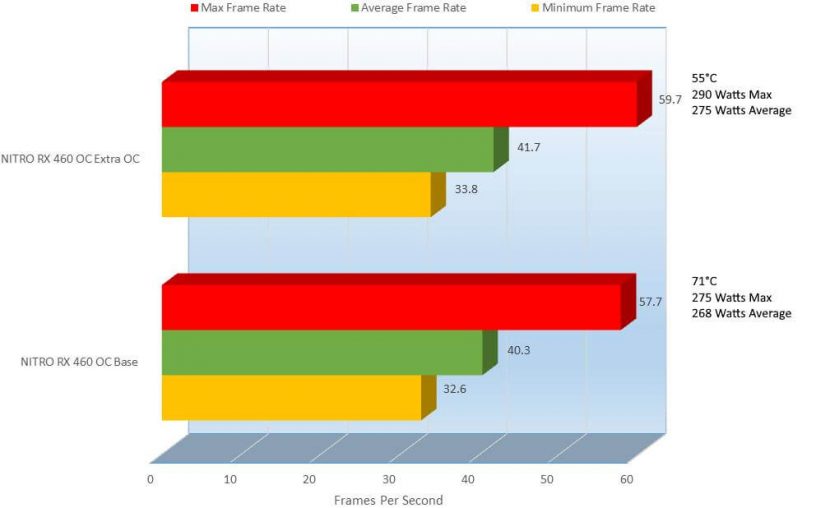

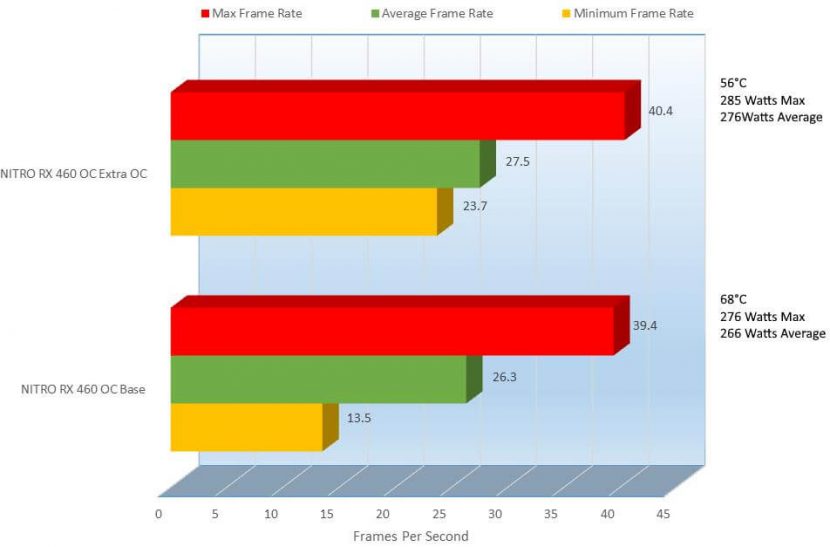

Thief gets very comfy showing the goods here now that things are much more playable. While 2560×1440 is not playable at 26.3FPS, 1920×1080 comes in at a much more reasonable 40.3FPS on average, peaking at 57.7FPS, a 42.04% improvement in performance. Oddly enough, even though the resolution dropped, power consumption at 1920×1080 was actually 0.75% higher. 1280×1024 is almost at the magical 60FPS, coming in at 53.3FPS, 27.78% faster than the average at 1920×1080 while consuming 0.75% less wattage as well. 1280×1024 here is very playable, almost perfectly playable, but if you wanted to play at 1920×1080, all you would need to do is drop some eye candy and surely you would hit 60FPS as well.
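A quick note on how the percentages in these comparisons work out. The figures quoted line up with a percent difference taken against the midpoint of the two values rather than against the starting value, which is why 26.3FPS to 40.3FPS shows as 42.04% and not the roughly 53% a plain percent increase would give. Here is a minimal Python sketch of both calculations; the function names are just for illustration.

```python
def percent_difference(a: float, b: float) -> float:
    """Absolute difference relative to the midpoint of a and b, in percent."""
    midpoint = (a + b) / 2
    return abs(b - a) / midpoint * 100


def percent_change(old: float, new: float) -> float:
    """Conventional percent change relative to the starting value."""
    return (new - old) / old * 100


# Thief average FPS at 2560x1440, 1920x1080 and 1280x1024
fps_1440, fps_1080, fps_1024 = 26.3, 40.3, 53.3

print(round(percent_difference(fps_1440, fps_1080), 2))  # 42.04
print(round(percent_difference(fps_1080, fps_1024), 2))  # 27.78
print(round(percent_change(fps_1440, fps_1080), 2))      # 53.23
```

Either method is fine as long as you compare like with like; just keep in mind that the midpoint version always reads a little lower for increases than a plain percent change does.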

This card is not looking too bad, but let’s see if Lara can pump up the score a bit.

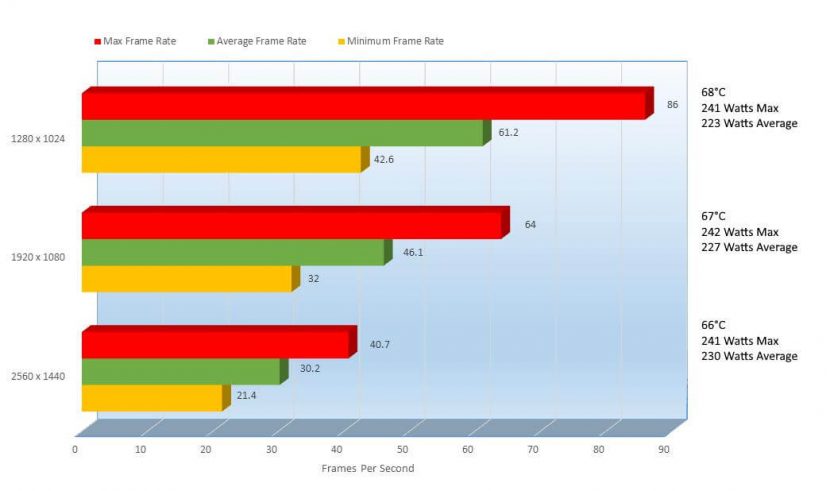

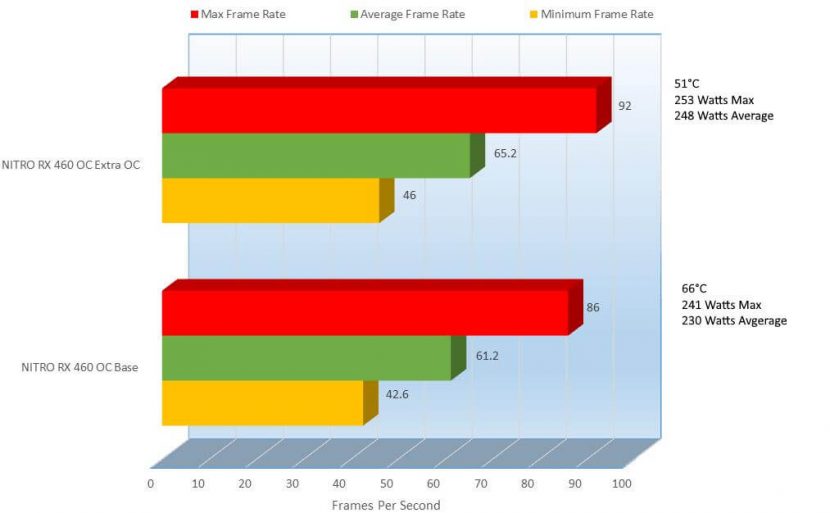

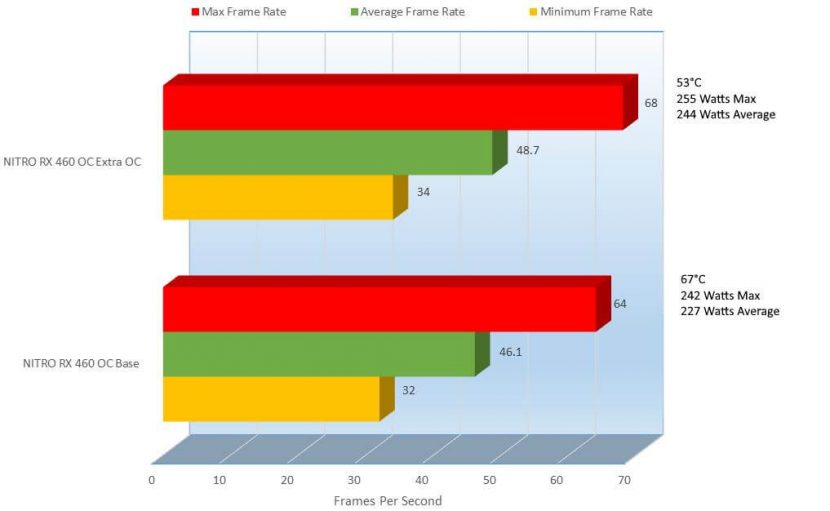

Alright, yet another decent score. At 2560×1440, she only hits 30.2FPS, but at 1920×1080 she is a lot smoother at 46.1FPS, a 41.48% improvement with only a 1.31% decrease in power consumption. Now coming in at 1280×1024 we have better than perfect at 61.2FPS, an increase of 28.15% and a decrease in power usage of 1.78%. Like Thief, all you would need to do here is drop some of the eye candy and you could easily hit 60FPS at 1920×1080. If your monitor can handle 1920×1080, I would suggest taking advantage of it. Let’s jump things into warp speed, or not… with Ashes of the Singularity.

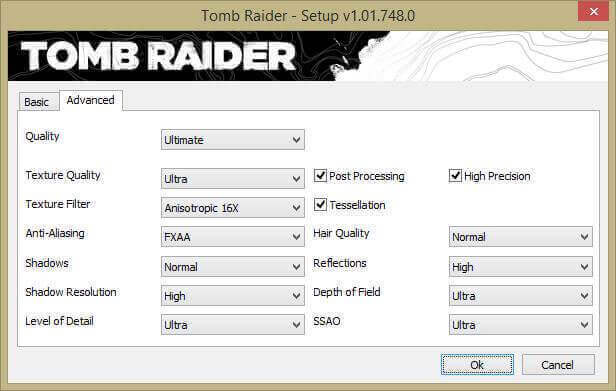

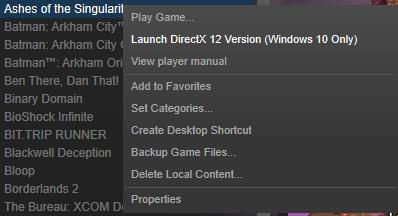

For Ashes of the Singularity, I test in DX12, though oddly enough you can’t actually enable DX12 from inside the game itself. Let me show you how I get to it.

From within Steam, right click on the title.

There you will see a drop-down where you will find “Launch DirectX 12 Version (Windows 10 Only)”; as it implies, this will only work in Windows 10. Once it is loaded, here are my settings.

Also, you know you are benching in DX12 when you get this message when you click “Benchmark”.

Notice under “API:” it reads “DirectX 12”.

Ashes of the Singularity heavily utilizes the CPU; you can see here it’s pegging the CPU quite hard. This game seems to chug a bit on any GPU I have thrown at it; it is either poorly optimized or it truly tortures the GPU. Even though it is a DX12 title, I have not seen a GPU handle it properly.

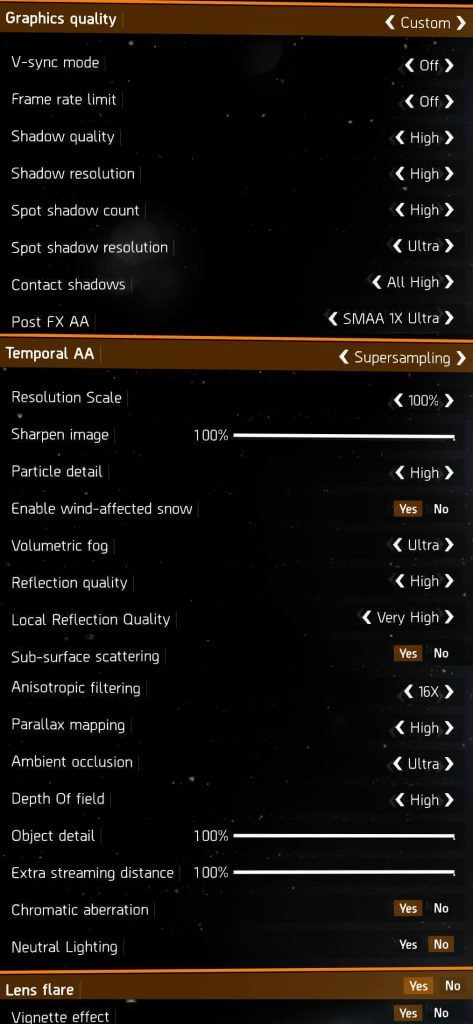

OK, let’s jump to our final benchmark, Tom Clancy’s The Division.

Here are the settings I defaulted at, again afterwards only changing the resolutions. There are a ton of settings.

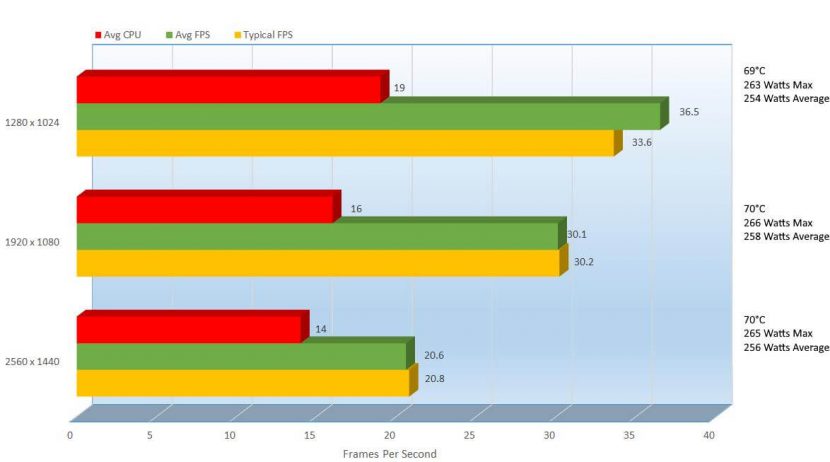

Like Ashes, The Division shows you how much CPU Utilization is occurring, but the reading is a bit more clear. We can see that this game utilizes both the CPU and GPU a little bit better, though it could be DX11 versus DX12.

Going from 2560×1440 to 1920×1080, we can see an increase of 37.48% in average FPS with only 1 more Watt consumed. The game seemed to run much better than 30.1FPS would suggest, and at 1280×1024 the 19.22% increase was noticeable, coming in at 36.5FPS. The mixture of the CPU and GPU allows the game to perform much better than you would think at this FPS.

This next chapter shows a bit of gameplay in some other games.

[nextpage title=”Gameplay and Performance”]

FPS and pictures are good and everything, but nothing is better than seeing it in action. So I will play a few games for you to show you what this card can do.

I played Paragon here, a pretty cool free MOBA tower defense type game, I liked it a lot and the graphics are pretty good too.

OK, that was actually pretty nice. I used Bandicam to record my videos, and normally I would use the H264 (CPU) codec, but the videos recorded under this setting looked like rainbows flowing in water, very odd. I switched to H264 (AMD APP) and everything recorded perfectly, but FPS took a big hit in this game and a few others.

With that explained, let’s jump to League of Legends, another free MOBA tower defense type game that’s loads of fun. Sadly I had never played this one before either, but I quickly fell in love with it.

Then here, I play an oldie but a goody. I used to play the original Counter Strike for days on end, though this is the first time I have played Counter Strike: Global Offensive, and it was pretty good. I thought I had a key but did not; thankfully my buddy Andreas Niggemann had an extra he spared for me. Thanks again Andreas.

The Sapphire Radeon Nitro RX460OC played this game super smooth, and it wasn’t even overclocked, though it too suffered the codec fate and played much smoother when not being recorded.

The games all ran very well, and there will be more coming soon, but I wanted to show you that. OK, so you saw the stock performance, and especially with this being a budget card, we want to squeeze a little extra performance out of it, so let’s give it a try.

[nextpage title=”Overclocking Performance, Benchmarks, Temperatures and Power Consumption”]

OK, so before I provide the results, let me show you how I got there. First off, this is not a quick thing, to overclock correctly, you have to spend a few hours, have some patience, a paper and a pen because you will be there for a while recording your previous attempts.

Below, I will of course list before and after benchmark results, but first I will show reports from Sapphire’s TRIXX 3.0 and GPU-Z.

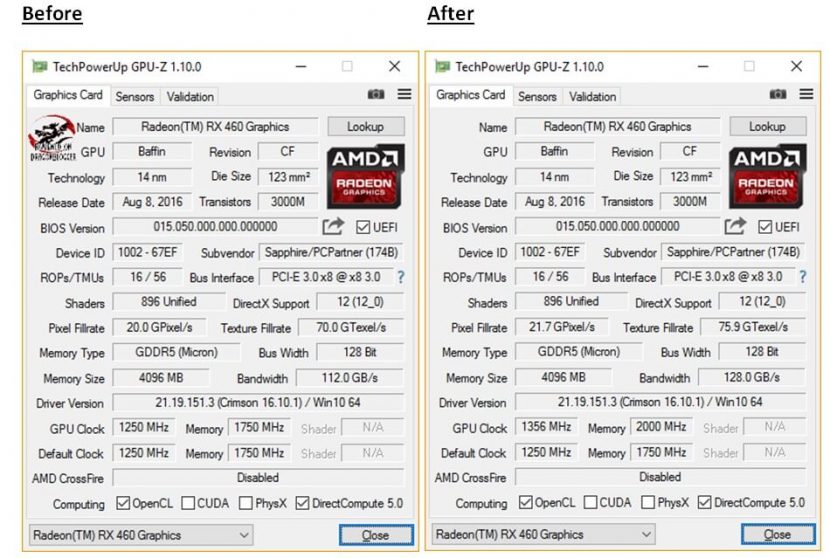

I was able to get an extra 106MHz from the GPU clock and an impressive 250MHz from the memory clock. With that, I was able to raise my bandwidth, pixel fill rate and texture fill rate. Surely I could have gone much higher, as it was a great overclocking card, but I didn’t want to spend too much time overclocking; I wanted to give you my confirmed clocks as a reference. Please remember, even if you buy the exact same card, the performance and overclockability may not be the same; yours may be able to clock higher or not very high at all. It’s the nature of the beast.
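To put those numbers in context, here is a small Python sketch of the arithmetic. It assumes the gains sit on top of the stock 1250MHz GPU clock and 1750MHz memory clock from the spec list, uses the 128-bit bus from the specs, and relies on GDDR5 moving data four times per memory clock (which is how 1750MHz becomes 7000MHz effective). The function name is made up for illustration, and the bandwidth is the theoretical peak, not a measured figure.

```python
BUS_WIDTH_BITS = 128   # from the spec list
GDDR5_TRANSFERS = 4    # GDDR5 transfers data 4 times per memory clock


def memory_bandwidth_gbs(memory_clock_mhz: float) -> float:
    """Theoretical peak memory bandwidth in GB/s."""
    effective_mhz = memory_clock_mhz * GDDR5_TRANSFERS
    bytes_per_transfer = BUS_WIDTH_BITS / 8
    return effective_mhz * 1e6 * bytes_per_transfer / 1e9


stock_gpu, stock_mem = 1250, 1750
oc_gpu, oc_mem = stock_gpu + 106, stock_mem + 250

print(oc_gpu, oc_mem)                   # 1356 2000
print(memory_bandwidth_gbs(stock_mem))  # 112.0
print(memory_bandwidth_gbs(oc_mem))     # 128.0
```

So under those assumptions the memory overclock moves the theoretical bandwidth from 112GB/s to 128GB/s.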

Before

After

I modified the GPU clock

Then also raised the “Power Limit”

Also the “GPU Voltage”

The “Memory Clock”

And finally the “Current Fan speed”

This program does more than just overclock the card, but I will get into that a little later in the review. Let’s get into the comparisons.

The performance difference here is pretty nice; we can see a 365 point increase in 3DMark, a 6.60% improvement. As you would have imagined, the average power consumption increased as well, by 24.78% from 152Watts to 195Watts, but we did raise the voltage quite a bit there. Also, the temperature went down 10 degrees because I now had control of the fan; it was a little louder, but with the side panel on it was barely noticeable.

For the games, I will be pairing the tests based on resolution, keeping things relevant and basing the scores only on average FPS since min and max do not mean as much.

With that said, let’s see what this means for Metro Last Light.

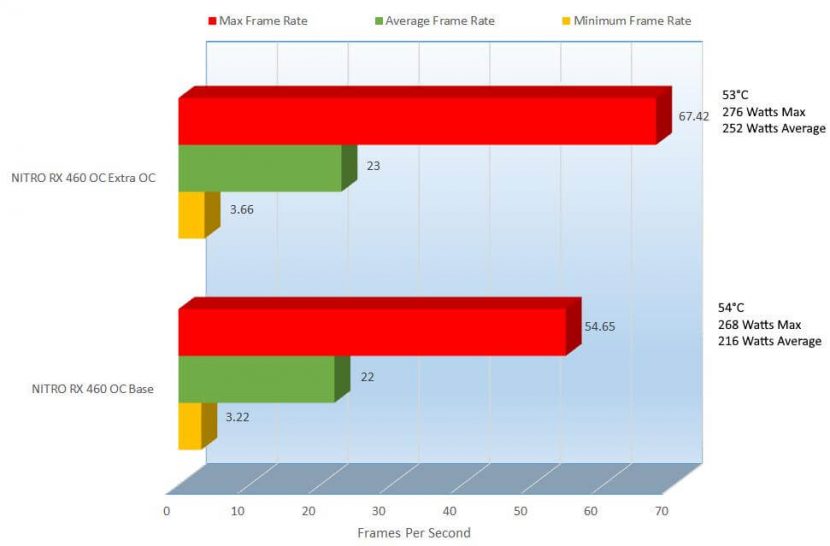

1280×1024

From this overclock we can see the average only went up a single FPS and on the flip side, the temperature went down a single degree. Wattage on the other hand did go up about 13.38%. Sadly still not playable, but let’s see what 1920 x 1080 brings.

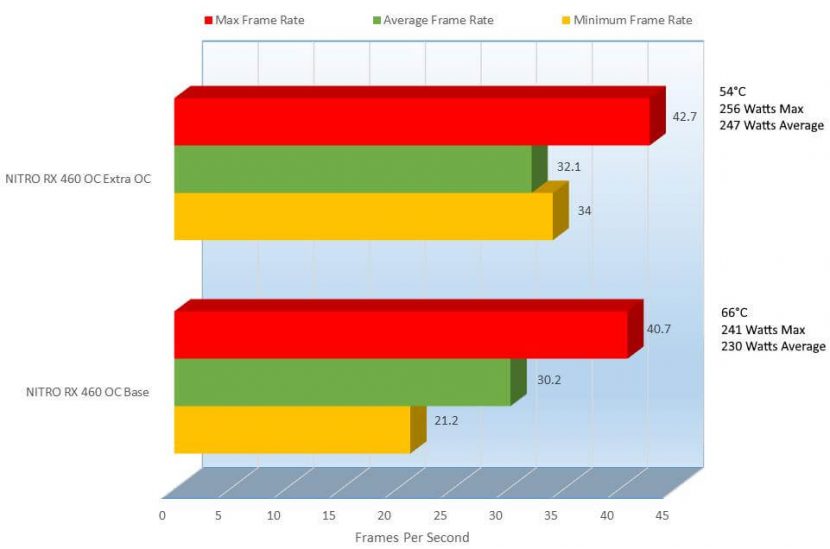

1920×1080

Well, at 1920×1080, we increased FPS only 2.04%, and yet again the temperature dropped, from 66°C to 53°C, a 21.85% decrease in thermals, always a welcome treat. Since we did raise the voltage, we pulled an additional 2.61% more power on average, which is not too bad. Now on to 2560×1440.

2560×1440

I didn’t expect 2560×1440 to be any better and, well, I was not disappointed. There was an increase of 0.34FPS, a 3.24% improvement. Due to the custom fan control, the temperature dropped from 67°C to a pleasant 53°C, a 23.33% improvement in thermal performance. Now you will notice the wattage did drop; lower temperatures usually mean lower power consumption and we can see that here. On average the stock Sapphire Radeon RX460OC pulled 255Watts, and with a little extra oomph we see that it dropped 3Watts, a 1.18% decrease in power being consumed.

Thief will pick us up and take us, though I don’t know where.

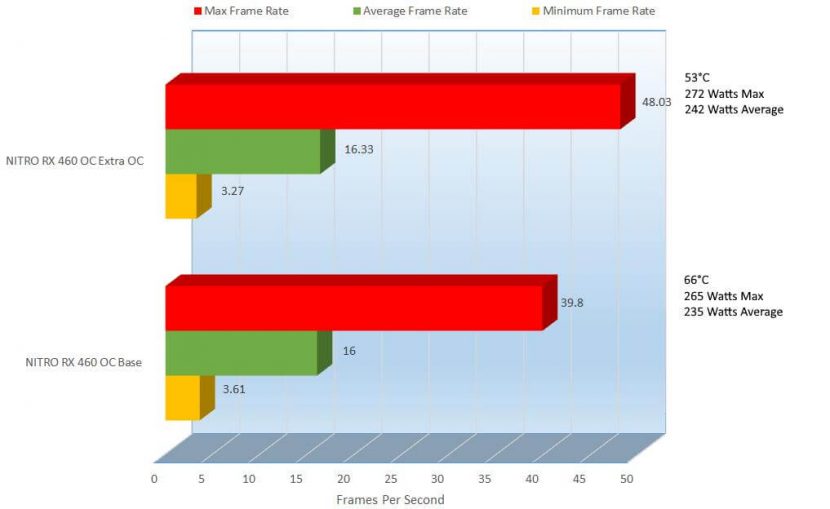

1280×1024

Thief for now has stolen the show, making the PC fun again and bringing much more playability. We started off at 53.3FPS and with the overclock, we have gone up to 55.5FPS a 4.04% increase in performance. With that, we see that the temperature dropped 19.67% below the stock temperature of 67°C though the wattage did increase 3.69% to 276Watts. Let’s see if a resolution of 1920×1080 produces playable results.

1920×1080

While the FPS here is not 60FPS, this can get so much better by just lowering a few things. Aside from that, we did see an increase from 40.3FPS to 41.7FPS, a 3.41% improvement, and with that, the temperature dropped a considerable 25.40%, from 71°C to 55°C. Power draw did go up from 268 to 275Watts, a 2.58% increase in power consumption. How about 2560×1440?

2560×1440

Ouch. While the overclock brought an improvement of 4.46%, it was a very tiny one that does not make too much of a difference. Keeping up with the trend, the temperature did drop 19.35%, though the average wattage being used went up 3.69%.

In Thief, we saw things much more playable and the temps at a great place; let’s see how Lara reacts to this increase.

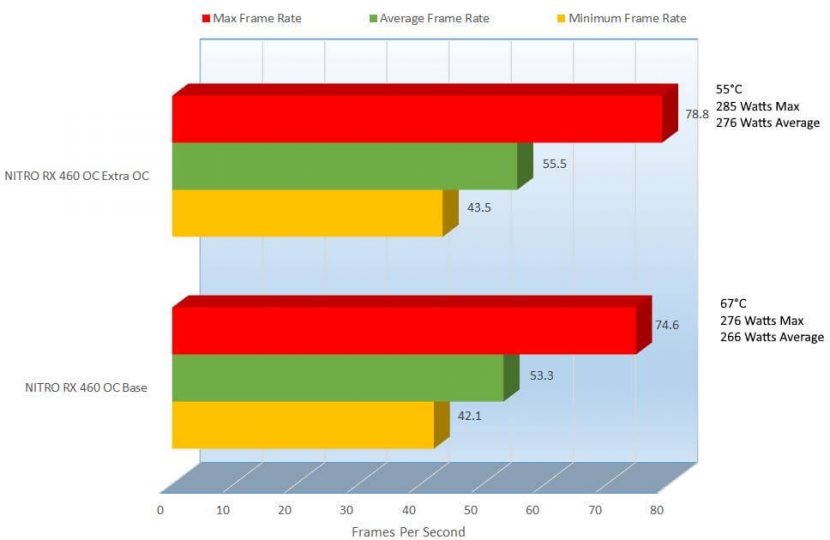

1280×1024

Lara takes care of us, giving us a smooth 65.2FPS, 6.33% above the stock 61.2FPS, and with that she gives us the cold shoulder at a chilly 51°C, a 25.64% improvement in cooling over the stock 66°C. The wattage did go up a bit, consuming 7.53% more power than its stock counterpart. What does 1920×1080 hold for us?

1920×1080

At 1920×1080 we can see a 5.49% improvement from 46.1FPS to 48.7FPS. An increase in performance is always welcome and it will make the game a little more playable, but at 48.7FPS you will notice a little stutter. The temperature improved from its base 67°C to a nice 53°C, but the power consumption shot up a bit from 227Watts on average to 244Watts, an increase in power usage of 7.22%. Ok, last but not least, let’s see what 2560×1440 can do.

2560×1440

2560×1440 for a budget card is a bit rough, and she did what she could, bringing in 32.1FPS over the stock 30.2FPS, a 6.10% improvement. The power did go up as well, with 7.13% more watts being pulled, but she did stay at a nice 54°C, a 20% improvement over the stock 66°C.

Let’s continue with Ashes of the Singularity, and hope for out of this world performance.

1280×1024

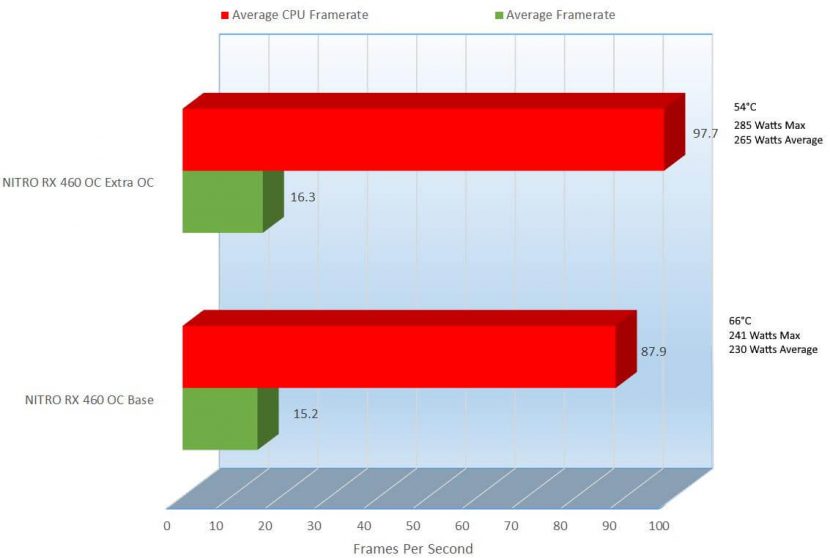

Ashes is really a rough benchmark to gauge; the results are not very telling. In this, the CPU compensates for the lower end GPU, and to top it off, it is running in DX12, which helps that much more. We can see here that the CPU, while not overclocked at all, jumped to 97.7FPS and the GPU jumped to 16.3FPS, a 63.98% improvement on the GPU side. The temps did drop yet again from 66°C to 54°C and the power consumption did go up 14.14%.

The game was pretty smooth here, but when all of the ships got together, you can see that she struggled a bit. Let’s check out 1920×1080.

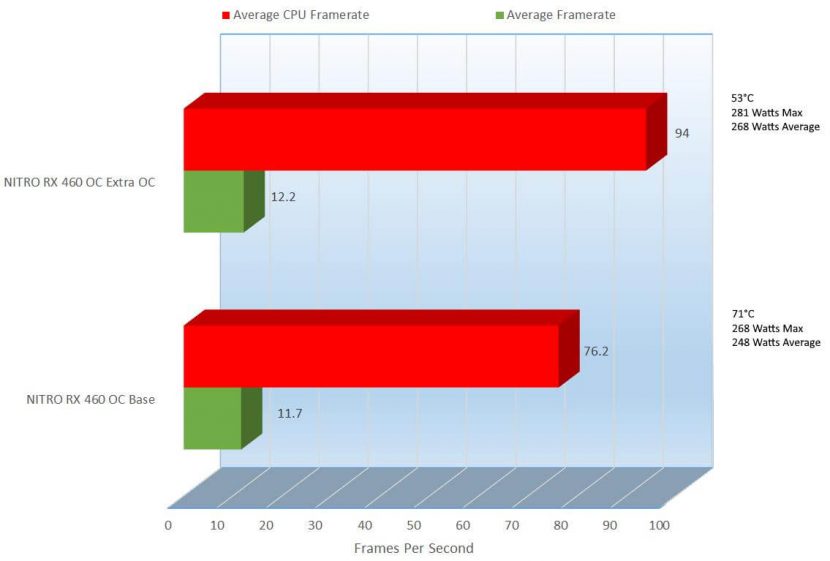

1920×1080

The overclock here turns up an additional 0.5FPS, but in the Average CPU Framerate, we can see a huge 17.8FPS increase. Not to get off the subject of the GPU, but I thought I would mention it. The temperature took a nice drop from its pretty high 71°C to a very comfortable 53°C, a 20.92% improvement in cooling. OK, on we go to 2560×1440.

2560×1440

At 2560×1440 we gain 0.5FPS, but the CPU steps in and covers, coming in at 86.7FPS, 16.8FPS above the stock 69.9FPS. As usual, the temperature dips well below its stock 71°C to a very cool 54°C, but power comes in 3.19% above the stock 247Watts, pulling up to 255Watts.

Ashes of the Singularity was a tough one, at 2560×1440 almost unplayable but let’s see if Tom Clancy can bring it back with The Division.

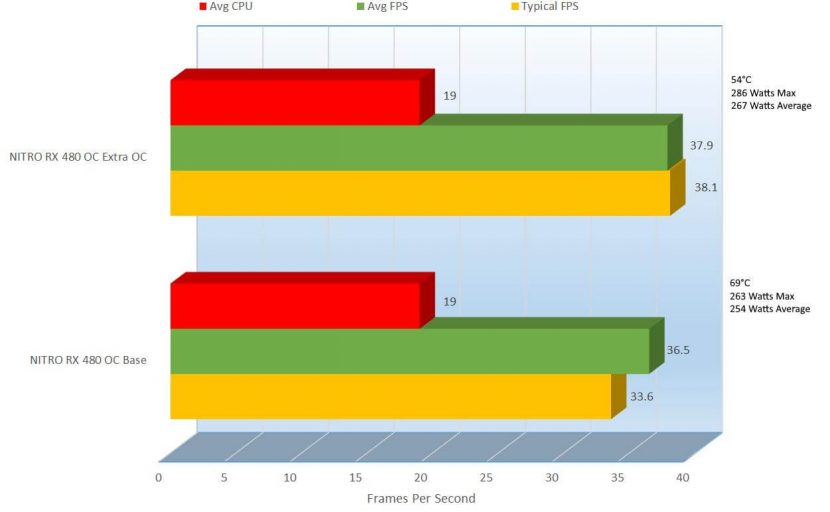

1280×1024

The extra overclock provides an additional 3.76% performance at 37.9FPS above the stock 36.5FPS, not too much but every bit helps. Power consumption goes up 4.99% at 267Watts above its stock wattage of 254Watts. Let’s check out 1920×1080.

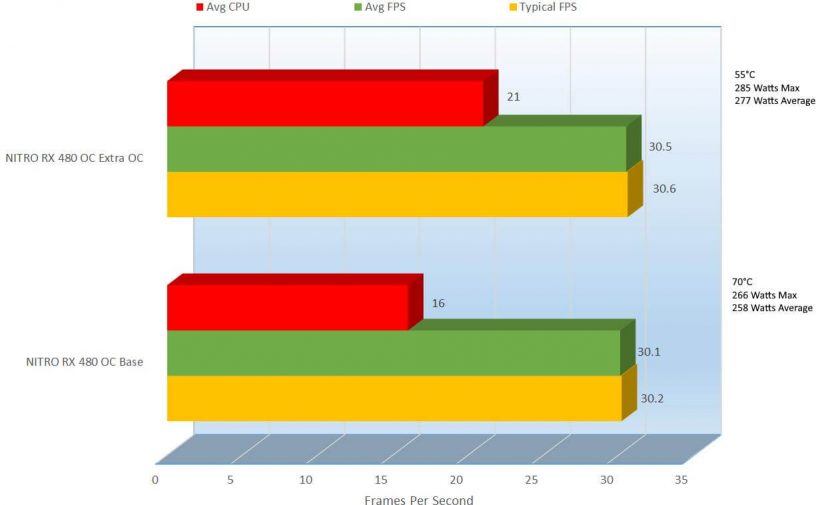

1920×1080

At 1920×1080, the CPU swoops in to try and give the GPU a hand, raising itself from 16FPS to 21FPS, while the GPU bumps itself up slightly to 30.5FPS, a tiny 1.32% increase in performance over the 30.1FPS at stock. The temps on this one came in high at 70°C at stock and a nice 55°C on the OC, a 24% improvement, but the power got hit again. The average consumption at stock was 258Watts and overclocked it came in at 277Watts, a 7.10% increase. Last on the benchmarks is 2560×1440.

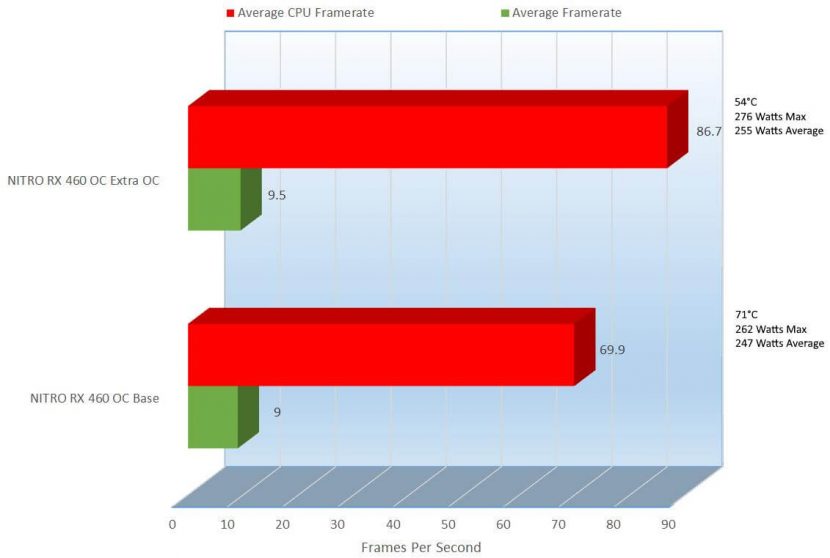

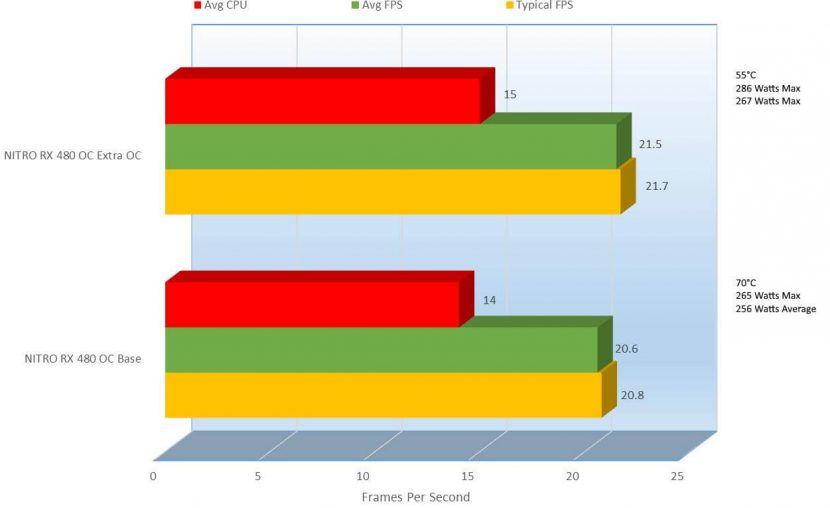

2560×1440

This is a bit of an odd one; everything, at least on the performance side, decided to improve by one or at least very close to it. The Average CPU FPS increased by 1FPS, the Average FPS improved by 0.9FPS, a 4.28% increase in performance, and there was yet again an improvement in cooling as well. The stock clocks heated the card up to 70°C and the overclock settings brought it to a much more manageable 55°C, a 24% improvement in thermals. Needless to say, the average power consumption went up from 256Watts to an overclocked 267Watts, a 4.21% increase in power use.

We can see in these results throughout the benchmark section that the GPU did pull its weight in situations that make even higher performance cards sweat a bit. With all the eye candy on, mostly every test performed well at 1280×1024, which means that with the eye candy down a bit, 1920×1080 will play just fine in most games.

So let’s take a moment and see how we were able to obtain these clocks.

[nextpage title=”TRIXX Overclocking and Card Utility”]

Sapphire TRIXX 3.0 is what I used to overclock this card and raise the fan speeds, but how does it work? I will show you here, which may help you overclock this card when you get it. Please only use it for reference; not all cards clock the same. For those of you learning, I will just quickly breeze over it.

The way I recommend doing it is to start from the bottom: start off your tests at stock and slowly increase, taking notes as you go. Please take note, this is my approach; everyone has their own approach to overclocking.

Here is again the overclock shown from within TRIXX 3.0 itself.

The stock clocks here, if you remember, were 1250MHz on the GPU and 1750MHz on the memory, so we have come up a bit, and it did take me some time and lots of failed attempts to get here. I am sure I could have gone higher, but for time’s sake I present you with the above, a decent overclock I would say.

To start, raise your GPU Clock 5MHz and click “Apply”, then run a round of “3DMark” and a benchmark of one of your most graphically intense games. I use “Metro Last Light”; it catches mostly everything wrong with an overclock. Actually, this time Ashes of the Singularity caught a mistake, and it was my second to last benchmark; once I found the mistake I had to redo all of my results, which took an extra day.

Once the results are in, write them down, then raise the clock 5MHz more and repeat the process. If during your testing the machine freezes or you notice artifacting or tearing (spots appearing on the screen, missing or stuck textures), then it’s time to raise the “Power Limit” bar, and if that fails, then also raise the GPU Voltage and test again. With every change you make, make sure to click “Apply” and write it down.
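The loop described above can be sketched in Python. This is only an outline of the manual process, not something that talks to TRIXX (TRIXX is a GUI tool and nothing here calls into it). `is_stable` is a hypothetical stand-in for running 3DMark and a game benchmark by hand and watching for freezes or artifacts, and the step sizes and caps for the power limit and voltage are made-up values for illustration. As a simplification, the sketch raises the power limit up to its cap before it touches the voltage.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Settings:
    gpu_clock_mhz: int
    power_limit_pct: int = 0
    voltage_offset_mv: int = 0


def find_overclock(
    stock_clock_mhz: int,
    is_stable: Callable[[Settings], bool],
    step_mhz: int = 5,
    max_clock_mhz: int = 1500,        # assumed ceiling so the loop always ends
    max_power_limit_pct: int = 50,    # assumed cap
    max_voltage_offset_mv: int = 50,  # assumed cap; keep this conservative
) -> Tuple[Settings, List[Tuple[Settings, bool]]]:
    """Raise the clock one step at a time, noting every attempt."""
    notes: List[Tuple[Settings, bool]] = []  # the "paper and pen"
    best = Settings(stock_clock_mhz)
    while best.gpu_clock_mhz + step_mhz <= max_clock_mhz:
        attempt = replace(best, gpu_clock_mhz=best.gpu_clock_mhz + step_mhz)
        while True:
            stable = is_stable(attempt)
            notes.append((attempt, stable))
            if stable:
                break
            # Unstable: raise the power limit first, then the voltage.
            if attempt.power_limit_pct < max_power_limit_pct:
                attempt = replace(
                    attempt, power_limit_pct=attempt.power_limit_pct + 10)
            elif attempt.voltage_offset_mv < max_voltage_offset_mv:
                attempt = replace(
                    attempt, voltage_offset_mv=attempt.voltage_offset_mv + 10)
            else:
                return best, notes  # out of headroom, keep the last good settings
        best = attempt
    return best, notes


# Pretend card for the demo: fine up to 1300MHz as-is, and up to 1356MHz
# once the power limit is at +20% or more. Real cards will differ.
def fake_is_stable(s: Settings) -> bool:
    if s.gpu_clock_mhz <= 1300:
        return True
    return s.gpu_clock_mhz <= 1356 and s.power_limit_pct >= 20


best, notes = find_overclock(1250, fake_is_stable)
print(best)
```

With the pretend card above, the search settles on 1355MHz with a +20% power limit and no extra voltage, and `notes` holds every attempt along the way, failed ones included.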

Be careful with the GPU Voltage; if you raise it too much you can potentially damage the card, and even on the lighter side you will eat up a lot more power needlessly.

As you are raising the GPU Clock, you will also want to work on the Fan Speed. I recommend clicking “Custom”, which opens up the “Custom Fan Speed” section where you can raise/lower the fan bar.

Check out exactly how to use it and how loud the cards get

Once you have reached a stable GPU speed and achieved adequate cooling then I recommend you start working the same process on the Memory Clock.

Save your work often, you can do this on the profiles.

Click on any one of those numbers and click “Save” the settings.

Use the same methods for Memory Clock that I mentioned for GPU Clock.

Here you will raise the “Memory Clock” slider

And like before, click “Apply” to apply the settings.

One thing to mention about overclocking with Sapphire’s TRIXX 3.0: when you restart your computer, at times the “GPU Voltage” will appear to be “0”, as will the “Power Limit” setting. This is probably occurring because it is a beta; it is good, but it’s not perfect yet, and this is one of its quirks. The version I used here was v6.1.0.

If you do notice the 0, just click on the “Profile” you have saved your overclock to and click “Apply”, this will set everything as you last saved it.

TRIXX gives you a few more features, I will list them here.

FanCheck: A utility built into Sapphire’s TRIXX 3.0 that allows you to check the life of your fans, which is useful since you are actually able to easily replace these fans.

This will individually check each fan, or it should. In this version I see that it only shows 1 fan. I spoke with Sapphire support and they mentioned that TRIXX for now is considering this card as a reference card, the reference cards from AMD only have a single fan but they are working on updating TRIXX to properly show both fans here. I can assure you from my testing both fans are working properly; you will actually be able to see them spinning in some thermal photos I took below.

Once the test has completed, it will let you know the status of your fans.

Under the settings button, we find a few more things.

Settings allows you to toggle “Effective Memory Clock”, “Synchronize CrossFire Cards”, “Set clock on Change”, “Save Fan Settings with Profile” and “Disable ULPS” (Ultra Low Power State: a sleep state that lowers the frequencies and voltages of primary and non-primary cards to save power; it can also cause instabilities with CrossFire and single card configurations). The other settings let you “Load on Windows Startup”, start TRIXX minimized and restore clocks.

There is also “Graphics Card Info” which shows you information for the card. Most of the information is static information, if anything changed when overclocking it would update.

Here are the stock settings:

Here are the overclocked settings:

This also allows you to save the current bios by clicking “Save the BIOS” to store for your own purposes, give to a friend, share with the community or maybe adjust using another piece of software and flash back to the card.

Hardware Monitor allows you to see all of the specs of the card in real time as you are overclocking or running through games and benchmarks. Here you can see where the voltages are if a benchmark fails and then make adjustments, or if the voltages are needlessly high, you can spot that here and adjust as well.

“Log Now” allows you to create a log to save your sensor metrics to. Its output is similar to that of GPU-Z.

To keep costs down, they did not include an RGB lighting scheme on this card, but they did throw in some bling.

The back of the card has a NITRO logo that shines green through the PCB of the card.

Benchmarking builds up heat, I was able to use thermal imaging to see how the card reacted on each piece of the card. I used the Seek Compact Thermal Sensor that I will be reviewing soon to get this information.

This is the top of the card, and the picture was taken with the system idle. The white areas on the card are the hottest parts of the card. You can follow the legend on the left hand side of the picture to be able to tell the temperatures.

This is the bottom of the card; the hottest part was 34°C. You will also notice that the fans were not spinning since the card was not really being used.

This is the card running 3DMark on Firestrike Ultra.

Here is the bottom of the card seconds after taking the pic of the top of GPU running 3DMark. The bottom will really not show the heat like the top will since the top is just behind the GPU and the heatsinks are absorbing that heat, the GPU here is covered by the card housing and being actively cooled by the fans.

I also recorded this, so you can see it in action.

Sapphire did a great job with their Dual-X Cooling keeping the card cool.

Well, now it’s time to see what I thought overall and to see if you agree with my opinion.

[nextpage title=”Final Thoughts and Conclusions”]

There are some pros and some cons here, let’s check them out.

Pros

- 3 Ports to fit almost any monitor

- FreeSync Support

- Supports DX12

- 0dB Fan mode

- Quick Connect Fan Replacement

- Great GPU/Memory Speeds

- Amazingly affordable for the speeds provided

- Sapphire TRIXX 3.0 is a nice addon for the card

Cons

- Does not include any adapters or adapter cables

- Could be important for people that have 2 x DVI Monitors, 2 x HDMI Monitors, 2 x DP, 1 x VGA monitors, etc…

- Sapphire should have checked and made sure the Fan check worked properly, but that’s an easy fix.

I think with this card, Sapphire redefines budget; it can do so much. No adapters or cables are included, so make sure you have what you need before you buy this card.

The card stays cool and quiet, though you can raise the fan speed and even the card speed to better suit your gaming or professional needs. It’s a budget card, but it can play anything as long as you watch the eye candy: turn off or lower AA, AF and MSAA, and lower the resolution some, though it should be able to handle 1920×1080 in most games. The games I played, I played at 2560×1440, and you saw how great they played. It’s got plenty of room to overclock more than I did, but I think I gave you a good reference.

The card is amazingly well priced, and the price will only continue to drop. For what it is, the only thing I can harp on is the missing adapters, though many of you may have them lying around. That being said, if you don’t have them lying around, you can find yourself in a predicament where you need to go to a store and buy one, which can be around $50 additional, or you can buy one cheap online.

I give this card an Editor’s Choice. A great card for a great price, perfect for starters and even some regular PC usage.

I have spent many years in the PC boutique name space as Product Development Engineer for Alienware and later Dell through Alienware’s acquisition and finally Velocity Micro. During these years I spent my time developing new configurations, products and technologies with companies such as AMD, Asus, Intel, Microsoft, NVIDIA and more. The Arts, Gaming, New & Old technologies drive my interests and passion. Now as my day job, I am an IT Manager but doing reviews on my time and my dime.