We are influencers and brand affiliates. This post contains affiliate links, most of which go to Amazon and are Geo-Affiliate links to the nearest Amazon store.

Pulse is Sapphire’s answer to your wallet, but is it worth it? Sapphire has created a line, separate from its NITRO series cards, to give you a little more to game with at a smaller price tag. Sapphire’s catch here is toning down the LED lighting, getting rid of the buzzworthy aesthetics and pulling the reins back on the cooling and a few other things. You might be scared at the cooling part, but don’t worry, they did it right to save you a few bucks on this 570.

With that, today I bring you my review of Sapphire’s Radeon PULSE RX570 ITX 4GB 11266-06-20G. This card not only takes on the Sapphire Pulse branding, it also offers something a little different to our PC friends with ITX systems: a chance. The card is incredibly small, coming in at 6.69 inches long, which lets you keep your small form factor PC and still have the promise of power.

Let’s check out the specs on this card.

Specifications and Features

- Boost Engine Clock: 1244Mhz

- 4096MB GDDR5 256-bit RAM

  - 1750Mhz Memory Clock

  - 7000Mhz Effective Memory Frequency

- Compute Shaders: 2048

- Supports Crossfire

- 3 Output Maximum

  - 1 x DVI-D

  - 1 x HDMI 2.0b

  - 1 x Display Port 1.4

- Resolutions Supported

  - DVI-D

    - 2560 x 1600 (60Hz)

  - HDMI 2.0b

    - 3840 x 2160 (60Hz)

  - Display Port

    - 3840 x 2160 (120Hz)

- Supported APIs:

  - OpenGL 4.5

  - OpenCL 2.0

  - DirectX 12

  - Shader Model 5.0

  - Vulkan API

- Supported Features

- Power Consumption: 150 Watts

- System Requirements

  - 450 Watt Power Supply

  - Windows 10, 8.1, 8 or 7

- Form Factor

  - Length: 6.69in

  - Width: 4.41in

  - Depth: 1.42in
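For anyone wondering how the 1750Mhz memory clock turns into a 7000Mhz effective frequency, here is a rough sketch of the math. It assumes GDDR5's usual four data transfers per memory clock, and the function names are my own, made up for illustration. It also works out the memory bandwidth that follows from the 256-bit bus.

```python
# Rough memory math for the specs listed above.
# Assumption: GDDR5 moves 4 data transfers per memory clock cycle.
GDDR5_TRANSFERS_PER_CLOCK = 4


def effective_memory_mhz(memory_clock_mhz):
    """Effective (marketing) memory frequency in MHz."""
    return memory_clock_mhz * GDDR5_TRANSFERS_PER_CLOCK


def memory_bandwidth_gb_s(effective_mhz, bus_width_bits):
    """Peak theoretical bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return effective_mhz * 1_000_000 * bytes_per_transfer / 1_000_000_000


effective = effective_memory_mhz(1750)
bandwidth = memory_bandwidth_gb_s(effective, 256)
print(f"{effective} MHz effective, {bandwidth:.0f} GB/s peak")
```

This is a theoretical peak, so real world throughput will be lower.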

Let’s see just how tiny it is in an unboxing.

While I know you may want to skip over everything included in the package and get to the card, let’s pay Sapphire some respect and check it all out.

This sheet provides some information on the manufacturer. I don’t know why it comes on its own separate sheet, but here it is.

For those of you who don’t catch my reviews, the package includes a Quick Installation Guide. Don’t worry, like with all my reviews, I will show you how to install the card a bit later in this review.

The product registration pamphlet has all the information you need to register your card. On this pamphlet, they also include the serial number of your card. This is great because if you already have your card installed, you don’t need to remove it to write down the serial number. It’s the little things that make a difference.

The package also includes a driver disc. I do not recommend using it unless you have a horrible internet connection or none at all. The minute the driver disc is printed, the drivers are already out of date. I always recommend downloading the latest driver from AMD’s website, since newer drivers fix incompatibility and performance issues.

And now what you have all come for, Sapphire Radeon PULSE RX570 ITX 4GB 11266-06-20G. At first I thought you came here to see a picture of me… but I was told that this was not the case.

This is a Pulse, so it is a little cut back, and part of that cut was the additional output connections. You get the basics here and that helps cut back on the price. The connections here are DVI-D, HDMI and Display Port. On the shield you also see vents, which help vent heat from the card out of the case.

Pay attention here: the card does not come with any sort of adapters, so if you have 2 DVI-D, 2 HDMI, 2 Display Port or even VGA monitors, you will need to purchase adapters separately.

Working our way to the right, we find the front of the card. While it is a basic card, with no LED lights, they didn’t want to make it a completely basic looking card. We have the Pulse silver streak over the SAPPHIRE logo and a few accents embedded into the housing.

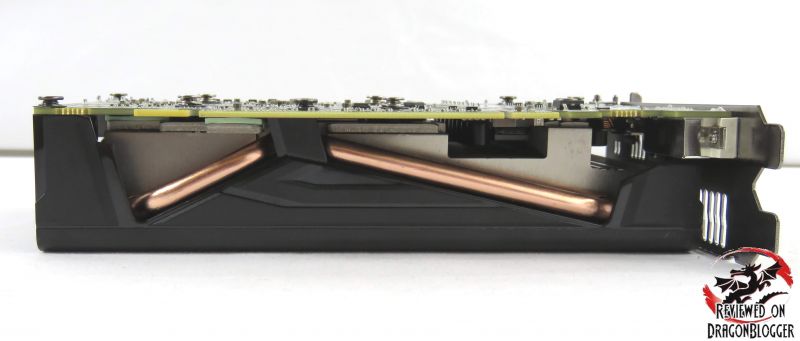

A closer shot shows these accents but also you can see a bit of the cooling solution, notice on the right the copper pipes.

Zooming out a bit, we find the single 6-Pin PCIe connection. Its big brother in the Nitro series comes with a 6-Pin and an 8-Pin connection, but does that mean we will get less performance? Don’t worry, we will find out soon enough.

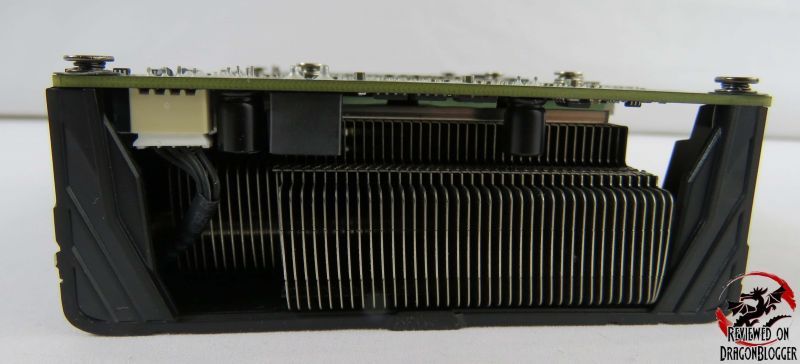

Even though they say they cut back on the cooling, you can see they do have their fair share of cooling attributes here. The back of the card exposes the heatsink fins, and earlier you saw the copper pipes. Potentially not as excessive as the NITRO series, but it looks good so far.

Turning the card a little more to the right, we see the underside of the card. It is very exposed, but it shows you the copper pipes and one side of the heatsink fins.

On the bottom of the card we find the single fan. It is a larger fan, which helps keep the card quieter. They could have used 2 smaller fans, but the noise level would have gone up.

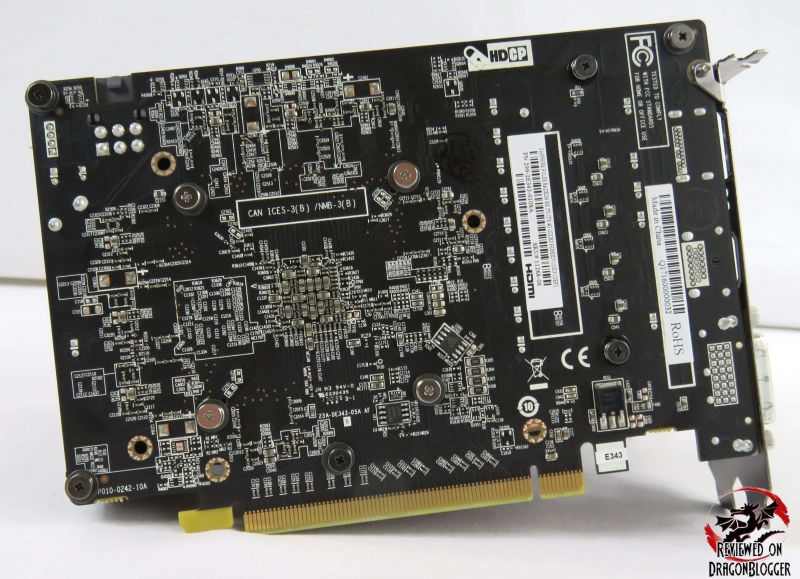

The back of the card is plain, no metal backing or fancy LED’s, just a basic black PCB with a bunch of traces, diodes and other components. Nothing to speak of here really.

On this next page, we will go over how to install the card, so let’s check that out. It is more for people who don’t really know how to install a video card, and maybe it will give those of you who do some pointers. Geez, maybe I need some pointers too. Please let me know.

[nextpage title=”Installing the Sapphire Radeon PULSE RX570 ITX 4GB”]

Every one of us at one point did not know how to install a video card and this chapter is to help you guys out. Every one of us started as noobs, so don’t worry.

In the video below, I will show you how to install the Sapphire Radeon Pulse RX570 ITX 4GB, but really it will help you with just about any other video card as well. Mind you, you may have to connect a different PCIe connection or other things, but this will help you with the basics, maybe even a little more.

Please remember to uninstall your older video drivers before installing this new card, or any newer card. You can download the newer drivers for your newer video card, but don’t install them until you have the newer card installed. Even if you are upgrading from an older AMD card to a newer AMD card, it is always recommended to have the latest and greatest video drivers.

Check out the video:

In that video, I show you how to remove an older video card and how to install a newer video card, in this case the Sapphire Radeon Pulse RX570 ITX 4GB.

Here is the finished product, pretty simple wasn’t it?

I installed the card in a full ATX case, but this card touts its compatibility with ITX cases. What is ITX? Mini-ITX is a small motherboard form factor, and the compact cases built around it are known as Small Form Factor (SFF) cases; this card is meant for them. SFF cases support smaller motherboards and video cards so that you can have a mini powerhouse without taking up too much space. One example is the Cougar QBX case. This also means the card may not be as powerful as its NITRO 570 counterpart, but we will get into that a little later.
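If you want to check whether the card will fit your own small case, a quick sketch like this converts the card's listed dimensions to millimeters and compares them against your case's GPU clearance. The clearance numbers below are hypothetical placeholders, not the specs of any real case, so swap in the figures from your case's manual.

```python
# Card dimensions from the spec list earlier in this review, in inches.
CARD_INCHES = {"length": 6.69, "width": 4.41, "depth": 1.42}
MM_PER_INCH = 25.4


def to_mm(inches):
    """Convert inches to millimeters, rounded to one decimal place."""
    return round(inches * MM_PER_INCH, 1)


def fits(card_mm, clearance_mm):
    """True when every card dimension is within the case clearance."""
    return all(card_mm[name] <= clearance_mm[name] for name in card_mm)


card_mm = {name: to_mm(value) for name, value in CARD_INCHES.items()}

# HYPOTHETICAL clearance numbers, not taken from any real case.
example_case_mm = {"length": 180.0, "width": 130.0, "depth": 40.0}

print(card_mm)
print("fits:", fits(card_mm, example_case_mm))
```

Remember to leave a little extra room for the 6-Pin power cable, which this simple check does not account for.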

Now that the card is in, go ahead and install the latest drivers.

Now that everything is installed and configured, let’s go ahead and check out the performance.

[nextpage title=”Benchmarks, Performance, Temperatures and Power consumption”]

Before I get into the performance, here is my system configuration so you can judge how comparable our systems are and what kind of performance you can expect.

- EVGA CLC 280 Liquid CPU Cooler: https://geni.us/6NAIJBN?m4F6

- Sapphire Radeon Pulse RX570 ITX: https://geni.us/6NAIJBN?9Hj8

- Intel Core i7-7700K Kaby Lake BX80677I77700K Processor: https://geni.us/6NAIJBN?5e87

- EVGA Z270 FTW K, 132-KS-E277-KR Motherboard: https://geni.us/6NAIJBN?xNpK

- Patriot Viper Elite Series DDR4 32GB 2800MHz: https://geni.us/6NAIJBN?iGZs

- COUGAR Panzer ATX Case: https://geni.us/6NAIJBN?U5dr

- Windows 10 Professional: https://geni.us/6NAIJBN?GYbBRY

- WD Black 512GB PCI-E NVMe M.2: https://geni.us/6NAIJBN?9H46

- WD Blue 500GB SSD WDS500G1B0A: https://geni.us/6NAIJBN?udvK

- Samsung 850 EVO 500GB SSD: https://geni.us/6NAIJBN?1gf0fs

- Kingston HyperX 240GB SSD: https://geni.us/6NAIJBN?8leEDW

- Patriot Ignite 2.5″ 480GB SATA III MLC SSD PI480GS25SSDR: https://geni.us/6NAIJBN?eoPVsG

- Cooler Master Silent Pro Gold 1200W Power Supply: https://geni.us/6NAIJBN?Umwm

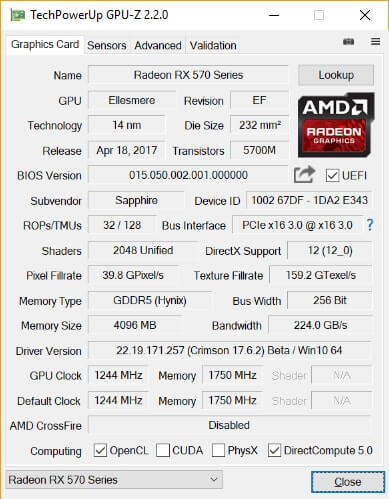

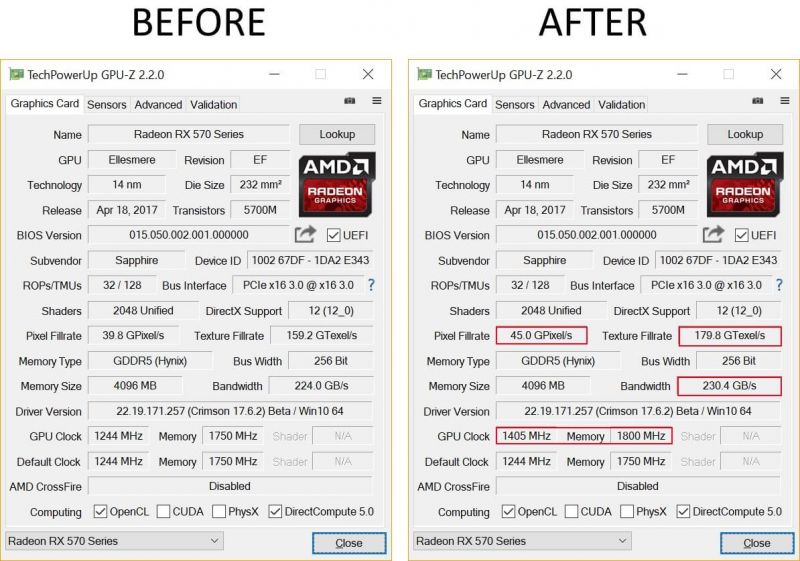

Specifications of my system aside, here are the GPU-Z readings of the card itself.

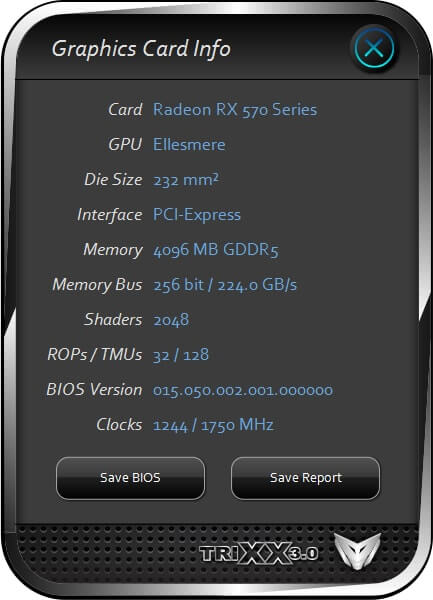

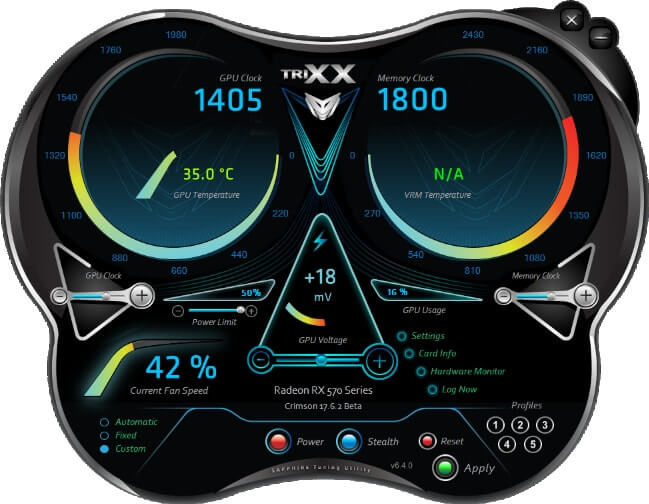

Here is a bit of the same from Sapphire’s own utility, TRIXX. This utility can help you change the fan speeds, GPU clock speeds, memory clock speeds and a host of other options.

I will utilize this program a little later in the review to unlock some hidden potential from this card, so be sure to continue reading. You can grab a copy of TRIXX from here: http://www.sapphiretech.com/catapage_tech.asp?cataid=291&lang=eng

This is some more information that TRIXX provides. You can see this card is based on AMD’s Ellesmere GPU. Ellesmere can offer up to 2304 stream processors on a 256-bit wide GDDR5 interface.

During the benchmarking I will be monitoring and of course reporting how much wattage is being consumed and to do that I will be using a “Kill A Watt” by “P3 International”.

I use the following applications to benchmark:

- FutureMark’s 3DMark Fire Strike

- FutureMark’s TimeSpy 2.0

- Metro Last Light

- Thief

- Tomb Raider

- Tom Clancy’s The Division

OK, let’s get started benchmarking

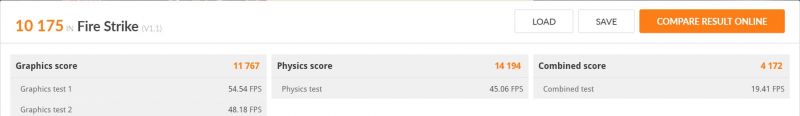

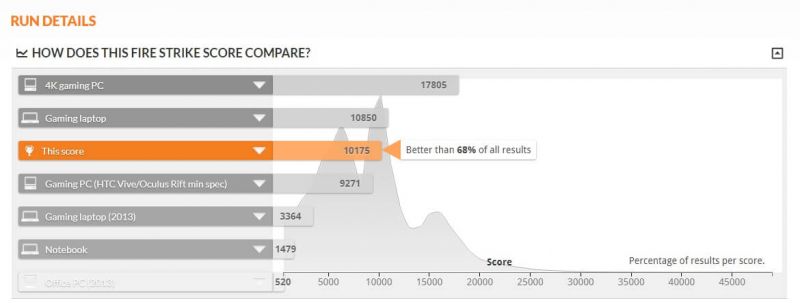

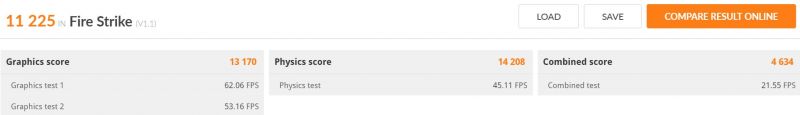

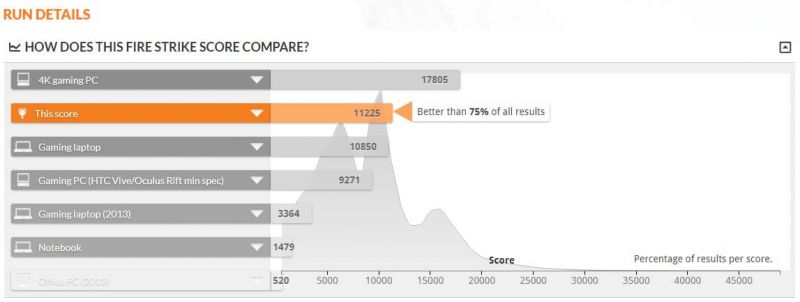

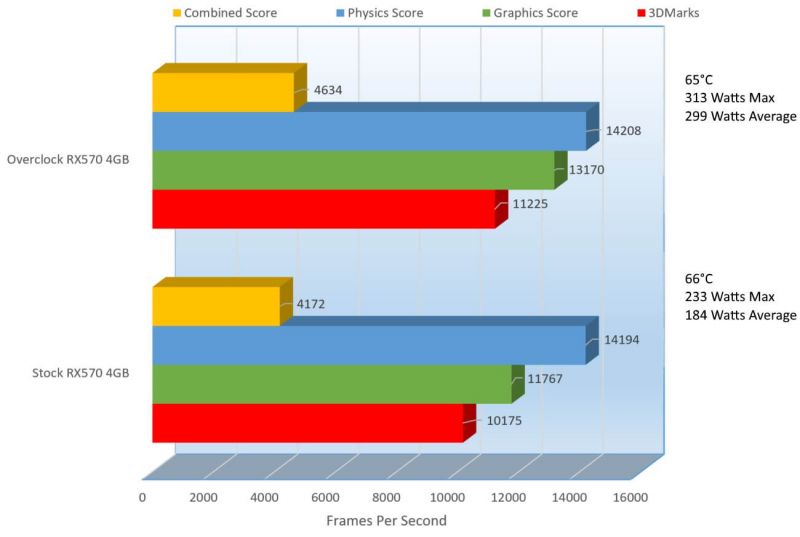

A score of 10,175 in 3DMark Fire Strike, not bad at all, and with that score we can see this system did better than 68% of all results.

During this test, the minimum power pulled by my machine was 98.7 Watts and the highest was 233 Watts. The most important number, however, is the average: the PC pulled 184 Watts on average, and that is amazingly low. During this testing, the GPU only heated up to 66°C.

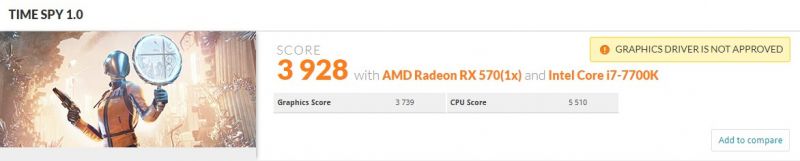

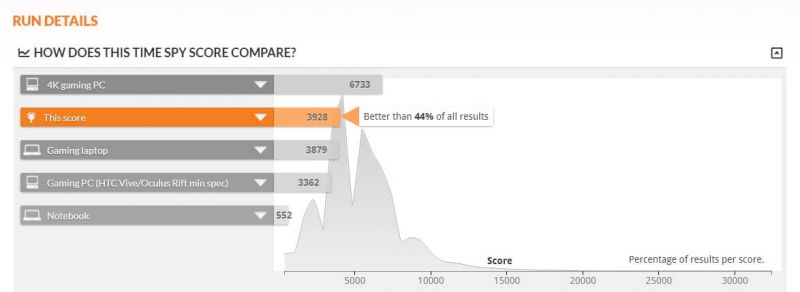

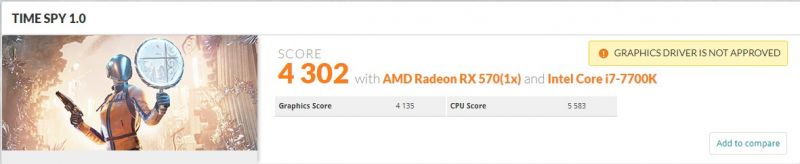

So let’s check out 3DMark’s sister benchmark, Time Spy.

This result is better than 44% of all other benchmarked systems. In this test, the average power consumption was a very low 178 Watts and the GPU heated up to 66°C. All looking good so far, and the temps are doing pretty well. 3DMark, however, is not a game. It gives you a point of reference for your GPU’s performance, maybe to compare with friends or with yourself as you tweak and tune your system. For real world performance we need to check out some games, so I will start off with Metro Last Light.

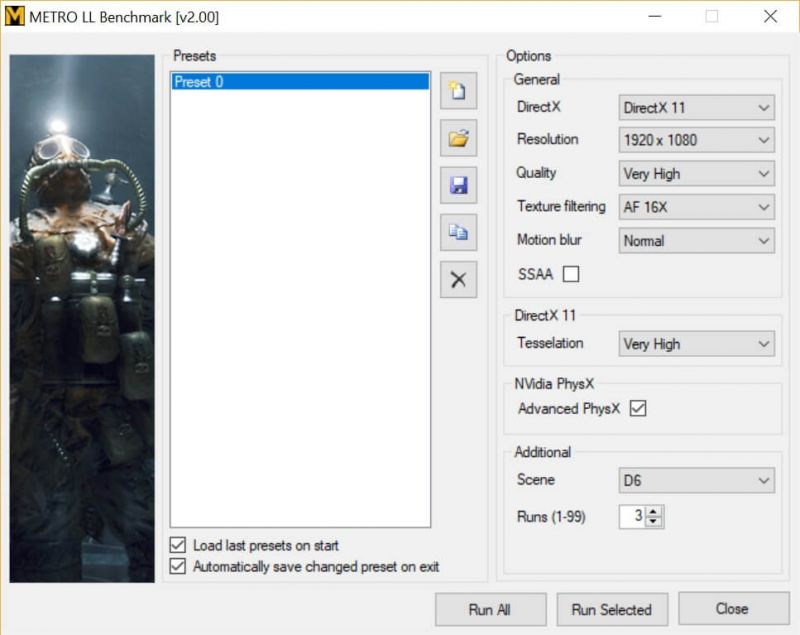

Before we start with the results, here are my settings for Metro Last Light. I will be testing at 1920 x 1080, 2560 x 1440 and 3840 x 2160 but aside from the resolutions, these settings will remain the same.

In my previous reviews I always had SSAA enabled. That destroyed the results and brought no benefit whatsoever to the quality of the image, so I have disabled it and will keep it disabled in all reviews. OK, enough talk, on to the results.

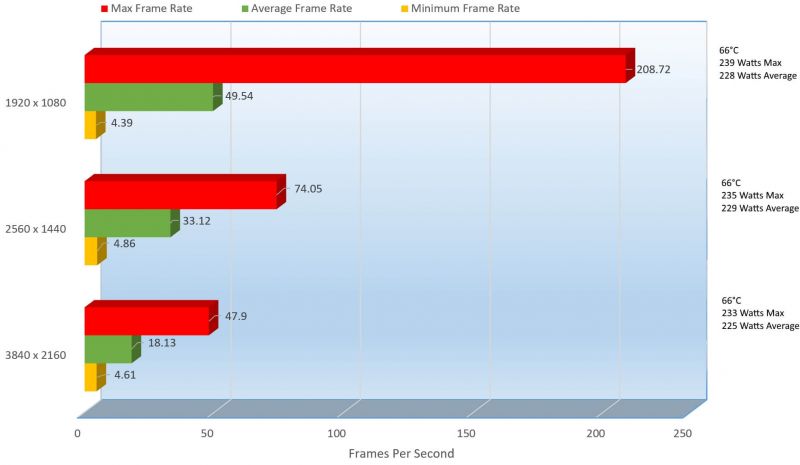

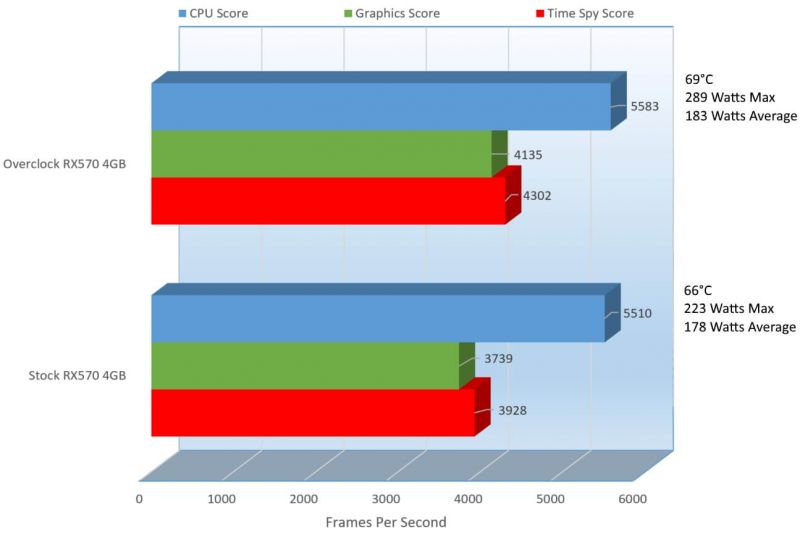

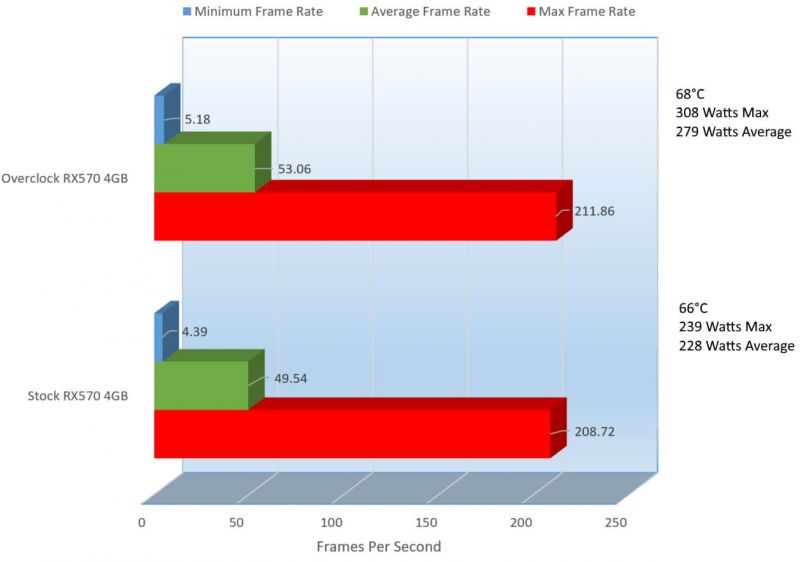

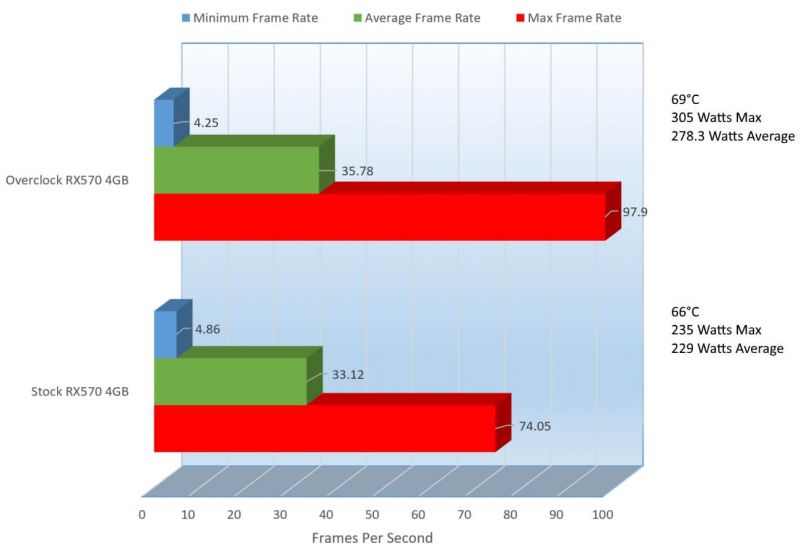

With all settings set to Very High, this card struggled a bit, though surely at Medium it would be able to handle everything just fine. At the lowest resolution of 1920 x 1080, it only picked up 49.54 frames per second at 66°C with an average power consumption of 228 Watts. Bumping the resolution up to 2560 x 1440, the performance dropped by 33.14% and the system actually consumed 1 more watt on average, while the temps stayed the same. At 3840 x 2160, the performance dropped yet again by a steep 45.26%, but honestly that was expected, as this card is not really meant for playing at 4K. Lowering the eye candy would help tremendously; this is a mid-tier card.

Metro has always been a video card slayer; it’s either poor optimization of the game’s engine or it is just that brutal. Without SSAA it is still a bit manageable, but it seems like at 1920 x 1080 there might be something else we can do to improve performance, since it’s so close to the magic 60 frames per second. We will work on that a little later in the review. So next up is THIEF.
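To put "so close to the magic 60" into numbers, here is a small sketch that turns a frame rate into a per-frame time budget and works out how much more performance the card needs to hit a target. The helper names are mine, and the only input is the 49.54 FPS stock result quoted above.

```python
def frame_time_ms(fps):
    """Milliseconds spent on each frame at a given frame rate."""
    return 1000.0 / fps


def fps_gain_needed(current_fps, target_fps=60.0):
    """Percent more frames needed, measured against the current rate."""
    return (target_fps / current_fps - 1.0) * 100.0


metro_1080p_fps = 49.54  # stock Metro Last Light result at 1920 x 1080

print(f"{frame_time_ms(metro_1080p_fps):.2f} ms per frame now")
print(f"{frame_time_ms(60):.2f} ms per frame at 60 FPS")
print(f"{fps_gain_needed(metro_1080p_fps):.1f}% more FPS needed")
```

In other words, the card has to shave a few milliseconds off every frame, which is the kind of gap an overclock or one lowered setting can plausibly close.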

Here is the configuration I used for THIEF’s benchmark, again only changing resolutions in between tests.

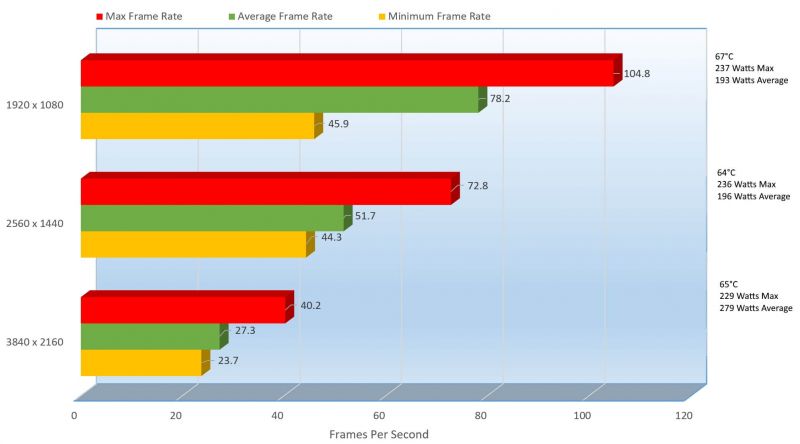

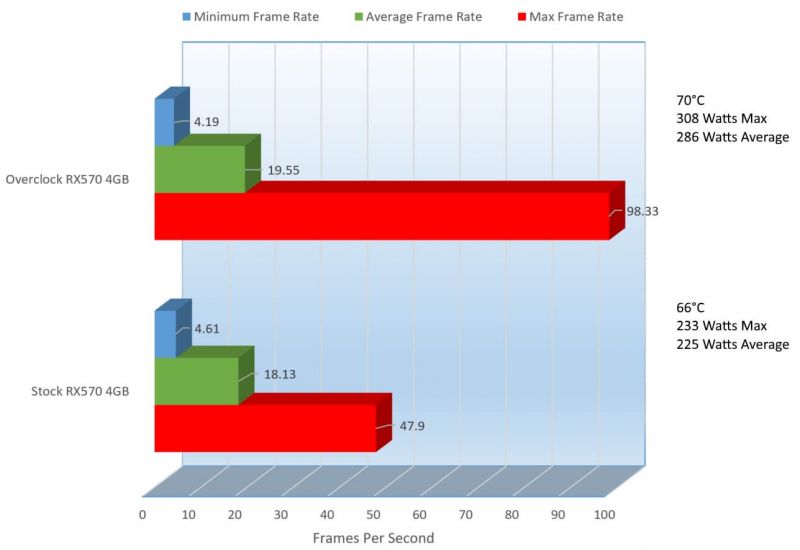

THIEF seems to be a bit better optimized, and the results are pretty nice. At 1920 x 1080, we have a very playable 78.2 frames per second and the power consumption was a very mild 193 Watts on average. With its performance gains, the temperature of the GPU only went up from Metro’s 66°C. With a little tweaking, it looks like THIEF at 2560 x 1440 would be playable, but it came in at 51.7 frames per second, 33.89% lower than at 1920 x 1080. Power consumption only went up 1.53 Watts, though the temperature did drop 3 degrees. At 3840 x 2160 things looked a little bleak, only pulling 27.3 frames per second, 47.20% lower than its 2560 x 1440 score. The temps did rise, but by only 1 degree, and the average power consumption did sharply rise 42.35%.

I don’t think we expected 4K UHD to play very well, but at 2560 x 1440, 51.7 FPS is pretty decent; I would say playable. So let’s check out Tomb Raider.
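One way to read the THIEF numbers is to line up each FPS drop with how many more pixels the card has to push at the next resolution. This is just a sketch using the frame rates quoted above; the helper names are my own.

```python
RESOLUTIONS = {
    "1920 x 1080": (1920, 1080),
    "2560 x 1440": (2560, 1440),
    "3840 x 2160": (3840, 2160),
}

# Average FPS from the stock THIEF runs in this review.
THIEF_FPS = {"1920 x 1080": 78.2, "2560 x 1440": 51.7, "3840 x 2160": 27.3}


def pixel_count(name):
    """Total pixels on screen for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def percent_drop(before, after):
    """Percent decrease measured against the starting value."""
    return (before - after) / before * 100.0


names = list(RESOLUTIONS)
for prev, cur in zip(names, names[1:]):
    more_pixels = pixel_count(cur) / pixel_count(prev)
    drop = percent_drop(THIEF_FPS[prev], THIEF_FPS[cur])
    print(f"{prev} -> {cur}: {more_pixels:.2f}x the pixels, {drop:.2f}% fewer FPS")
```

The jump to 3840 x 2160 more than doubles the pixel count again, which goes a long way toward explaining why the frame rate falls so hard there.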

Here is the configuration I used for the Tomb Raider benchmark, again only changing resolutions in between tests.

And now on to the results.

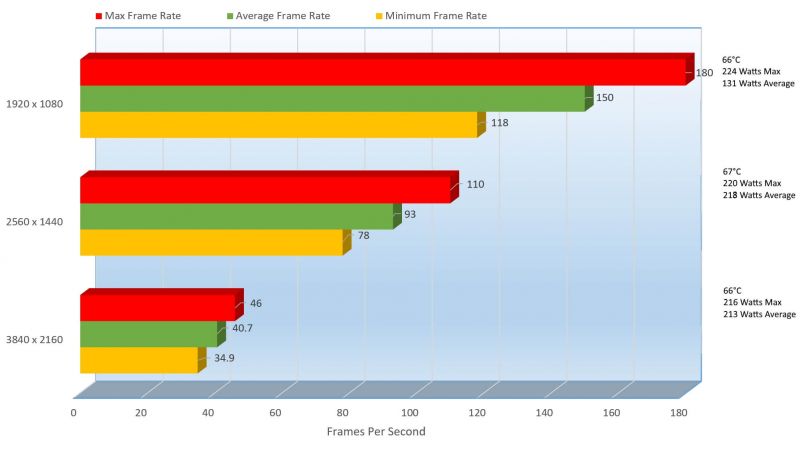

Lara kicked it up a bit here, and at 1920 x 1080 we can see a whopping 150 frames per second on average, heating up only to 66°C and consuming an average of 131 Watts. Then we step it up to 2560 x 1440 and we see the frame rate drop to a still very playable 93 FPS. The temps only went up 1 degree, but here is where things got a little bad: at 2560 x 1440, power consumption jumped 39.91% to 218 Watts, not horrible but a pretty big leap. Performance got pretty bad at 3840 x 2160, though, dropping considerably, by 56.24%, to 40.7 frames per second. I think we can take care of that a bit, and we will go over that a little later in the review.
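Since the Tomb Raider runs report both frame rate and power, a rough efficiency figure is easy to sketch. Keep in mind the wattage here is for the whole PC measured at the wall, not the card alone, so treat this as a relative comparison only. The function name is my own.

```python
# (average FPS, average system watts at the wall) from the Tomb Raider runs.
TOMB_RAIDER = {
    "1920 x 1080": (150.0, 131.0),
    "2560 x 1440": (93.0, 218.0),
}


def fps_per_watt(fps, watts):
    """Frames per second delivered for each watt the system draws."""
    return fps / watts


for resolution, (fps, watts) in TOMB_RAIDER.items():
    print(f"{resolution}: {fps_per_watt(fps, watts):.2f} FPS per watt")
```

The 3840 x 2160 run is left out because no wattage was quoted for it.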

My favorite benchmark, because I love the game, is Tom Clancy’s The Division, and it’s up next.

And here are my settings

Lots of settings there, but let’s jump into the results.

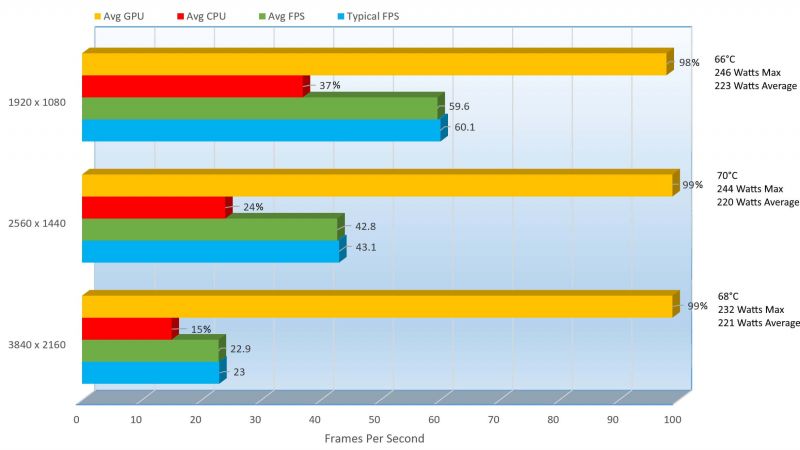

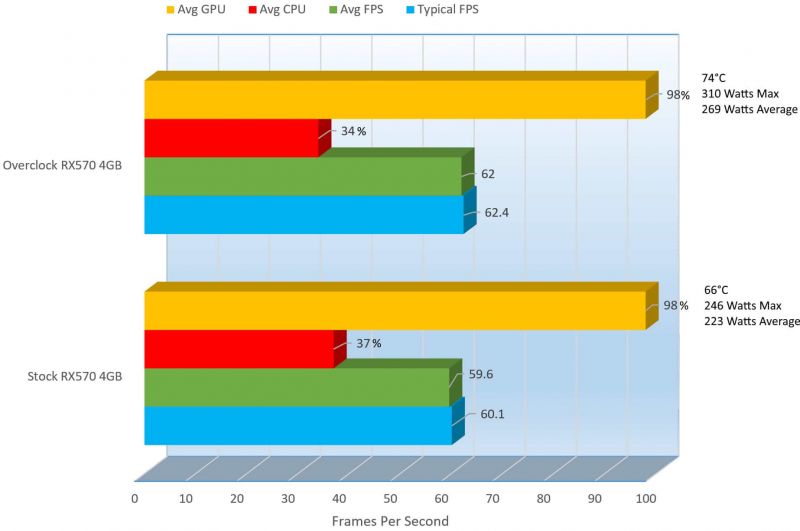

This one might look a little different than the others because the benchmark shows the percentage of CPU and GPU used as well.

At 1920 x 1080, the Typical FPS score was 60.1, totally playable, and the Average Frames Per Second was 59.6, which is also very playable but does not bode well for resolutions above this. At 1920 x 1080, the maximum temperature was 66°C and the average wattage consumed was 223.

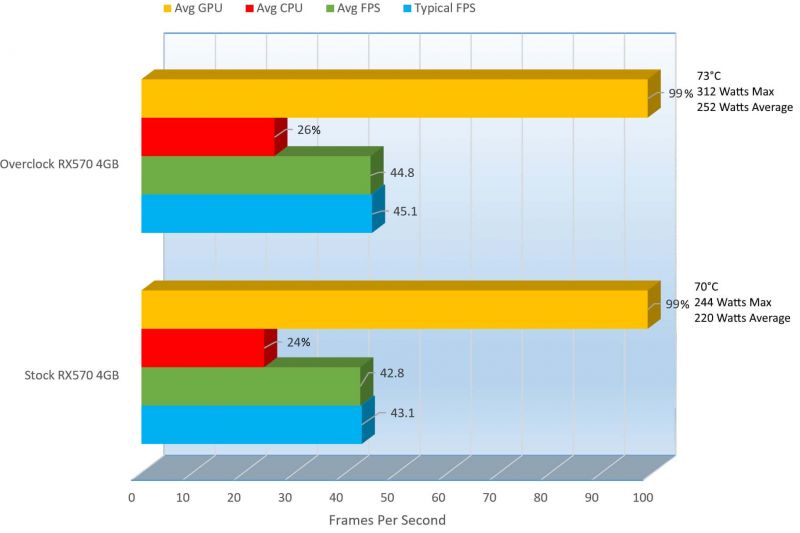

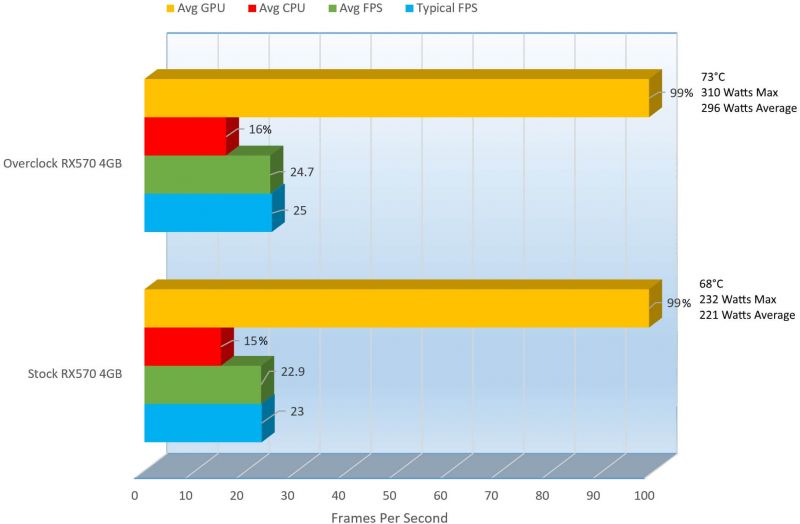

At 2560 x 1440, we can see there was a 28.55% decrease in Average FPS and a 28.29% decrease in Typical FPS, though that is to be expected since we did go up in resolution. At 3840 x 2160, we saw a more significant decrease of 46.50% in Average FPS, from 42.8 down to 22.9, with a temperature drop of 2°C and only 1 additional watt of power used.

2560 x 1440 seems to be the point where performance starts to suffer a bit in most games; still somewhat playable, but not 100%, at least at the presets we have set here. At 2560 x 1080 without all the eye candy is where I play most of my games on this card, maybe with High or Medium settings. Talk is cheap though, so let’s get into some gameplay so you can see what I mean.

[nextpage title=”Gameplay and Performance”]

So after hours of unboxing, installing and benchmarking, this is the part I like the most, the gameplay. I am a gamer at heart, but reviewing, my day job, my family and keeping up the house do not give me much time to game. This is my relaxing time... and I take a little more of it here than I should.

The games we will be trying here are Grand Theft Auto V, Battlefield One, Tom Clancy’s The Division, Overwatch and PLAYERUNKNOWN’S BATTLEGROUNDS. Sorry for the caps on that last one, that is how it is written. PB is new to me, I just got it for this review so it will be a bit different.

To start things off, we will check out Grand Theft Auto V.

So you can see, I played this game at 2560 x 1440 on DirectX 11 with most settings at Very High, and the frame rates ran from the upper 60’s to the lower 70’s. That’s nice.

Next up is Battlefield One.

Again, I played this game at 2560 x 1440 but this time at Medium… but it still looked and played great. The game ran in the mid 80’s, yeah 80+ frames per second and she played great. The Battlefield series is one of my favorite series of games, that and Command & Conquer, but that series is dead now. One of my newer favorite games is Tom Clancy’s The Division.

Let’s check out some Division gameplay.

So in The Division, I had most of the settings at High and the resolution at 2560 x 1440 and we get the upper 50’s and lower 60’s in Frames Per Second. Since mostly it would run in the 60’s, one or two settings turned down to medium would have kept this game in the 60’s or 70’s.

Now I don’t want to keep playing all the same games for you, I want to change it up sometimes so with that, I introduce Overwatch to the Performance section.

Let’s watch some Overwatch, pun intended, I mean if you can call it a pun.

OK, so I went a little crazy on this one. I had only played once or twice before, but I played this game at 4K, well, UHD, but close enough. I played at 3840 x 2160. Sorry for the screaming, I was trying to compensate for how loud the game was; I should have lowered the audio. Even though I was at 4K on the High preset, I was getting frame rates in the 60’s and 70’s. That’s awesome.

OK, and I have another game just for you guys (that’s what I tell my wife and kids): PLAYERUNKNOWN’S BATTLEGROUNDS.

I love this game. I just got it for this review and it is very cool; here’s a link to it on Amazon if you are interested https://geni.us/6NAIJBN?0Q1y. When I first started testing the game (the first half of the video), I was getting frame rates in the 30’s and 40’s, but at the 25-minute mark I changed my resolution from 2560×1440 at Medium to 1920×1080, still at Medium, and my frame rate went to the upper 50’s and into the 60’s. At around the 32-minute mark I changed from Medium to High settings and I remained in the 50’s and 60’s.

PLAYERUNKNOWN’S BATTLEGROUNDS is an early release game, so it might still be a bit buggy, but I got it now because I am sure that when it does get released it will get into the $60’s and I won’t want to pay for it. Not because it is not good, it is, but I am cheap and I would not want to spend that much money.

OK, so now with the constant and subtle teases, I bring you some benchmarking with the card overclocked a bit. I am not the best, and I do not claim to be the best overclocker but I did get some more juice out of this card, let’s see how it helped, I should say if it helped.

[nextpage title=”Overclocking Performance, Benchmarks, Temperatures and Power Consumption “]

Lots of time was spent on this, more than usual because of how interesting the results were. Overclocking takes some patience, plus a pen or pencil and paper, since you will be recording your settings. Mind you, you can use Notepad.exe, but if the machine crashes from a bad overclock, you lose everything, or at least everything not saved.
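If you would rather not use pen and paper, one option is a tiny script that appends each attempt to a file and forces it onto the disk right away, so a crash from a bad overclock is less likely to take your notes with it. This is only a sketch: the file name, column names and example values are my own choices, not something TRIXX or WattMan produces.

```python
import csv
import os
from datetime import datetime


def log_overclock_attempt(path, core_mhz, memory_mhz, power_limit_pct, note=""):
    """Append one row to a CSV file and force it to disk before returning."""
    is_new = not os.path.exists(path)
    with open(path, "a", newline="") as handle:
        writer = csv.writer(handle)
        if is_new:
            # Write the header only once, when the file is first created.
            writer.writerow(["time", "core_mhz", "memory_mhz", "power_limit_pct", "note"])
        writer.writerow([
            datetime.now().isoformat(timespec="seconds"),
            core_mhz, memory_mhz, power_limit_pct, note,
        ])
        handle.flush()             # push Python's buffer to the OS
        os.fsync(handle.fileno())  # ask the OS to write it to the drive


if __name__ == "__main__":
    # Example values only; the power limit and note are placeholders.
    log_overclock_attempt("overclock_log.csv", 1405, 1800, 50, "example entry")
```

Call it once before each benchmark run with the settings you are about to test, then add a second row with the outcome if the system survives.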

Below I will list the before and after results of the overclock so that you can compare.

First off is with GPU-Z

You can see we have overclocked the GPU Clock from 1244Mhz to 1405Mhz, a 161Mhz boost, that’s an 11.46% increase in GPU Clock speed. For RAM, a very modest 1750Mhz to 1800Mhz, a tiny 2.78% increase in the Memory clock. Mind you, I could have gone MUCH further, but I had already spent too much time on this. If/when you buy this card, surely you can go further, and it would be great if you could let us know in the comments below what you obtained.

Overclocking the GPU and Memory not only increased those clocks, it also improved the Pixel Fillrate by 11.56%, the Texture Fillrate by 11.46% and finally the bandwidth by 2.78%. So let’s check out what TRIXX shows.
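For anyone checking the math, a percent change can be measured against the stock value or against the overclocked value, and the two give different answers. The sketch below computes both from the clocks quoted above. The 11.46% and 2.78% clock figures match the second convention (difference divided by the overclocked value); measured against stock, the same boosts come out a little higher. The function names are mine.

```python
def percent_of_stock(stock, overclocked):
    """Change measured against the starting (stock) value."""
    return (overclocked - stock) / stock * 100.0


def percent_of_overclocked(stock, overclocked):
    """Change measured against the ending (overclocked) value."""
    return (overclocked - stock) / overclocked * 100.0


CLOCKS = [("GPU clock", 1244, 1405), ("Memory clock", 1750, 1800)]

for label, stock, oc in CLOCKS:
    print(f"{label}: {percent_of_stock(stock, oc):.2f}% vs stock, "
          f"{percent_of_overclocked(stock, oc):.2f}% vs overclocked")
```

Either convention is fine for comparing runs as long as you stick to one, but "percent increase" usually means the first.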

BEFORE

AFTER

A little less telling, but this is the program I used to overclock. I also used AMD’s own WattMan.

This program helped me figure out a problem I had where my overclocks were not registering correctly. GPU-Z and TRIXX both showed the card was overclocked, but WattMan showed me the true clock speeds and the wattage. Using WattMan, I learned that I had to raise my Power Limit and boom, the overclock started working. WattMan is not a separate download; it is included in the AMD drivers.

To find WattMan, with the AMD drivers installed, right click on a clear area of the desktop and select “AMD Radeon Settings”. In Radeon Settings, click “Global Settings”, then click on “Global WattMan”. This made my overclocking take a lot less time.

Now to overclock, I first modified the GPU Clock

Then I also raised the Power Limit.

As well as the GPU Voltage

The Memory Clock

Fan profile

With Custom Fan Speed, we can select what speed the fans will rev up to when the GPU hits a specific temperature threshold. The settings above are what I set for the overclock, but even if you buy this GPU you may not be able to hit these scores, or you may actually be able to get better scores. It’s a lottery, really.
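To illustrate the idea of a custom fan curve, here is a minimal sketch that maps a GPU temperature to a fan speed from a handful of points. The curve points are hypothetical, not the ones I used, and I am assuming a straight-line ramp between points; a real utility may step or smooth the speed differently.

```python
# HYPOTHETICAL curve points: (GPU temperature in °C, fan speed in %).
FAN_CURVE = [(40, 20), (60, 40), (70, 60), (80, 100)]


def fan_speed_for(temp_c, curve=FAN_CURVE):
    """Linearly interpolate between curve points, clamping at both ends."""
    if temp_c <= curve[0][0]:
        return float(curve[0][1])
    if temp_c >= curve[-1][0]:
        return float(curve[-1][1])
    for (t_lo, s_lo), (t_hi, s_hi) in zip(curve, curve[1:]):
        if t_lo <= temp_c <= t_hi:
            fraction = (temp_c - t_lo) / (t_hi - t_lo)
            return s_lo + fraction * (s_hi - s_lo)


for temp in (35, 66, 75, 90):
    print(f"{temp}°C -> {fan_speed_for(temp):.0f}% fan speed")
```

A steeper ramp in the upper temperature range trades a bit more noise for lower temps, which is the lever to pull if an overclock runs hotter than you like.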

TRIXX has a lot of uses, Overclocking and Custom Fan Speeds are two of them. Time for the comparison.

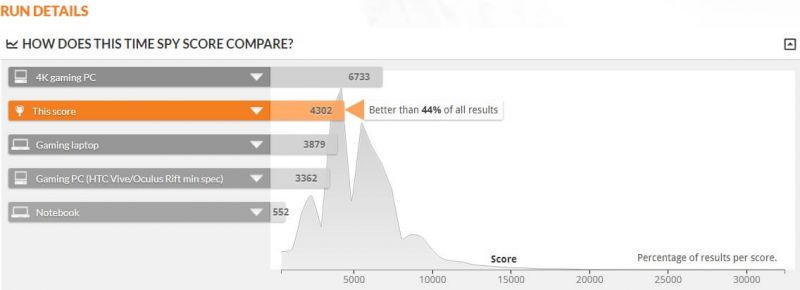

If you remember, at stock we scored better than 68% of other benchmarked systems, but now with the overclock we are better than 75% of benchmarked systems. A 7 point improvement is very nice, but let’s compare all of the results.

Wow, a nice increase here. On the total 3DMark score, we can see a 9.35% increase in performance. The Physics score improved by only 0.10%, but the Combined score improved by 9.97% and the Graphics Score by 10.65%.

Now let’s compare Time Spy.

Sadly, the overclock did not raise the system’s ranking here in Time Spy, but let’s see what the overall score improvement is.

On Time Spy, there is not much of an increase, but there is still an improvement. The Time Spy score went up 8.69%. The Graphics Score went up 9.58% and the CPU score a tiny 1.31%. The temperature did go up 3 degrees and there was only a 2.73% increase in average power consumption. Odd that 3DMark liked it but Time Spy not so much; at least it was not negative.

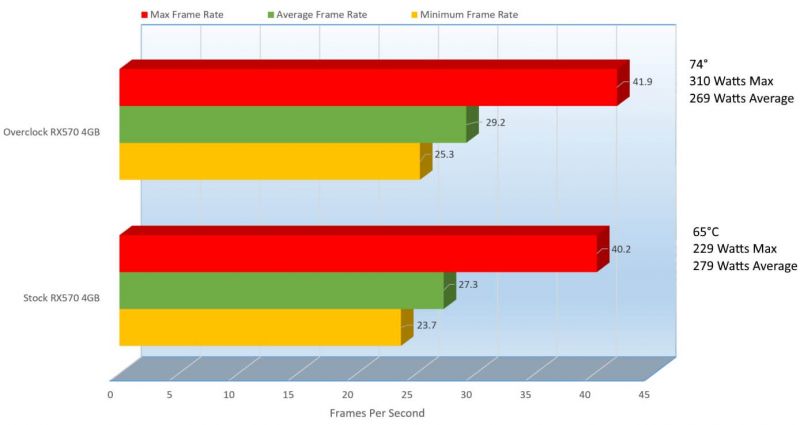

Since there will be a lot more information here, I will break the benchmarks into resolutions. Let’s start with 1920 x 1080.

1920 x 1080

On average, we can see that performance improved by 6.63%, with a 2°C increase in temperature and 18.30% more power consumed than at stock. Minimum and Maximum improved as well, so the score went up across the board, and that is a great sign. Now on to 2560 x 1440.

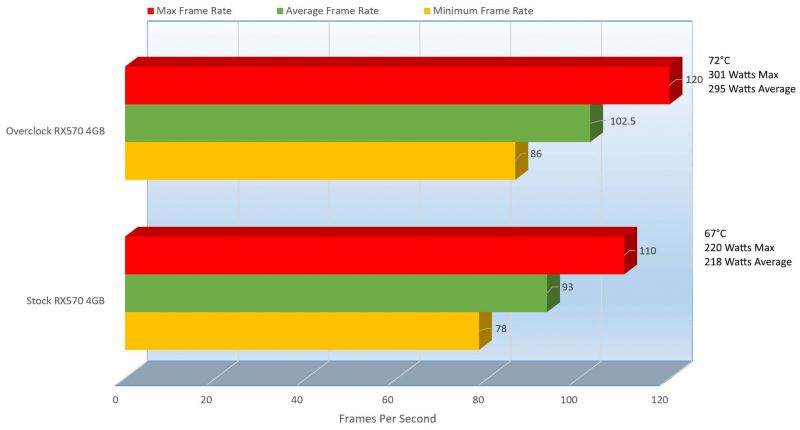

2560 x 1440

We can see yet another improvement here, though it’s not across the board. On average there is a 7.43% improvement, which is the most important number, and on Max Frame Rate we can see a huge improvement of 24.36%. Max is not as important as the Average, but it is nice to see some spikes. Minimum is where we see a decrease, but it’s not too bad at only 12.55%. The increase in Max is about two times the decrease in Minimum, but both are still not as important as the Average score. Let’s hope we can keep this momentum. Let’s check 3840 x 2160.

3840 x 2160

It seems the trend continued, not only on Average but on Max and Minimum. On average the performance improved by 7.26%, on Max by 51.29%, and Minimum decreased by 9.11%. The gap between the Max gain and the Minimum loss was much greater this time, around six times, so that was good, but at 4K that average still cannot cut it. With a 570, though, there was never a doubt that performance at 4K would not be good, especially with Very High or Ultra settings. On Metro, the overclock helped 1920 x 1080 become more playable, but the card still suffered a bit at 2560 x 1440 and 3840 x 2160.

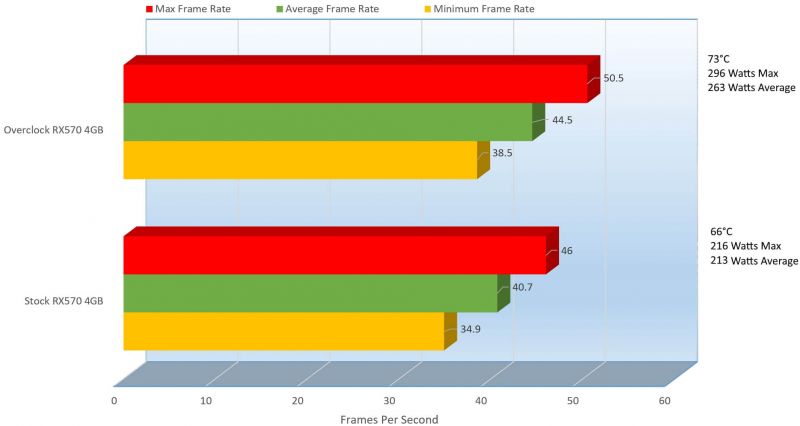

So let’s do some comparison with Thief.

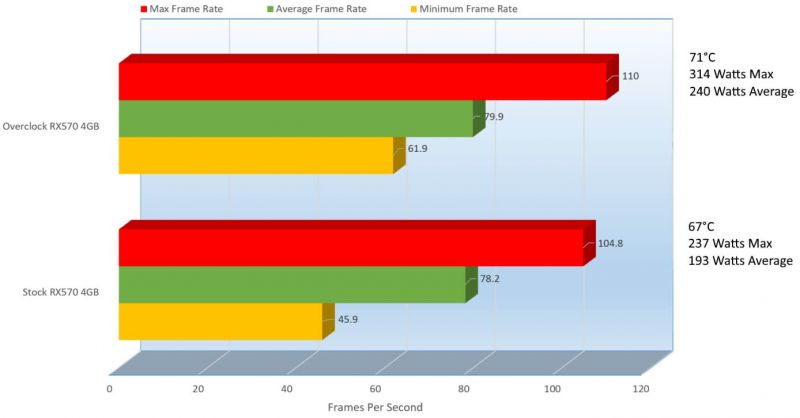

1920 x 1080

OK this is good, even though the performance was spot on before, it just gets better here. On average, we can see a 2.13% increase in performance. On Max, we can see a 4.73% increase and on Minimum, we can see a greater improvement of 25.85%. Even though they were marginal improvements, an improvement is an improvement.

Now the bad part is that with the improvement came a huge wattage increase. On average, the overclock consumed 19.59% more power and increased thermals by 5.63%. Is that improvement worth it?

While you think about that, let’s check out 2560 x 1440.

2560 x 1440

I really dislike trends, but it’s happening again. On average, we can see there was a 6.17% increase in performance. On Max, we can see an 8.31% improvement, but then on Minimum there was a decrease in performance of 4.06%. Again, Minimum is about as important as Max, which is not very much, since both signify spikes in performance. What matters is the Average frames per second.

3840 x 2160

Even at 3840 x 2160, the overclock could not save it. While there was an improvement across the board, it was minuscule. On average, we pull an additional 6.51% in performance, and power consumption actually dropped 3.58%, though the temperature increased 12.16%; surely a fan curve change would fix that. Minimum and Maximum improved as well, but nothing significant to speak of.

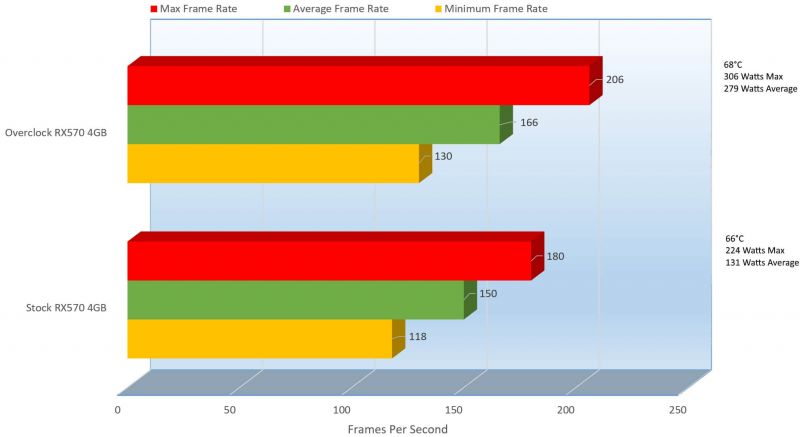

Let’s get a little closer to Lara and see what she has to say about things.

1920 x 1080

Alright, some nice increases here at 1920 x 1080. On average, we gained a 9.64% improvement in performance. The problem is Lara had no problem eating up the wattage; the system tore through 53.04% more power than at stock, but I did raise the power limit to its maximum. I might have been able to get away with a setting of 40, but I figured I would max it out. The temperature here only went up about 2 degrees. Like with most of the rest, Max and Min improved as well.

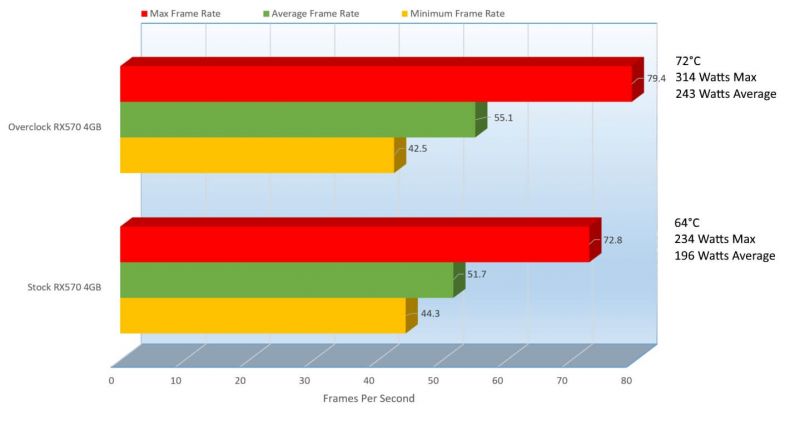

2560 x 1440

Yet again, Lara takes the stage and takes no prisoners. On average, we can see a 9.27% improvement in performance; at 2560 x 1440, this game is still amazingly playable even on Ultra. Though, like a trainer packs on the carbs for a massive workout, Tomb Raider packs on the power draw, consuming 26.10% more power and producing 6.94% more heat, though that is still very manageable.

3840 x 2160

I thought we had some good momentum, but it looks like the 4K beast was too much even for Lara. While there was an increase throughout this test, it still did not help to make this very playable. On average, there was an 8.54% increase in performance; the power draw increase was slightly smaller at only 19.01%, and the temperature increased by 9.59%. Both increases are manageable, and with a better overclock the performance would have been better. Last up is Tom Clancy’s The Division.

1920 x 1080

At 1920 x 1080, you can see that before it fell just shy of 60 FPS; now overclocked we get 62 frames per second, a 3.87% increase in performance. With the overclock we can also see that the dependency on the CPU dropped 3%. Typical FPS also went up 2.3%. The temperature did increase 10.81%, however, and power consumption rose 17.10%. Do you think it’s worth it?

2560 x 1440

It does not look like 2560 x 1440 made the cut, even though there was still an improvement in all aspects. On average, performance rose 4.46%, temperature only 4.11% and power consumption 12.70%. It is not very playable at 44.8 frames per second, but as I have mentioned in previous results, dropping some eye candy will help if you really want to play at 2560 x 1440. The other scores did rise, but here the dependency on the CPU actually went up 2%. Let’s check out 3840 x 2160, but I would not get your hopes up on this one.

3840 x 2160

It was pretty bleak to begin with but still, there were gains. On average, there was a 7.29% increase in performance, with a 5° increase in thermals and a rather large 25.33% increase in power consumption. This crippled the card with such high settings, but this card is not really meant for 4K, but it pulls its weight where it can. Here as well, the CPU tried to pull a little more coming up 1%.

Finishing this off, let’s see how this card does on my final thoughts and conclusions.

[nextpage title=”Final Thoughts and Conclusions”]

This to me was a struggle between David and Goliath of sorts, the Sapphire Radeon Pulse RX570 ITX 11266-06 vs the Sapphire Radeon NITRO RX570 11266-14. I did not have a NITRO to benchmark with, but I tried my hardest to surpass it by overclocking and I did pull the GPU well beyond that of the NITRO. The NITRO’s GPU clock is set to 1340Mhz, while the overclock was at 1405Mhz. The NITRO has 2 Fans and a larger/wider heatsink, whereas the ITX only has one fan and a smaller heatsink, but their uses are potentially similar, just different landscapes, maybe.

A NITRO would be used more for full systems with plenty of space and cooling, and the ITX is made for much more cramped systems that might be a little tight on cooling as well. Sapphire did an amazing job cramming all of that into such a small card while maintaining close to the same performance. I might have been able to tap more out of it than even the NITRO can provide, but I will leave that comparison till the day I have one to review.

Let’s check out the Pros and Cons.

Pros

- DVI, HDMI and Display Port available

- Supports 4K displays

- FreeSync Support

- Supports DX12

- 0DB Fan mode

- Great GPU/Memory Speeds

- Relatively affordable for the speeds provided

- Sapphire TRIXX 3.0 is a nice Utility

- Tiny Size to fit in most spaces

- Only requires a single 6Pin PCIe connection

Cons

- Only includes one of each output for video

- Does not include RGB LEDs

- Does not include any adapters or adapter cables

  - Could be important for people that have 2 x DVI Monitors, 3 x HDMI Monitors, 3 x DP monitors, etc…

The RGB LEDs and additional adapters are nice touches, but this card is meant to be affordable, so they are not to be expected. I cannot really call them cons, but they are worth mentioning. The biggest con, the lack of additional outputs, tacked on with the lack of adapter cables, does degrade the score a tiny bit. Still, can you imagine a PC the size of a small printer hooked up to 2 monitors and able to play games at a decent resolution? That’s a winner in my opinion.

Additional adapters or an additional output would have put this as an Editor’s choice but I can only give it a Highly Recommended. If it were a single slot card, then it would have been an Editor’s choice because there was no more room, but there is on this card. Do you agree with my call? Let me know what you think below, you could make the difference.


I have spent many years in the PC boutique name space as Product Development Engineer for Alienware and later Dell through Alienware’s acquisition and finally Velocity Micro. During these years I spent my time developing new configurations, products and technologies with companies such as AMD, Asus, Intel, Microsoft, NVIDIA and more. The Arts, Gaming, New & Old technologies drive my interests and passion. Now as my day job, I am an IT Manager but doing reviews on my time and my dime.